Sparse Autoencoders Learn Monosemantic Features in Vision-Language Models

Mateusz Pach, Shyamgopal Karthik, Quentin Bouniot, Serge Belongie, Zeynep Akata

2025-04-04

Summary

This paper is about making vision-language models, AI models that process both images and text, easier to interpret and control.

What's the problem?

It's hard to know exactly what these AI models are learning and how to control their behavior.

What's the solution?

The researchers trained Sparse Autoencoders on the models' internal representations to extract more distinct features, each tied to a single concept, which makes the models easier to understand and control.

Why does it matter?

This work matters because it can help us build more reliable and controllable AI systems that combine vision and language.

Abstract

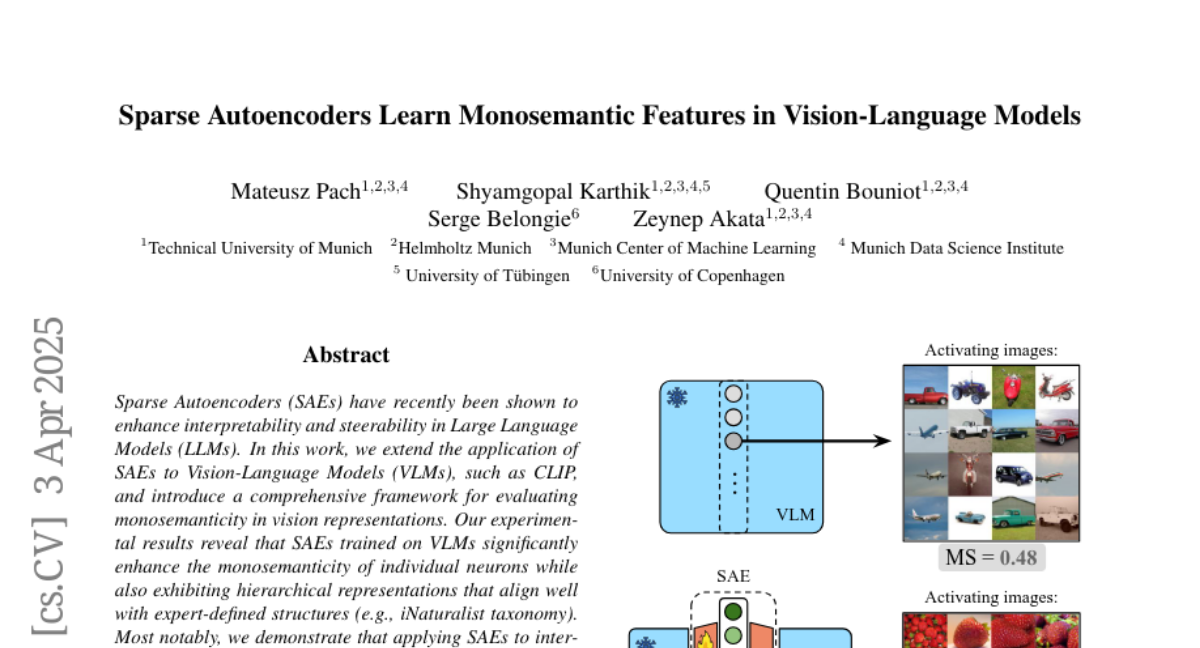

Sparse Autoencoders (SAEs) have recently been shown to enhance interpretability and steerability in Large Language Models (LLMs). In this work, we extend the application of SAEs to Vision-Language Models (VLMs), such as CLIP, and introduce a comprehensive framework for evaluating monosemanticity in vision representations. Our experimental results reveal that SAEs trained on VLMs significantly enhance the monosemanticity of individual neurons while also exhibiting hierarchical representations that align well with expert-defined structures (e.g., iNaturalist taxonomy). Most notably, we demonstrate that applying SAEs to intervene on a CLIP vision encoder directly steers the output of multimodal LLMs (e.g., LLaVA) without any modifications to the underlying model. These findings emphasize the practicality and efficacy of SAEs as an unsupervised approach for enhancing both the interpretability and control of VLMs.
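To make the idea of an SAE-based intervention concrete, here is a minimal NumPy sketch. It is an illustration under stated assumptions, not the authors' implementation: the abstract does not specify the SAE variant, so a plain ReLU autoencoder with an L1 sparsity penalty is assumed, the weights are random rather than trained, and the dimensions and the names `encode`, `decode`, `sae_loss`, and `steer` are hypothetical. Random vectors stand in for activations of a vision encoder such as CLIP.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes; real vision-encoder activations are much wider.
d_model, d_latent = 8, 32

# Randomly initialised (untrained) SAE parameters, for illustration only.
W_enc = rng.normal(scale=0.1, size=(d_model, d_latent))
b_enc = np.zeros(d_latent)
W_dec = rng.normal(scale=0.1, size=(d_latent, d_model))
b_dec = np.zeros(d_model)


def encode(x):
    """Map activations to non-negative latent codes via ReLU."""
    return np.maximum((x - b_dec) @ W_enc + b_enc, 0.0)


def decode(z):
    """Map latent codes back to the activation space."""
    return z @ W_dec + b_dec


def sae_loss(x, l1_coeff=1e-3):
    """Reconstruction error plus an L1 penalty that encourages sparse codes."""
    z = encode(x)
    recon = np.mean(np.sum((x - decode(z)) ** 2, axis=-1))
    sparsity = np.mean(np.sum(np.abs(z), axis=-1))
    return recon + l1_coeff * sparsity


def steer(x, latent_index, value):
    """Clamp one latent to `value` and decode, keeping the reconstruction error."""
    z = encode(x)
    error = x - decode(z)  # the part of x the SAE fails to reconstruct
    z_steered = z.copy()
    z_steered[..., latent_index] = value
    return decode(z_steered) + error


x = rng.normal(size=(4, d_model))  # stand-in for vision-encoder activations
x_steered = steer(x, latent_index=3, value=5.0)
loss = sae_loss(x)
```

In this sketch, steering shifts each activation along a single decoder direction (`W_dec[3]`) and leaves everything else unchanged, because the SAE's reconstruction error is added back. In the setting the abstract describes, the steered activations would then be passed on to the multimodal LLM in place of the originals, with no change to the model's own weights.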