Sparse VideoGen2: Accelerate Video Generation with Sparse Attention via Semantic-Aware Permutation

Shuo Yang, Haocheng Xi, Yilong Zhao, Muyang Li, Jintao Zhang, Han Cai, Yujun Lin, Xiuyu Li, Chenfeng Xu, Kelly Peng, Jianfei Chen, Song Han, Kurt Keutzer, Ion Stoica

2025-05-28

Summary

This paper introduces Sparse VideoGen2 (SVG2), a method that helps AI models generate videos faster and with better quality by focusing computation on only the most important parts of the input.

What's the problem?

Generating videos with AI usually takes a lot of time and computing power. Video models must process a huge number of tokens, and the cost of their attention step grows quadratically with that number, yet much of this computation contributes little to the final result.

What's the solution?

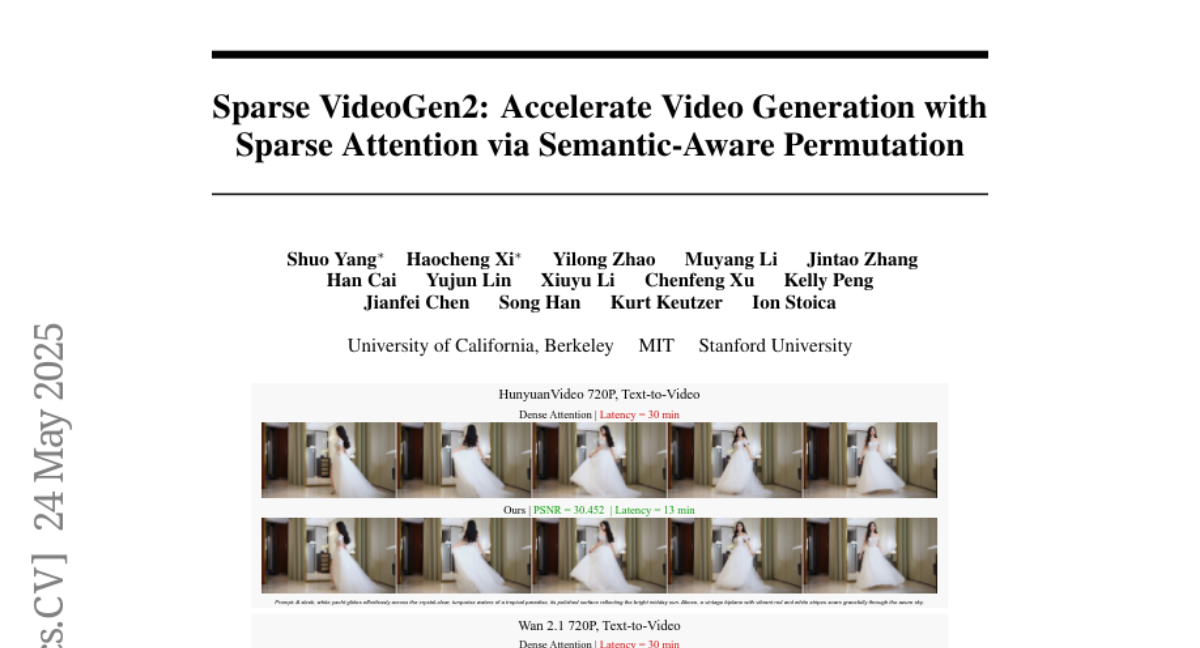

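The researchers designed SVG2 to identify the most important tokens and compute attention only over them. It combines two ideas: semantic-aware permutation, which groups tokens by semantic similarity and reorders them so that similar tokens sit next to each other, and dynamic budget control, which adjusts how much computation is spent at each step. Because SVG2 needs no additional training, it speeds up video generation without retraining the model.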
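To make "semantic-aware permutation" concrete, here is a minimal pure-Python sketch. It assumes tokens are grouped with plain k-means on their feature vectors and then sorted by cluster label, so each cluster becomes one contiguous block (the layout that block-sparse attention kernels work on). The names `kmeans` and `semantic_permutation` are hypothetical; this illustrates the idea and is not the authors' implementation.

```python
import random
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def _sq_dist(a: Vector, b: Vector) -> float:
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points: List[Vector], k: int, iters: int = 20, seed: int = 0) -> List[int]:
    """Plain Lloyd's k-means; returns one cluster label per point."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    labels = [0] * len(points)
    for _ in range(iters):
        # Assignment step: each point joins its nearest centroid.
        labels = [min(range(k), key=lambda c: _sq_dist(p, centroids[c])) for p in points]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # keep the old centroid if a cluster empties out
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels


def semantic_permutation(tokens: List[Vector], k: int) -> Tuple[List[int], List[int]]:
    """Return (perm, sizes): original token indices reordered so tokens in the
    same cluster are contiguous, and the length of each contiguous block."""
    labels = kmeans(tokens, k)
    perm = sorted(range(len(tokens)), key=lambda i: (labels[i], i))
    sizes = [labels.count(c) for c in range(k)]
    return perm, sizes


if __name__ == "__main__":
    # Two semantic groups whose tokens are interleaved in the original order.
    toks = [(0.0, 0.0), (10.0, 10.0), (0.1, 0.0), (10.0, 10.1), (0.0, 0.2), (9.9, 10.0)]
    perm, sizes = semantic_permutation(toks, k=2)
    print(perm, sizes)  # each group's indices end up next to each other
```

After attention is computed in the permuted order, the output can be mapped back with the inverse permutation (`inv[perm[j]] = j`), so the reordering does not change the result, only the memory layout.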

Why does it matter?

This matters because it makes AI video generation much more efficient, saving time and energy, and could bring high-quality video generation to more people and to applications such as film, education, and entertainment.

Abstract

SVG2 is a training-free framework that enhances video generation efficiency and quality by accurately identifying and processing critical tokens using semantic-aware permutation and dynamic budget control.
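Dynamic budget control can be illustrated with a cumulative-mass (top-p) rule. The sketch below assumes each key cluster is summarized by its centroid and size, estimates how much attention a query would give each cluster, and keeps the smallest set of clusters whose estimated mass reaches `p`. The function names, the centroid approximation, and the top-p rule are illustrative assumptions, not the authors' code.

```python
import math
from typing import List, Sequence


def estimate_cluster_mass(query: Sequence[float],
                          key_centroids: List[Sequence[float]],
                          key_sizes: List[int]) -> List[float]:
    """Approximate share of softmax attention a query gives each key cluster,
    treating every key in a cluster as if it equaled the cluster centroid."""
    scale = 1.0 / math.sqrt(len(query))  # standard 1/sqrt(d) attention scaling
    logits = [scale * sum(q * k for q, k in zip(query, c)) for c in key_centroids]
    m = max(logits)  # subtract the max for numerical stability
    # A cluster of n identical keys contributes n * exp(logit) to the softmax sum.
    weights = [n * math.exp(l - m) for l, n in zip(logits, key_sizes)]
    total = sum(weights)
    return [w / total for w in weights]


def top_p_clusters(mass: List[float], p: float) -> List[int]:
    """Smallest set of clusters (highest mass first) whose mass sums to >= p."""
    order = sorted(range(len(mass)), key=lambda i: mass[i], reverse=True)
    chosen, acc = [], 0.0
    for i in order:
        chosen.append(i)
        acc += mass[i]
        if acc >= p:
            break
    return sorted(chosen)


if __name__ == "__main__":
    mass = estimate_cluster_mass([1.0, 0.0],
                                 [[4.0, 0.0], [0.0, 4.0], [-4.0, 0.0]],
                                 [2, 2, 2])
    print(top_p_clusters(mass, 0.9))   # a sharply peaked query keeps few clusters
    print(top_p_clusters(mass, 0.99))  # a stricter budget keeps more
```

The appeal of a cumulative-mass rule over a fixed count is that the budget adapts: a query whose attention is concentrated needs only a few clusters to reach `p`, while a diffuse one automatically gets more.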