Speechless: Speech Instruction Training Without Speech for Low Resource Languages

Alan Dao, Dinh Bach Vu, Huy Hoang Ha, Tuan Le Duc Anh, Shreyas Gopal, Yue Heng Yeo, Warren Keng Hoong Low, Eng Siong Chng, Jia Qi Yip

2025-05-26

Summary

This paper talks about a new way to train language models to understand spoken instructions in languages that don't have a lot of speech data or fancy text-to-speech systems.

What's the problem?

The problem is that many languages around the world don't have enough recorded speech or the technology needed to teach AI how to understand spoken instructions, which leaves speakers of those languages behind in voice technology.

What's the solution?

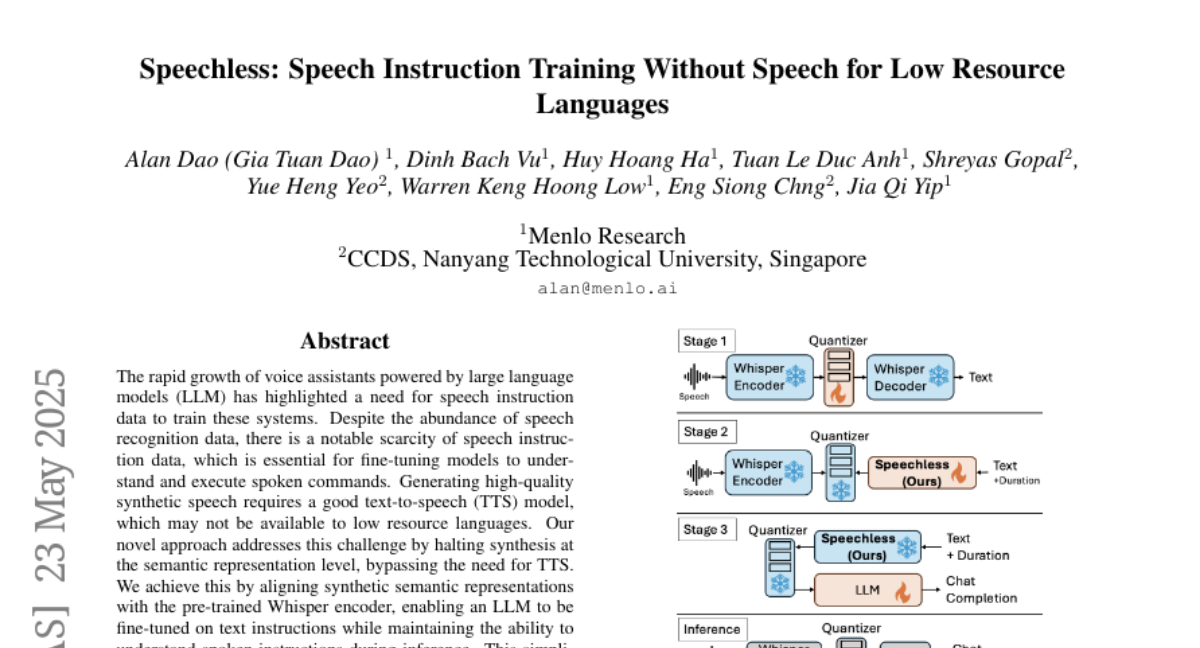

The researchers found a way to skip the need for text-to-speech models by matching the meaning of words and sentences directly with a special speech encoder called Whisper. This lets the language models learn to understand both written and spoken instructions, even for languages with very little audio data.

Why it matters?

This is important because it helps bring voice technology to many more people, making it possible for speakers of less common languages to use AI tools that understand their spoken instructions, which supports language diversity and digital inclusion.

Abstract

A method bypasses the need for TTS models by aligning semantic representations with a Whisper encoder, enabling LLMs to understand both text and spoken instructions for low-resource languages.