Steering Conceptual Bias via Transformer Latent-Subspace Activation

Vansh Sharma, Venkat Raman

2025-06-24

Summary

This paper presents a method for guiding large language models to generate scientific code in a specific programming language by steering selected latent subspaces of the model's internal activations.

What's the problem?

Language models often favor some programming languages over others when generating code, and it is hard to reliably control which language they produce.

What's the solution?

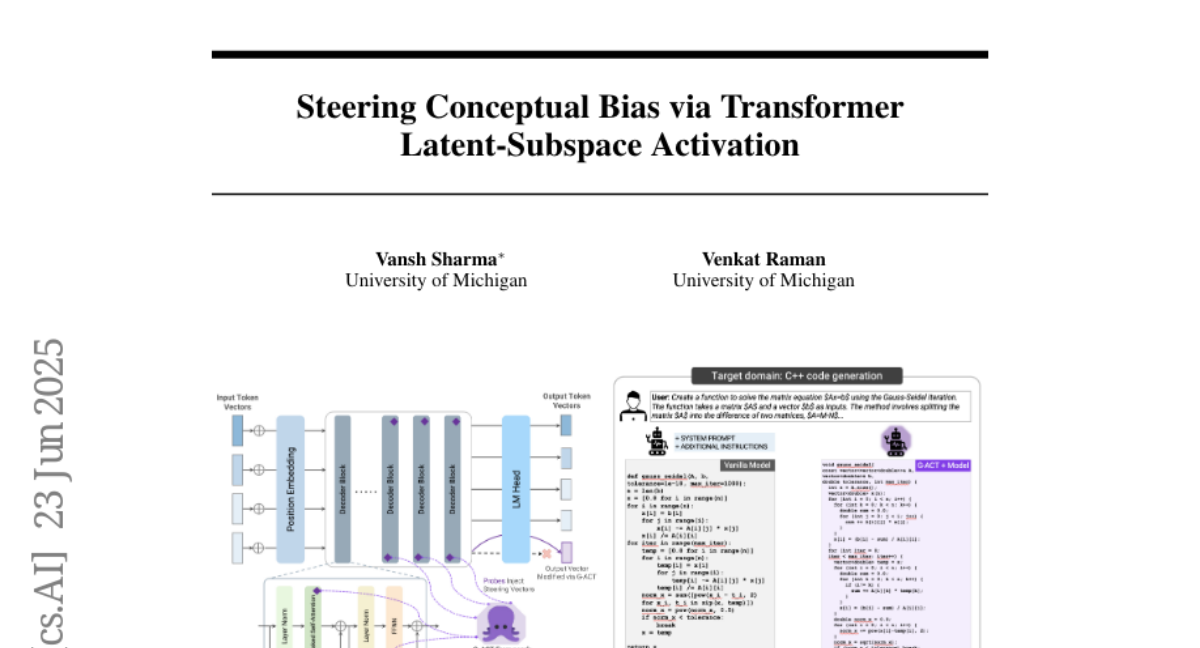

The researchers created a framework that adaptively adjusts which latent subspaces inside the model are activated during generation. This steering method uses gradient refinement to better control the output language, improving how accurately the model sticks to a chosen programming language.
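To make the idea of gradient-refined activation steering more concrete, here is a minimal toy sketch in pure Python. It is not the authors' implementation: the linear "language probe" `W`, the hidden state `h`, the steering strength `alpha`, and the learning rate are all hypothetical stand-ins chosen for illustration. The sketch adds a steering vector to a hidden state and refines that vector by gradient ascent so that the probe assigns more probability to the target language.

```python
import math

def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def probe_probs(W, h):
    """Toy linear probe (assumption): language probabilities from a hidden state."""
    logits = [sum(w * x for w, x in zip(row, h)) for row in W]
    return softmax(logits)

def steer(h, v, alpha):
    """Shift the hidden state along the steering vector v with strength alpha."""
    return [x + alpha * d for x, d in zip(h, v)]

def refine_steering_vector(W, h, target, alpha=1.0, lr=0.5, steps=50):
    """Gradient ascent on log p(target language | steered hidden state)."""
    dim = len(h)
    v = [0.0] * dim
    for _ in range(steps):
        p = probe_probs(W, steer(h, v, alpha))
        # d/dv log p[target] = alpha * (W[target] - sum_k p[k] * W[k])
        grad = [
            alpha * (W[target][j] - sum(p[k] * W[k][j] for k in range(len(W))))
            for j in range(dim)
        ]
        v = [vj + lr * gj for vj, gj in zip(v, grad)]
    return v

# Hypothetical setup: two languages, three hidden dimensions.
# Row 0 scores "language 0", row 1 scores "language 1".
W = [[1.0, 0.0, 0.0],
     [0.0, 1.0, 0.0]]
h = [2.0, 0.0, 0.5]  # hidden state initially biased toward language 0

v = refine_steering_vector(W, h, target=1)
before = probe_probs(W, h)
after = probe_probs(W, steer(h, v, alpha=1.0))
print("p(language 1) before steering:", round(before[1], 3))
print("p(language 1) after steering: ", round(after[1], 3))
```

In this toy setting the steering vector only changes the dimensions the probe actually reads, which mirrors the idea of steering a selected subspace rather than the whole activation. In a real transformer, the hidden state would come from a chosen layer and the shift would be applied during generation, for example through a forward hook.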

Why does it matter?

This matters because it makes AI models more useful and reliable when generating code, especially for scientific and technical tasks where specific programming languages are needed, helping developers save time and reduce errors.

Abstract

A gradient-refined adaptive activation steering framework enables LLMs to reliably generate scientific code in a specific programming language by selectively steering latent subspaces.