Steering Large Language Models for Machine Translation Personalization

Daniel Scalena, Gabriele Sarti, Arianna Bisazza, Elisabetta Fersini, Malvina Nissim

2025-05-23

Summary

This paper talks about new ways to make large language models better at translating text in a way that matches a person's unique style or needs, even when there isn't much data available for that language or person.

What's the problem?

The problem is that machine translation usually gives everyone the same kind of translation, which doesn't work well for people who want translations to fit their own style or special requirements, especially when there isn't a lot of data to train on.

What's the solution?

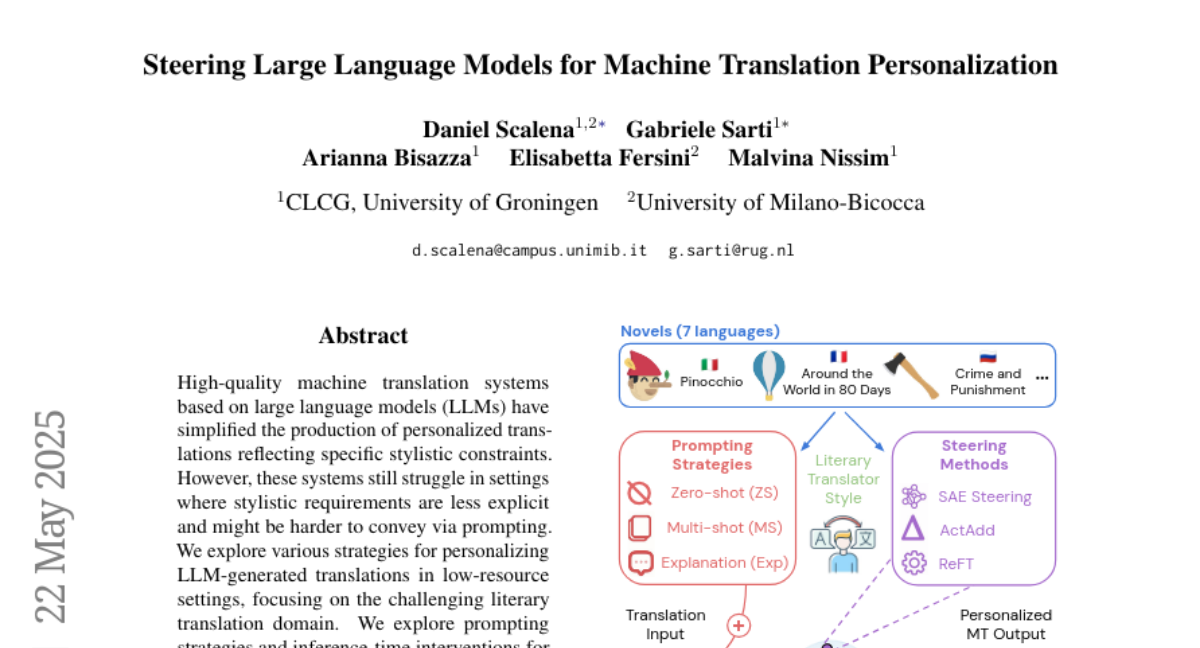

The researchers used special techniques like prompting and a contrastive framework that takes hidden ideas from sparse autoencoders. These methods help the language model learn how to personalize translations for different users while still keeping the translations accurate and high-quality.

Why it matters?

This is important because it means people can get translations that feel more natural and personal, even for less common languages or when there's not much information to work with, making translation tools more useful for everyone.

Abstract

Strategies including prompting and contrastive frameworks using latent concepts from sparse autoencoders effectively personalize LLM translations in low-resource settings while maintaining quality.