STR-Match: Matching SpatioTemporal Relevance Score for Training-Free Video Editing

Junsung Lee, Junoh Kang, Bohyung Han

2025-07-03

Summary

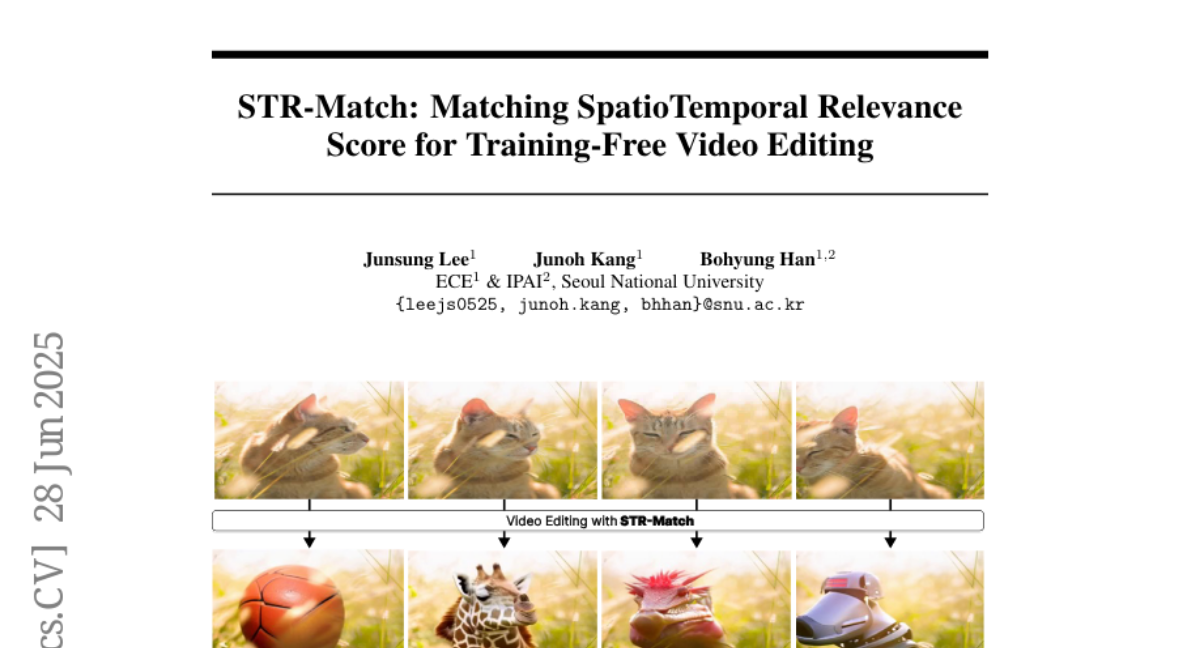

This paper introduces STR-Match, a training-free video editing technique that keeps edits smooth over time and visually consistent. It relies on a spatiotemporal relevance (STR) score that measures how relevant each pixel is across frames, using both the spatial and the temporal attention of text-to-video (T2V) diffusion models.

What's the problem?

Many current video editing methods produce flickering, motion distortions, and mismatched textures between foreground and background. They also struggle to keep changes consistent throughout the video, so the edited videos look unnatural.

What's the solution?

The researchers created STR-Match, which computes a spatiotemporal relevance (STR) score by combining the 2D spatial and 1D temporal attention maps of a text-to-video diffusion model. This score guides latent optimization during generation, so attributes such as motion, shape, and background stay consistent while the edit itself remains flexible.
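To make the idea of combining the two kinds of attention more concrete, here is a minimal toy sketch in NumPy. It is not the paper's formula: the composition rule (one spatial hop inside a frame, followed by one temporal hop at the reached pixel), the function names `toy_str_score` and `matching_loss`, the tensor shapes, and the random stand-in attention maps are all assumptions made for illustration. In a real setting the attention maps would come from the diffusion model, and the loss would be minimized with respect to the latent.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def toy_str_score(spatial, temporal):
    """Hypothetical spatiotemporal relevance score (illustrative only).

    spatial:  (F, N, N), spatial[f, i, j] is the attention from pixel i
              to pixel j inside frame f.
    temporal: (N, F, F), temporal[j, f, g] is the attention from frame f
              to frame g at pixel location j.
    returns:  (F, N, F, N), score[f, i, g, j] is the assumed relevance of
              pixel j in frame g to pixel i in frame f.
    """
    # Assumed composition: move i -> j within frame f, then f -> g at pixel j.
    return np.einsum("fij,jfg->figj", spatial, temporal)


def matching_loss(score_src, score_tgt):
    """Mean squared difference between source and target scores."""
    return float(np.mean((score_src - score_tgt) ** 2))


# Random stand-ins for attention maps (F frames, N pixels per frame).
rng = np.random.default_rng(0)
F, N = 4, 6
spatial_src = softmax(rng.normal(size=(F, N, N)))
temporal_src = softmax(rng.normal(size=(N, F, F)))
spatial_tgt = softmax(rng.normal(size=(F, N, N)))

score_src = toy_str_score(spatial_src, temporal_src)
score_tgt = toy_str_score(spatial_tgt, temporal_src)

loss_same = matching_loss(score_src, score_src)  # identical scores
loss_diff = matching_loss(score_src, score_tgt)  # different spatial attention
```

Because both inputs are row-normalized, each pixel's toy score sums to one over all frames and pixels, so it can be read as a distribution of relevance across the whole clip. The design point this illustrates is that a relevance tensor over frame and pixel pairs can be assembled from separate 2D and 1D attention maps alone.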

Why does it matter?

STR-Match allows high-quality, realistic video edits without training new models, which saves time and resources. It can be applied to a variety of video editing tasks, making video creation easier and more efficient.

Abstract

STR-Match, a training-free video editing algorithm, enhances spatiotemporal coherence in videos using latent optimization guided by a novel STR score, which leverages 2D spatial and 1D temporal attention in T2V diffusion models.