Streamline Without Sacrifice - Squeeze out Computation Redundancy in LMM

Penghao Wu, Lewei Lu, Ziwei Liu

2025-05-22

Summary

This paper introduces ProxyV, a technique that lets large AI models that handle both images and text run faster and use less computing power without degrading their results.

What's the problem?

Large multimodal models, which can understand both pictures and words, usually need a lot of computing resources and time to process their inputs, which makes them slow and expensive to use.

What's the solution?

The researchers introduce proxy vision tokens, which act as a shortcut that lets the model skip unnecessary calculations, so it reaches the same results much more efficiently.
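To give a rough sense of why fewer tokens can mean less computation, here is a minimal sketch. It assumes only the general fact that full self-attention compares every token with every other token, so its cost grows with the square of the sequence length. The token counts, the compression factor, and the function name are hypothetical values chosen for illustration. They are not taken from the paper, and the sketch does not reproduce how ProxyV actually builds or uses its proxy tokens.

```python
def attention_pair_count(num_tokens: int) -> int:
    """Number of query-key pairs in full self-attention over num_tokens tokens."""
    return num_tokens * num_tokens


# Hypothetical sizes, for illustration only (not from the paper).
num_vision_tokens = 576
num_text_tokens = 64
compression = 4  # assumption: one proxy token stands in for 4 vision tokens

num_proxy_tokens = num_vision_tokens // compression

# Cost when every vision token takes part in attention.
full_cost = attention_pair_count(num_vision_tokens + num_text_tokens)

# Cost when a smaller set of proxy tokens takes part instead.
proxy_cost = attention_pair_count(num_proxy_tokens + num_text_tokens)

print(f"full: {full_cost} pairs, proxy: {proxy_cost} pairs")
print(f"reduction: {full_cost / proxy_cost:.1f}x")
```

Under these made-up numbers, most of the attention work comes from the vision tokens, which is why reducing how many of them go through the expensive operations can save so much.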

Why does it matter?

Lower computing costs mean powerful AI models can be used in more places, such as regular computers or phones, which makes advanced technology more accessible and practical for everyone.

Abstract

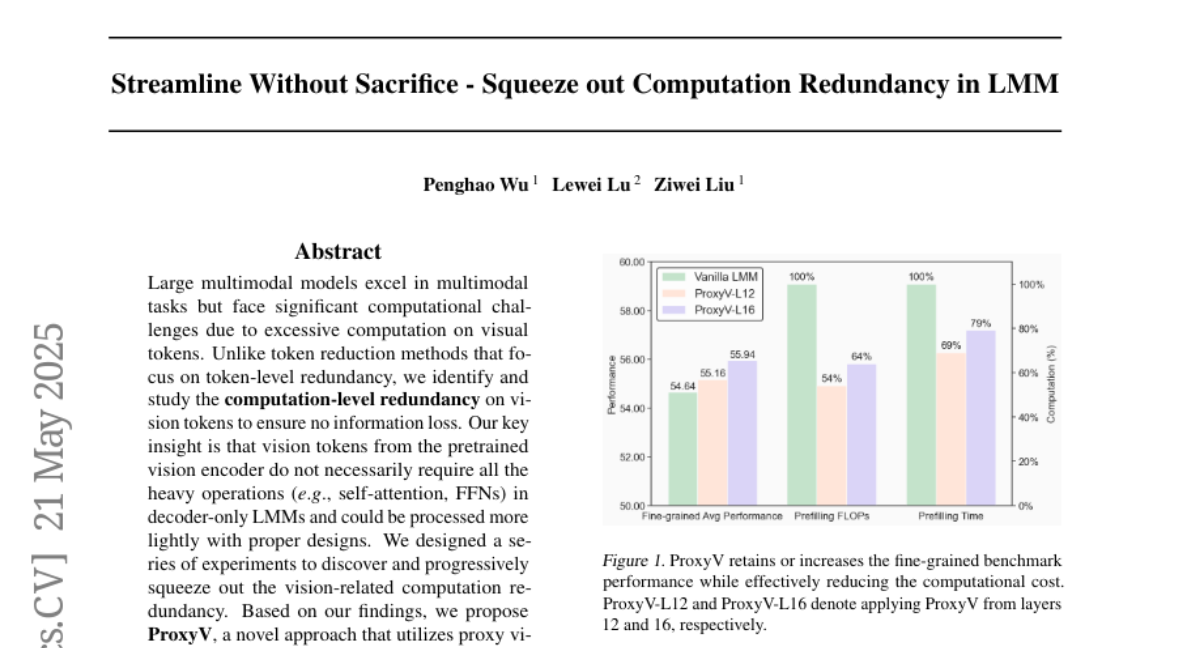

ProxyV alleviates computational burdens in large multimodal models by using proxy vision tokens, enhancing efficiency without sacrificing performance.