StructEval: Deepen and Broaden Large Language Model Assessment via Structured Evaluation

Boxi Cao, Mengjie Ren, Hongyu Lin, Xianpei Han, Feng Zhang, Junfeng Zhan, Le Sun

2024-08-07

Summary

This paper discusses a new method called SENSE that combines data from both strong and weak large language models (LLMs) to improve the ability to convert natural language into SQL queries.

What's the problem?

There is a significant performance gap between powerful closed-source LLMs, like GPT-4, and open-source models when it comes to converting everyday language into SQL, which is a structured query language used for managing databases. This gap makes it difficult for users who rely on open-source tools to achieve the same level of effectiveness as those using more advanced proprietary models.

What's the solution?

The authors propose a synthetic data approach that merges high-quality data generated by strong models with error data from weaker models. This combination helps the model learn better and generalize across different types of queries. They developed SENSE, a specialized text-to-SQL model that uses this synthetic data for training. The results show that SENSE performs exceptionally well on established benchmarks like SPIDER and BIRD, significantly narrowing the performance gap between open-source and closed-source models.

Why it matters?

This research is important because it enhances the capabilities of open-source LLMs, making them more competitive with powerful closed-source alternatives. By improving text-to-SQL conversion, this work allows more people to interact with databases using natural language, democratizing access to data analysis and enabling non-experts to utilize these tools effectively.

Abstract

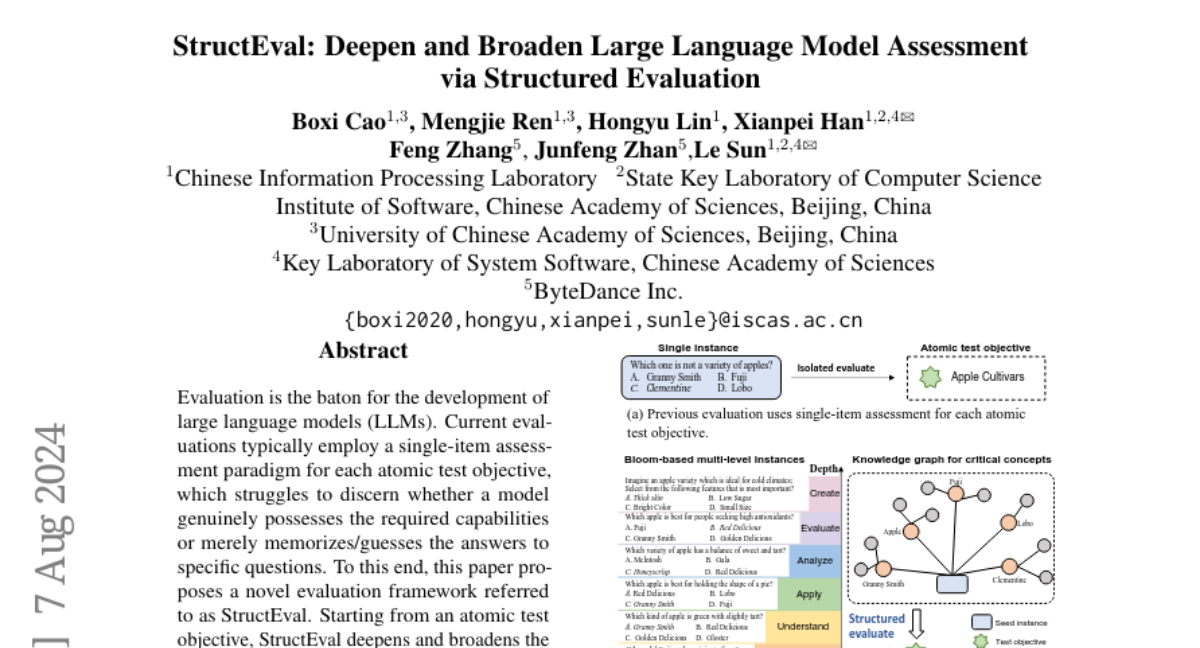

Evaluation is the baton for the development of large language models. Current evaluations typically employ a single-item assessment paradigm for each atomic test objective, which struggles to discern whether a model genuinely possesses the required capabilities or merely memorizes/guesses the answers to specific questions. To this end, we propose a novel evaluation framework referred to as StructEval. Starting from an atomic test objective, StructEval deepens and broadens the evaluation by conducting a structured assessment across multiple cognitive levels and critical concepts, and therefore offers a comprehensive, robust and consistent evaluation for LLMs. Experiments on three widely-used benchmarks demonstrate that StructEval serves as a reliable tool for resisting the risk of data contamination and reducing the interference of potential biases, thereby providing more reliable and consistent conclusions regarding model capabilities. Our framework also sheds light on the design of future principled and trustworthy LLM evaluation protocols.