Style Customization of Text-to-Vector Generation with Image Diffusion Priors

Peiying Zhang, Nanxuan Zhao, Jing Liao

2025-05-16

Summary

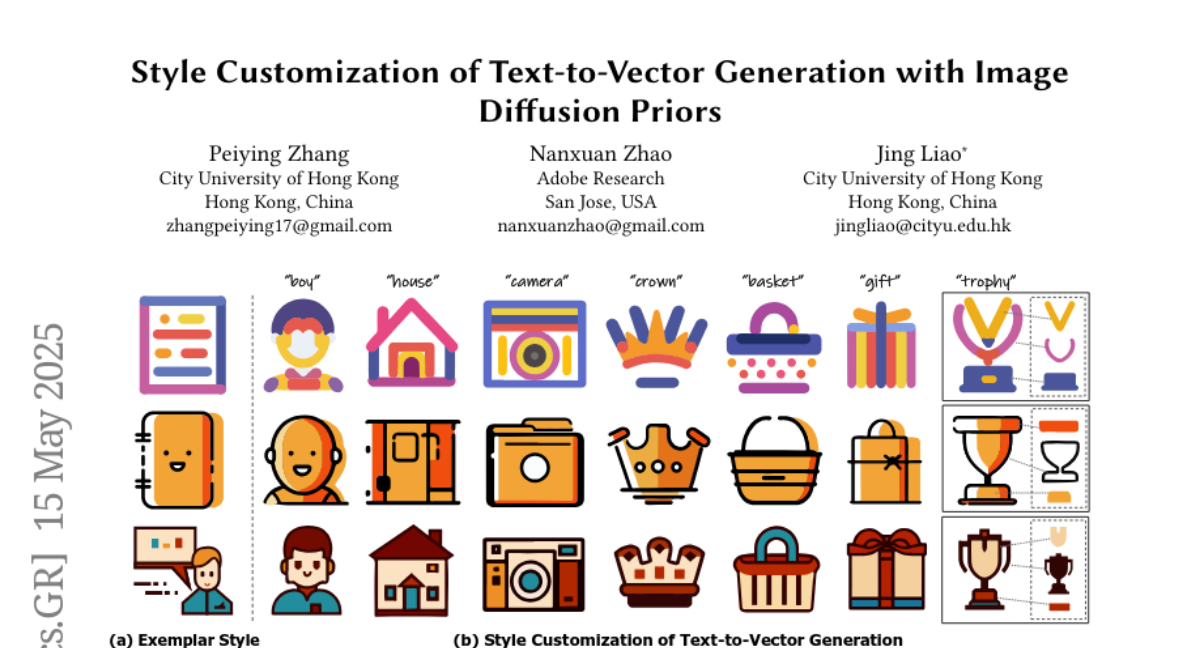

This paper presents a new method for generating vector images, such as SVGs, from text descriptions, while letting users choose and customize the style of the output.

What's the problem?

The problem is that while AI can turn text into images, it's much harder to create high-quality vector graphics that match a specific style, and most current methods don't give users much control over how the final images look.

What's the solution?

The researchers designed a two-stage pipeline. In the first stage, a text-to-vector (T2V) diffusion model learns to generate SVGs using a path-level representation. In the second stage, that model is adapted to the style a user wants by distilling knowledge from text-to-image (T2I) models that have been customized to that style. Combining T2V and T2I models in this way produces high-quality, diverse vector graphics in the chosen style.

Why does it matter?

This matters because it makes it a lot easier for people to create unique, high-quality vector images for things like logos, digital art, or web design, just by describing what they want and picking a style, saving time and opening up creative possibilities.

Abstract

A two-stage pipeline using a T2V diffusion model with path-level representation and distillation of customized T2I models achieves high-quality and diverse SVG generation with style customization.
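To make "path-level representation" concrete, here is a minimal sketch of how an SVG can be treated as a list of paths, each a closed chain of cubic Bézier segments with a fill color. This is only an illustration: the summary does not specify the paper's actual encoding, and the names `Path`, `to_d`, and `to_svg` are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float]
# One cubic Bezier segment: (control point 1, control point 2, end point).
Segment = Tuple[Point, Point, Point]


@dataclass
class Path:
    """Hypothetical container for one closed, filled SVG path."""
    start: Point
    segments: List[Segment]
    fill: str = "#000000"

    def to_d(self) -> str:
        """Serialize to the SVG path `d` attribute (M, C, and Z commands)."""
        parts = [f"M {self.start[0]:g} {self.start[1]:g}"]
        for c1, c2, end in self.segments:
            parts.append(
                f"C {c1[0]:g} {c1[1]:g}, {c2[0]:g} {c2[1]:g}, {end[0]:g} {end[1]:g}"
            )
        parts.append("Z")  # close the path so it can be filled
        return " ".join(parts)


def to_svg(paths: List[Path], size: int = 128) -> str:
    """Assemble a list of paths into a complete SVG document string."""
    body = "\n".join(f'  <path d="{p.to_d()}" fill="{p.fill}"/>' for p in paths)
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" viewBox="0 0 {size} {size}">\n'
        f"{body}\n</svg>"
    )


# Two simple shapes; a generative model would predict these coordinates and colors.
paths = [
    Path(start=(10, 10), segments=[((20, 0), (40, 0), (50, 10))], fill="#ff0000"),
    Path(start=(0, 64), segments=[((32, 32), (96, 96), (128, 64))], fill="#0000ff"),
]
svg = to_svg(paths)
```

Because each path is an independent element, a model that works at this level outputs shapes that remain individually editable, which is the practical appeal of vector formats for logos and web graphics.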