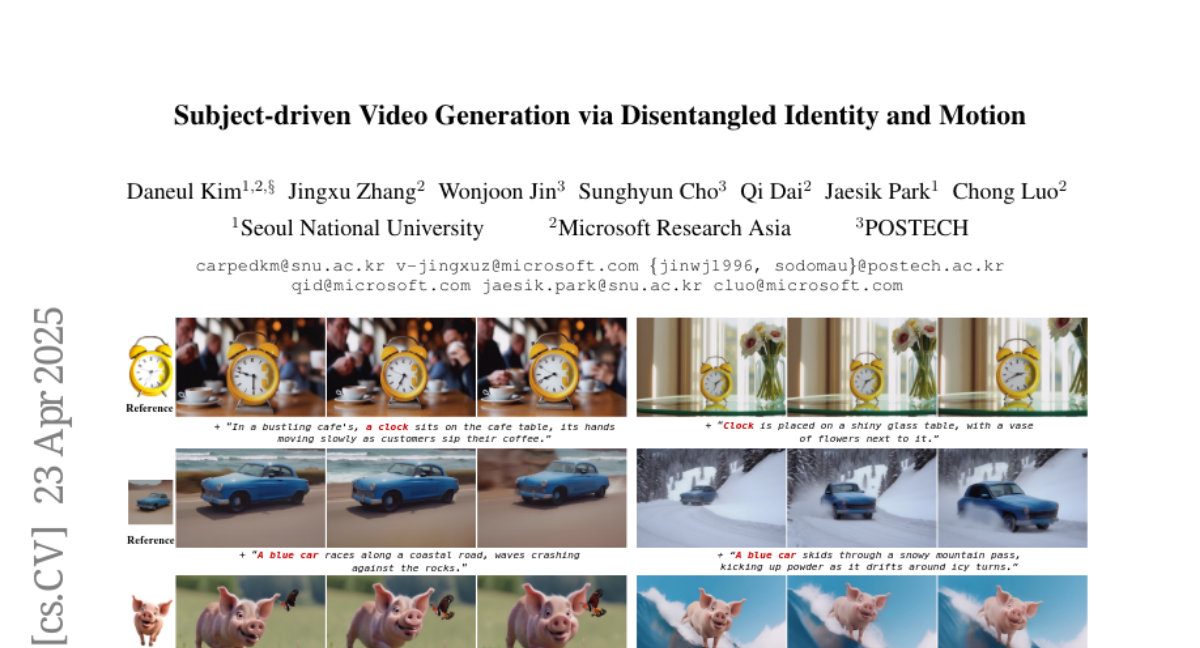

Subject-driven Video Generation via Disentangled Identity and Motion

Daneul Kim, Jingxu Zhang, Wonjoon Jin, Sunghyun Cho, Qi Dai, Jaesik Park, Chong Luo

2025-04-28

Summary

This paper presents a way to generate custom videos in which you control both who appears and how they move, even if the model has never seen that exact subject or motion before.

What's the problem?

Most video generation methods struggle to produce videos that both look like a specific subject and show that subject moving naturally, especially when the model has not been trained on many videos of that subject.

What's the solution?

The researchers separate, or 'disentangle,' the identity of the subject (such as its face or style) from how it moves in a video. They train on image customization datasets to teach the model subject identity, and they add randomization techniques to help it handle new motions. As a result, the model can mix and match identities and movements in a zero-shot setting, without a lot of new training.

Why does it matter?

This matters because it lets people create personalized videos for uses such as entertainment, advertising, or education while saving time and resources, since the model does not need many examples of a subject to work.

Abstract

A method for zero-shot video customization achieves strong performance by decoupling subject-specific learning from temporal dynamics using image customization datasets and randomization techniques.