SynthDetoxM: Modern LLMs are Few-Shot Parallel Detoxification Data Annotators

Daniil Moskovskiy, Nikita Sushko, Sergey Pletenev, Elena Tutubalina, Alexander Panchenko

2025-02-11

Summary

This paper talks about SynthDetoxM, a new method and dataset for improving how AI systems clean up toxic language across multiple languages. The researchers used AI models to create a large collection of 'detoxified' sentences in German, French, Spanish, and Russian.

What's the problem?

There aren't enough datasets that show how to change toxic text into non-toxic versions across different languages. This makes it hard to train AI models to clean up offensive language in multiple languages effectively.

What's the solution?

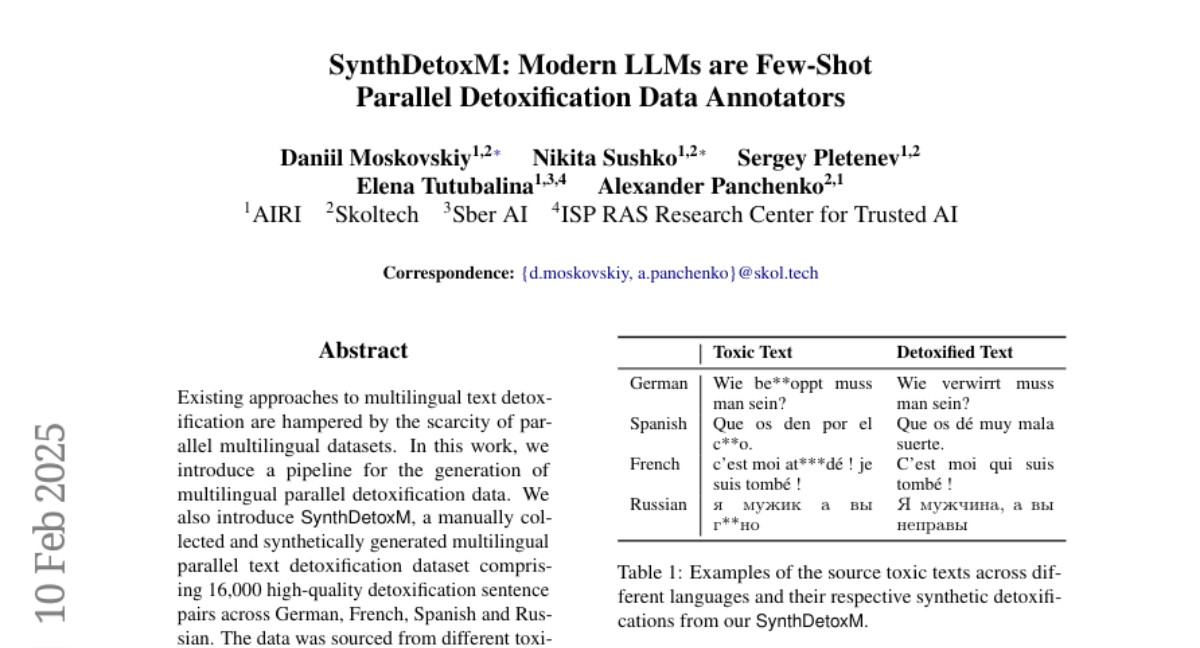

The researchers created SynthDetoxM, a dataset of 16,000 pairs of toxic and non-toxic sentences in four languages. They used existing toxic language datasets and then had nine different AI models rewrite the toxic sentences to make them non-toxic. This approach allowed them to create a large, high-quality dataset much faster than if humans had done all the work.

Why it matters?

This matters because it helps make the internet and online communication safer and more respectful for everyone, regardless of what language they speak. The SynthDetoxM dataset and method can help create better AI systems that can automatically detect and clean up toxic language in multiple languages, which is important for social media platforms, online forums, and other places where people from different cultures interact.

Abstract

Existing approaches to multilingual text detoxification are hampered by the scarcity of parallel multilingual datasets. In this work, we introduce a pipeline for the generation of multilingual parallel detoxification data. We also introduce SynthDetoxM, a manually collected and synthetically generated multilingual parallel text detoxification dataset comprising 16,000 high-quality detoxification sentence pairs across German, French, Spanish and Russian. The data was sourced from different toxicity evaluation datasets and then rewritten with nine modern open-source LLMs in few-shot setting. Our experiments demonstrate that models trained on the produced synthetic datasets have superior performance to those trained on the human-annotated MultiParaDetox dataset even in data limited setting. Models trained on SynthDetoxM outperform all evaluated LLMs in few-shot setting. We release our dataset and code to help further research in multilingual text detoxification.