Synthetic Video Enhances Physical Fidelity in Video Synthesis

Qi Zhao, Xingyu Ni, Ziyu Wang, Feng Cheng, Ziyan Yang, Lu Jiang, Bohan Wang

2025-03-28

Summary

This paper explores how to make AI-generated videos more physically realistic by training on computer-rendered videos that follow real-world physics.

What's the problem?

AI-generated videos often look fake because they don't always follow the laws of physics: objects may be inconsistent in 3D or move unnaturally.

What's the solution?

The researchers curated computer-generated videos that obey the laws of physics, added them to the AI's training data, and introduced a method to transfer their physical realism to the model. This significantly reduced unwanted artifacts in the generated videos.

Why does it matter?

This work matters because it's a step towards creating AI that can generate videos that are not only visually appealing but also physically believable, which could be useful for things like special effects and simulations.

Abstract

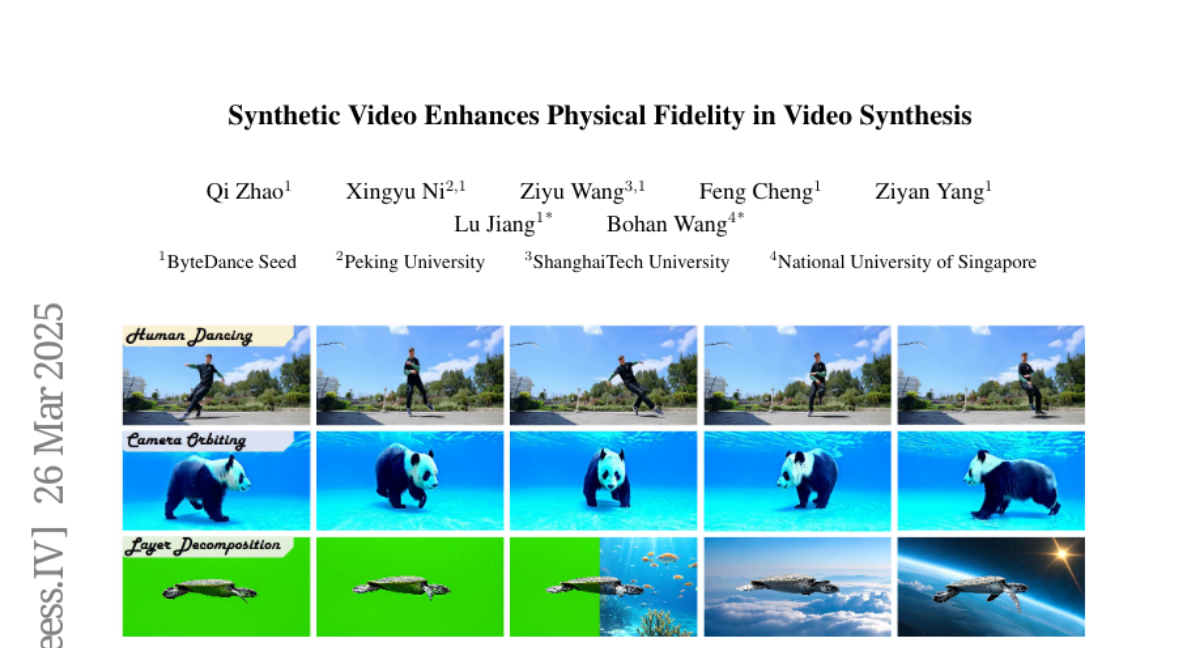

We investigate how to enhance the physical fidelity of video generation models by leveraging synthetic videos derived from computer graphics pipelines. These rendered videos respect real-world physics, such as maintaining 3D consistency, and serve as a valuable resource that can potentially improve video generation models. To harness this potential, we propose a solution that curates and integrates synthetic data while introducing a method to transfer its physical realism to the model, significantly reducing unwanted artifacts. Through experiments on three representative tasks emphasizing physical consistency, we demonstrate its efficacy in enhancing physical fidelity. While our model still lacks a deep understanding of physics, our work offers one of the first empirical demonstrations that synthetic video enhances physical fidelity in video synthesis. Website: https://kevinz8866.github.io/simulation/