SynWorld: Virtual Scenario Synthesis for Agentic Action Knowledge Refinement

Runnan Fang, Xiaobin Wang, Yuan Liang, Shuofei Qiao, Jialong Wu, Zekun Xi, Ningyu Zhang, Yong Jiang, Pengjun Xie, Fei Huang, Huajun Chen

2025-04-07

Summary

This paper introduces SynWorld, a framework that helps LLM-based AI agents learn how to act in unfamiliar environments by practicing in synthesized scenarios, much like a training ground in a video game.

What's the problem?

LLM-based agents struggle when they are deployed in new environments or have to work with unconventional sets of actions, because they do not yet understand what those actions do or how to chain them into a workflow.

What's the solution?

SynWorld synthesizes virtual scenarios that require multi-step action calls, then uses Monte Carlo Tree Search (MCTS) to explore them and refine the agent's knowledge of its actions, like a chess player planning moves ahead.

Why does it matter?

This could let AI agents learn more quickly and safely before they take on real-world jobs such as warehouse robots or customer service bots, reducing costly mistakes and improving problem-solving skills.

Abstract

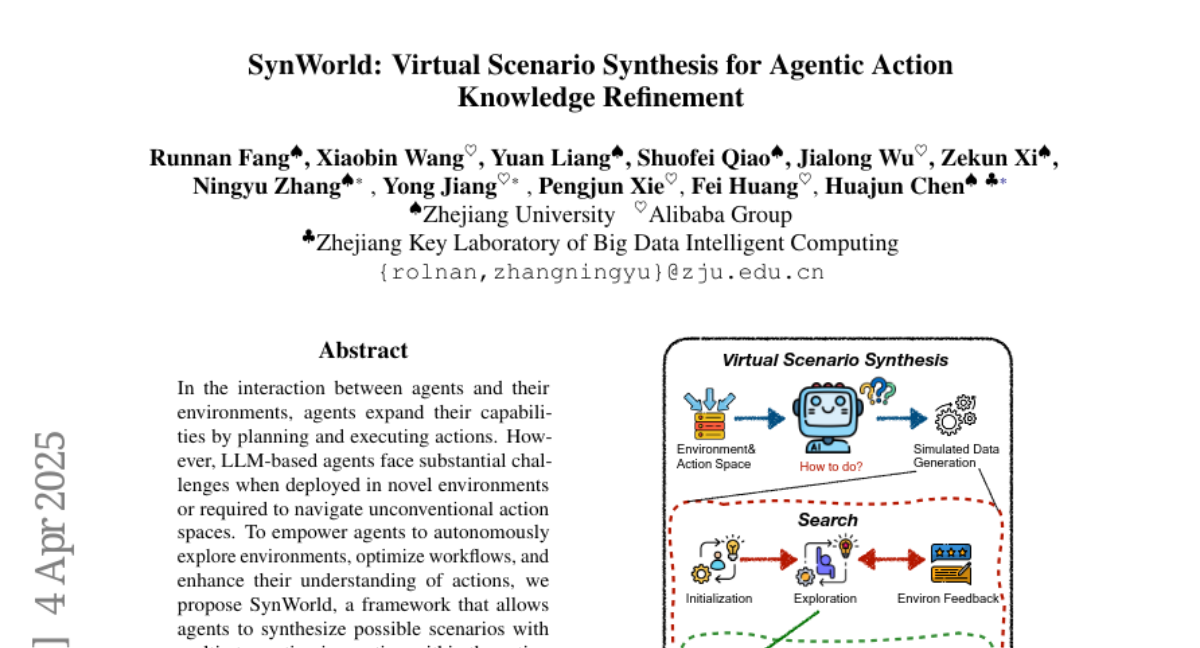

In the interaction between agents and their environments, agents expand their capabilities by planning and executing actions. However, LLM-based agents face substantial challenges when deployed in novel environments or required to navigate unconventional action spaces. To empower agents to autonomously explore environments, optimize workflows, and enhance their understanding of actions, we propose SynWorld, a framework that allows agents to synthesize possible scenarios with multi-step action invocation within the action space and perform Monte Carlo Tree Search (MCTS) exploration to effectively refine their action knowledge in the current environment. Our experiments demonstrate that SynWorld is an effective and general approach to learning action knowledge in new environments. Code is available at https://github.com/zjunlp/SynWorld.
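The abstract names MCTS as the exploration method but gives no procedure, so the following is only a minimal sketch of how a tree search over candidate "action knowledge" could look. Everything in it is an assumption made for illustration: action knowledge is modeled as a set of short tips, a synthesized scenario is modeled as the set of tips needed to solve it, and the names `Node`, `score`, `expand`, and `mcts_refine` are hypothetical and not taken from the SynWorld code base.

```python
import math
import random
from dataclasses import dataclass, field


@dataclass
class Node:
    """One candidate draft of action knowledge (toy stand-in: a set of tips)."""
    knowledge: frozenset
    parent: "Node | None" = None
    children: list = field(default_factory=list)
    visits: int = 0
    total_reward: float = 0.0


def ucb1(child, parent_visits, c=1.4):
    """Standard UCB1 score; unvisited children are tried first."""
    if child.visits == 0:
        return math.inf
    mean = child.total_reward / child.visits
    return mean + c * math.sqrt(math.log(parent_visits) / child.visits)


def score(knowledge, scenarios):
    """Toy reward (assumption): share of scenarios whose required tips are covered."""
    solved = sum(1 for required in scenarios if required <= knowledge)
    return solved / len(scenarios)


def expand(node, candidate_tips):
    """Create one child per tip that the current draft does not contain yet."""
    for tip in candidate_tips:
        if tip not in node.knowledge:
            node.children.append(Node(node.knowledge | {tip}, parent=node))


def mcts_refine(scenarios, candidate_tips, iterations=50, seed=0):
    """Search for the knowledge draft with the highest toy reward."""
    rng = random.Random(seed)
    root = Node(frozenset())
    best, best_score = root.knowledge, score(root.knowledge, scenarios)
    for _ in range(iterations):
        # Selection: follow the highest-UCB1 child down to a leaf.
        node = root
        while node.children:
            node = max(node.children, key=lambda ch: ucb1(ch, node.visits))
        # Expansion: grow the tree below the root or below a visited leaf.
        if node.parent is None or node.visits > 0:
            expand(node, candidate_tips)
            if node.children:
                node = rng.choice(node.children)
        # Evaluation: score the draft on the synthesized scenarios.
        reward = score(node.knowledge, scenarios)
        if reward > best_score:
            best, best_score = node.knowledge, reward
        # Backpropagation: update statistics up to the root.
        while node is not None:
            node.visits += 1
            node.total_reward += reward
            node = node.parent
    return best, best_score


if __name__ == "__main__":
    # Hypothetical scenarios: each lists the tips an agent would need.
    scenarios = [frozenset({"a"}), frozenset({"a", "b"}), frozenset({"c"})]
    print(mcts_refine(scenarios, ["a", "b", "c", "d"], iterations=200))
```

In a real system the toy `score` function would presumably be replaced by running the agent on the synthesized scenarios, and `expand` by asking a model to propose revised knowledge from the feedback; neither of those steps is specified in the abstract.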