Syzygy of Thoughts: Improving LLM CoT with the Minimal Free Resolution

Chenghao Li, Chaoning Zhang, Yi Lu, Jiaquan Zhang, Qigan Sun, Xudong Wang, Jiwei Wei, Guoqing Wang, Yang Yang, Heng Tao Shen

2025-04-17

Summary

This paper introduces Syzygy of Thoughts (SoT), a framework that extends chain-of-thought (CoT) reasoning in large language models by adding auxiliary reasoning paths inspired by the Minimal Free Resolution, a concept from algebra.

What's the problem?

Large language models can answer many questions, but on complicated problems they sometimes get stuck or make mistakes because their reasoning is too simple or direct.

What's the solution?

The researchers introduced SoT, which gives the model extra 'side paths' to reason through, based on ideas from algebra. These extra paths help the model check its work and find better solutions, making its problem-solving process more thorough and reliable.

Why does it matter?

This matters because it makes AI models better at solving hard problems in areas such as math and science, where careful reasoning is needed. Rather than just guessing, the model thinks a problem through, more like a human would.

Abstract

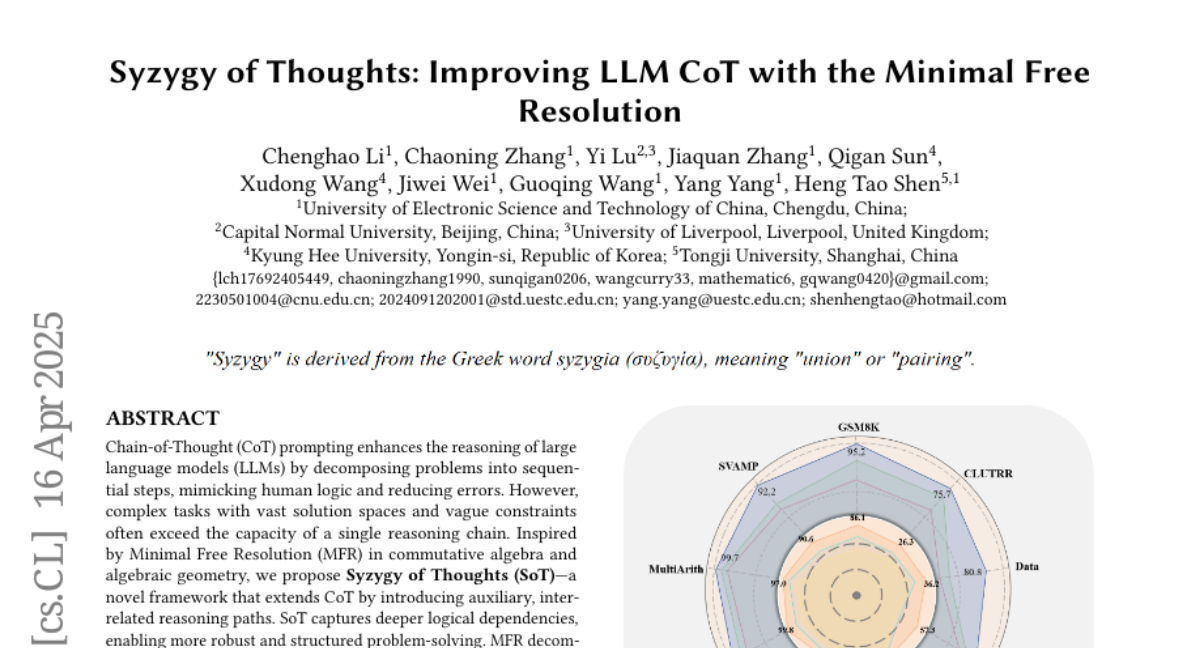

Syzygy of Thoughts (SoT) enhances large language models by introducing auxiliary reasoning paths inspired by Minimal Free Resolution from algebra, resulting in more robust problem-solving and improved performance.
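
For readers unfamiliar with the algebra behind the name, the standard textbook definitions can be stated briefly. This is general background from commutative algebra, not a description of the paper's method; the symbols $S$, $M$, $F_i$, and $d_i$ are notation chosen here for illustration.

```latex
% Assumed setting: S = k[x_1, ..., x_n] is a polynomial ring over a field k,
% \mathfrak{m} = (x_1, ..., x_n), and M is a finitely generated graded S-module.

% A free resolution of M is an exact sequence of free modules F_i:
\[
  \cdots \xrightarrow{\,d_3\,} F_2 \xrightarrow{\,d_2\,} F_1
  \xrightarrow{\,d_1\,} F_0 \xrightarrow{\,d_0\,} M \longrightarrow 0,
  \qquad \operatorname{im} d_{i+1} = \ker d_i .
\]

% The i-th syzygy module records the relations found at step i:
\[
  \operatorname{Syz}_i(M) = \ker d_{i-1} \qquad (i \ge 1).
\]

% The resolution is minimal when no map contains a unit entry:
\[
  d_i(F_i) \subseteq \mathfrak{m}\, F_{i-1} \qquad (i \ge 1).
\]

% Worked example: S = k[x, y], M = k = S/(x, y).
\[
  0 \longrightarrow S
  \xrightarrow{\begin{pmatrix} -y \\ x \end{pmatrix}} S^2
  \xrightarrow{\begin{pmatrix} x & y \end{pmatrix}} S
  \longrightarrow k \longrightarrow 0 .
\]
```

In the example, the first syzygy module is the ideal $(x, y)$, and the second is generated by the single relation $(-y)\cdot x + x \cdot y = 0$. How SoT maps these objects onto reasoning steps is not specified in this summary.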