Table-R1: Inference-Time Scaling for Table Reasoning

Zheyuan Yang, Lyuhao Chen, Arman Cohan, Yilun Zhao

2025-05-30

Summary

This paper presents Table-R1, an approach that helps AI models reason about information in tables, such as spreadsheets, more efficiently while matching the results of some of the most advanced models available.

What's the problem?

Understanding and answering questions about tables usually requires very large AI models, which can be slow and expensive to run. Smaller models often can't match the accuracy of the largest ones, especially on tricky reasoning tasks.

What's the solution?

The researchers used two post-training techniques, distillation (learning from the outputs of a stronger model) and RLVR (reinforcement learning with verifiable rewards), to train their model so it could do as well as much larger models with fewer resources. Their model, Table-R1-Zero, can handle table reasoning tasks quickly and accurately, even on new types of tables it hasn't seen before.

Why does it matter?

This is important because it means more people and companies can use powerful AI for things like analyzing data and making decisions, without needing supercomputers. It also helps make AI technology more accessible and practical for everyday use.

Abstract

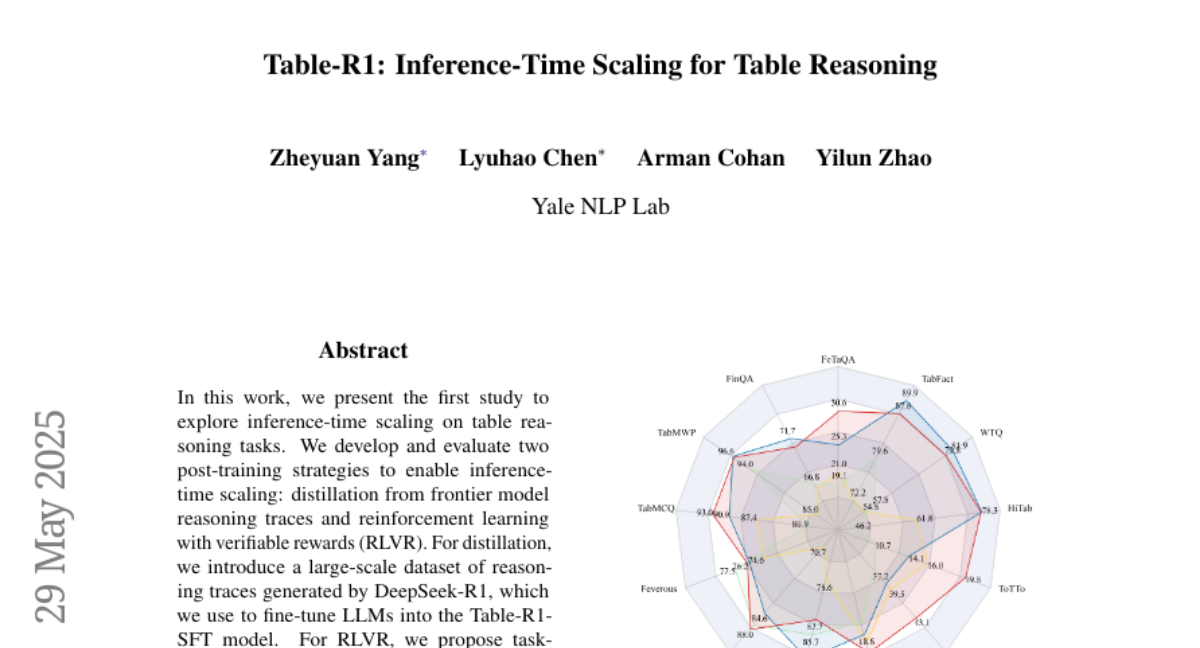

Two post-training strategies, distillation and RLVR, enable inference-time scaling in table reasoning tasks, resulting in a model (Table-R1-Zero) that matches GPT-4.1's performance using fewer parameters and shows strong generalization.
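
To make the "verifiable rewards" part of RLVR concrete, here is a minimal sketch of what such a reward could look like for table question answering. It is an illustration only, not the authors' implementation: the `<answer>` tag format, the function names, and the exact-match rule are all assumptions introduced here.

```python
import re
from typing import Optional


def normalize(text: str) -> str:
    """Lowercase, trim, and collapse whitespace so trivial formatting differences are ignored."""
    return re.sub(r"\s+", " ", text.strip().lower())


def extract_answer(response: str) -> Optional[str]:
    """Return the text inside a hypothetical <answer>...</answer> tag, or None if it is absent."""
    match = re.search(r"<answer>(.*?)</answer>", response, flags=re.DOTALL)
    return match.group(1) if match else None


def verifiable_reward(response: str, gold: str) -> float:
    """Assumed reward rule: 1.0 if the extracted answer matches the gold answer, else 0.0."""
    answer = extract_answer(response)
    if answer is None:
        # No parseable answer: nothing can be checked, so no reward.
        return 0.0
    return 1.0 if normalize(answer) == normalize(gold) else 0.0
```

Because the reward is computed by a fixed rule against a known answer, it can be checked automatically and needs no learned judge. A real setup would likely need task-specific matching (for example numeric tolerance or label sets for fact checking), which this sketch leaves out.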