Taming generative video models for zero-shot optical flow extraction

Seungwoo Kim, Khai Loong Aw, Klemen Kotar, Cristobal Eyzaguirre, Wanhee Lee, Yunong Liu, Jared Watrous, Stefan Stojanov, Juan Carlos Niebles, Jiajun Wu, Daniel L. K. Yamins

2025-07-16

Summary

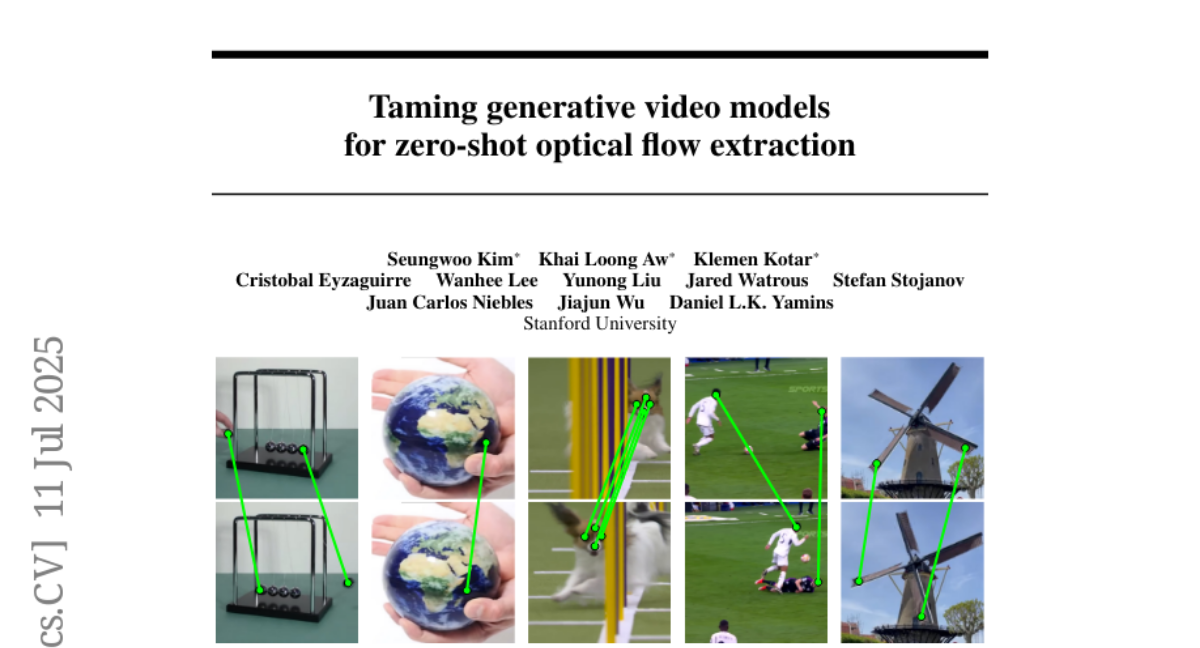

This paper talks about a new way to use generative video models to extract optical flow, which is the movement of objects between frames in a video, without needing to train the model specifically for this task.

What's the problem?

The problem is that detecting optical flow usually requires special models or additional training, which can be slow and complicated. Existing methods don’t always work well on real-world videos.

What's the solution?

The authors use a technique called counterfactual prompting with KL-tracing to guide the generative video model to extract optical flow directly at test time. This means the model can figure out how things are moving between frames just by analyzing the video itself, without extra training. Their method performs better than current state-of-the-art techniques on both real and synthetic video datasets.

Why it matters?

This matters because it makes extracting motion information from videos easier and more accurate without extra work. Optical flow is important for many applications, like video editing, robotics, and autonomous driving, so this method can improve the performance and efficiency of these systems.

Abstract

Counterfactual prompting of generative video models, using KL-tracing, extracts optical flow without fine-tuning, outperforming state-of-the-art methods on real and synthetic datasets.