Target-Aware Video Diffusion Models

Taeksoo Kim, Hanbyul Joo

2025-04-03

Summary

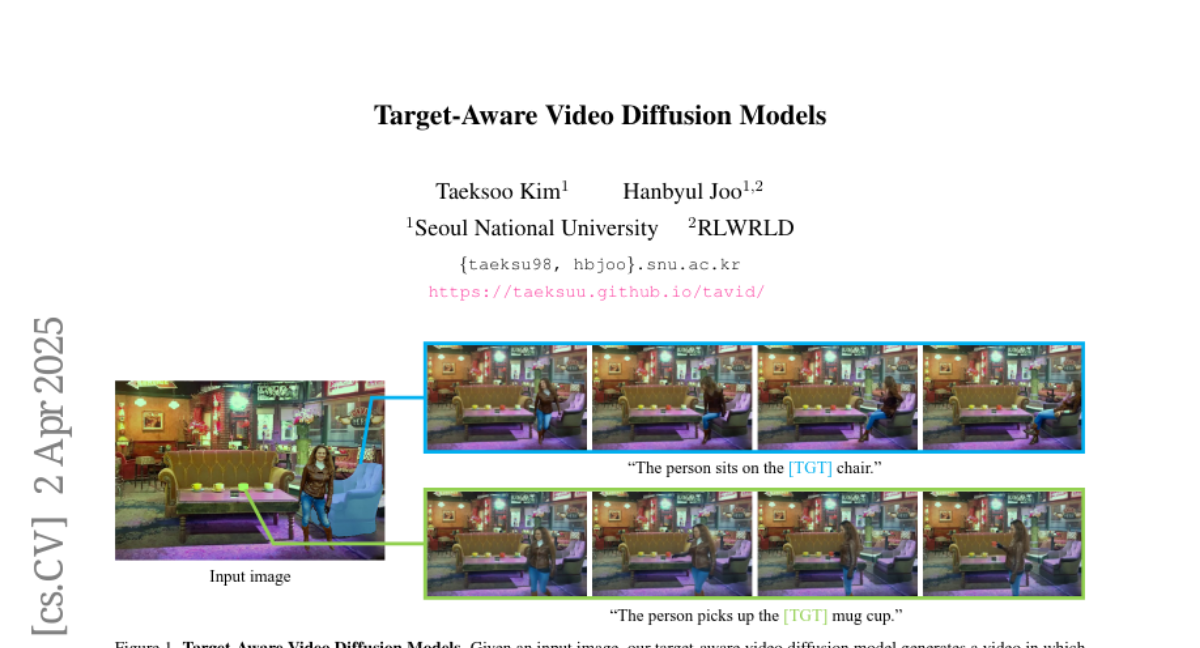

This paper presents an AI model that takes an input image and generates a video of a person interacting with a specific object in it. The object is marked with a simple mask, and the action is described in a short text prompt.

What's the problem?

Existing AI models struggle to create videos where a person interacts with a target object in a realistic and controlled way. They often need detailed instructions on how the person should move.

What's the solution?

The researchers created a new AI model that only needs a simple mask to identify the target object and a text prompt to describe the action. This allows the model to generate videos where the person interacts with the object in a natural and plausible way.

Why does it matter?

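This work matters because it makes it possible to control which object a person interacts with in a generated video without specifying the motion in detail. That is useful for video content creation, for high-level action planning in robotics, and for synthesizing 3D human-object interaction motion.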

Abstract

We present a target-aware video diffusion model that generates videos from an input image in which an actor interacts with a specified target while performing a desired action. The target is defined by a segmentation mask and the desired action is described via a text prompt. Unlike existing controllable image-to-video diffusion models that often rely on dense structural or motion cues to guide the actor's movements toward the target, our target-aware model requires only a simple mask to indicate the target, leveraging the generalization capabilities of pretrained models to produce plausible actions. This makes our method particularly effective for human-object interaction (HOI) scenarios, where providing precise action guidance is challenging, and further enables the use of video diffusion models for high-level action planning in applications such as robotics. We build our target-aware model by extending a baseline model to incorporate the target mask as an additional input. To enforce target awareness, we introduce a special token that encodes the target's spatial information within the text prompt. We then fine-tune the model with our curated dataset using a novel cross-attention loss that aligns the cross-attention maps associated with this token with the input target mask. To further improve performance, we selectively apply this loss to the most semantically relevant transformer blocks and attention regions. Experimental results show that our target-aware model outperforms existing solutions in generating videos where actors interact accurately with the specified targets. We further demonstrate its efficacy in two downstream applications: video content creation and zero-shot 3D HOI motion synthesis.
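The cross-attention loss described in the abstract can be illustrated with a small sketch. This is not the authors' implementation: the abstract does not give the loss formula, so the min-max normalization and mean squared error below are assumptions, as are all function names (`target_attention_map`, `cross_attention_loss`, `selective_loss`) and the toy logits. Plain Python lists stand in for the tensors a real model would use.

```python
import math

def softmax(row):
    """Numerically stable softmax over one row of logits."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def target_attention_map(scores, target_idx):
    """scores[p][t] is the raw query-key logit of spatial position p for text
    token t. Returns the attention each position places on the target token."""
    return [softmax(row)[target_idx] for row in scores]

def cross_attention_loss(attn, mask):
    """Assumed loss form: MSE between the min-max-normalized attention map of
    the target token and the binary target mask (both flattened)."""
    lo, hi = min(attn), max(attn)
    span = (hi - lo) or 1.0  # avoid division by zero on a flat map
    norm = [(a - lo) / span for a in attn]
    return sum((n - m) ** 2 for n, m in zip(norm, mask)) / len(mask)

def selective_loss(per_block_scores, mask, target_idx, selected_blocks):
    """Average the loss over a chosen subset of transformer blocks only
    (hypothetical selection; the abstract does not say which blocks are used)."""
    losses = [
        cross_attention_loss(
            target_attention_map(per_block_scores[b], target_idx), mask
        )
        for b in selected_blocks
    ]
    return sum(losses) / len(losses)

# Toy example: a flattened 2x2 feature map, 3 text tokens, target token at index 2.
mask = [1, 0, 0, 1]  # target occupies positions 0 and 3
aligned = [[0, 0, 5], [0, 0, -5], [0, 0, -5], [0, 0, 5]]     # attends to the target
misaligned = [[0, 0, -5], [0, 0, 5], [0, 0, 5], [0, 0, -5]]  # attends elsewhere

loss_aligned = selective_loss([aligned, misaligned], mask, 2, [0])
loss_misaligned = selective_loss([aligned, misaligned], mask, 2, [1])
loss_both = selective_loss([aligned, misaligned], mask, 2, [0, 1])
```

In this toy setup the loss is low when the special token's attention concentrates inside the mask and high when it falls outside, which is the behavior fine-tuning with such a loss would encourage. In a video model the attention map would also span the frame dimension, and the mask would need to be resized to each block's feature resolution.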