Teaching with Lies: Curriculum DPO on Synthetic Negatives for Hallucination Detection

Shrey Pandit, Ashwin Vinod, Liu Leqi, Ying Ding

2025-05-26

Summary

This paper presents a way to train large language models to detect hallucinations, meaning content that is made up, by deliberately training them on fabricated examples.

What's the problem?

The problem is that language models sometimes generate information that sounds real but is actually made up, and it can be hard for the models themselves to recognize when this happens.

What's the solution?

The researchers designed a training method in which carefully crafted fake statements, or synthetic negatives, are fed to the models as part of a learning curriculum within DPO alignment. This helps the models get better at telling true information from false, so they catch hallucinations more reliably.

Why does it matter?

This is important because it helps make AI models more trustworthy and reliable, especially in situations where giving out wrong or made-up information could cause confusion or harm.

Abstract

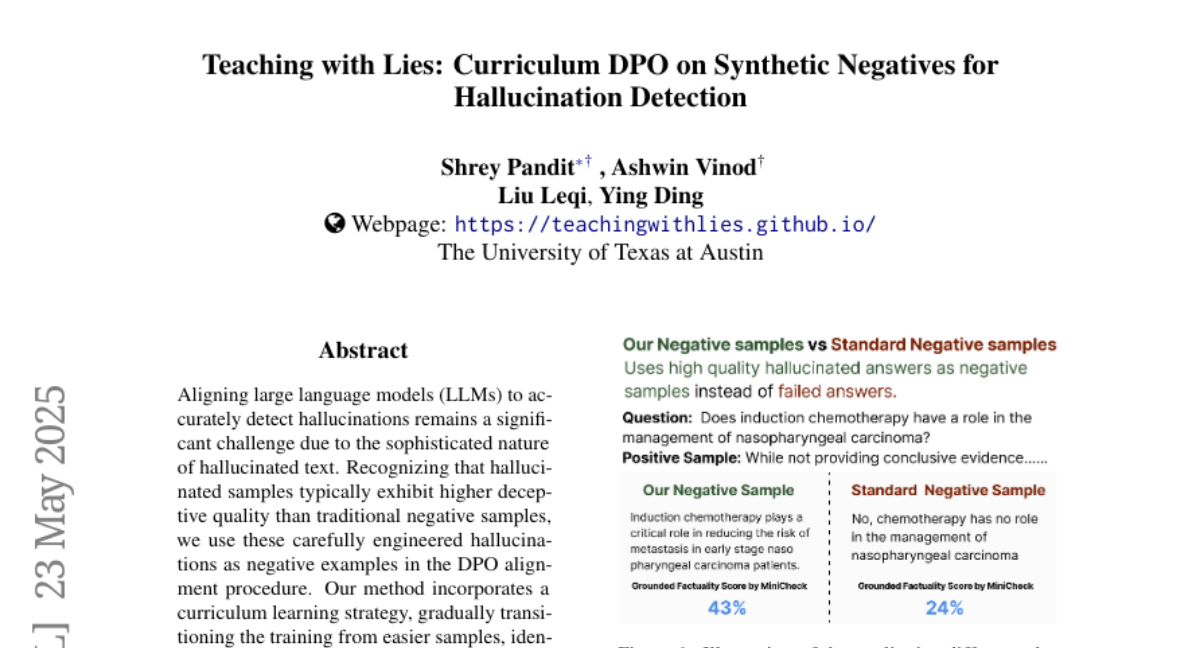

The use of carefully crafted hallucinations in a curriculum learning approach within the DPO alignment procedure significantly enhances LLMs' hallucination detection abilities.
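As a rough illustration of the two ingredients named above, the sketch below computes the standard DPO loss for a single preference pair and groups pairs into curriculum stages. It is a minimal sketch, not the authors' implementation: the `PreferencePair` type, the `difficulty` score, the easy-to-hard ordering, and the stage count are all assumptions introduced for the example, and the log-probabilities would in practice come from a policy model and a frozen reference model.

```python
import math
from dataclasses import dataclass


@dataclass
class PreferencePair:
    prompt: str
    chosen: str        # faithful answer (preferred)
    rejected: str      # synthetic hallucination (dispreferred)
    difficulty: float  # hypothetical score; higher = harder to tell apart


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Standard DPO loss for one pair: -log(sigmoid(beta * margin))."""
    margin = beta * ((policy_chosen_logp - ref_chosen_logp)
                     - (policy_rejected_logp - ref_rejected_logp))
    # Numerically stable form of log(1 + exp(-margin)).
    if margin > 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def curriculum_stages(pairs, num_stages=3):
    """Sort pairs by the assumed difficulty score and split into stages."""
    if not pairs:
        return []
    ordered = sorted(pairs, key=lambda p: p.difficulty)
    size = math.ceil(len(ordered) / num_stages)
    return [ordered[i:i + size] for i in range(0, len(ordered), size)]
```

Training would then iterate over the stages in order, averaging `dpo_loss` over each batch. How difficulty is actually measured is left open here, since the summary does not say.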