Temporal Regularization Makes Your Video Generator Stronger

Harold Haodong Chen, Haojian Huang, Xianfeng Wu, Yexin Liu, Yajing Bai, Wen-Jie Shu, Harry Yang, Ser-Nam Lim

2025-03-20

Summary

This paper explores ways to improve video generation by focusing on the temporal quality of the generated videos.

What's the problem?

Generating videos with consistent motion and realistic dynamics across frames is difficult. It is especially hard to make the generated motion both temporally coherent and diverse.

What's the solution?

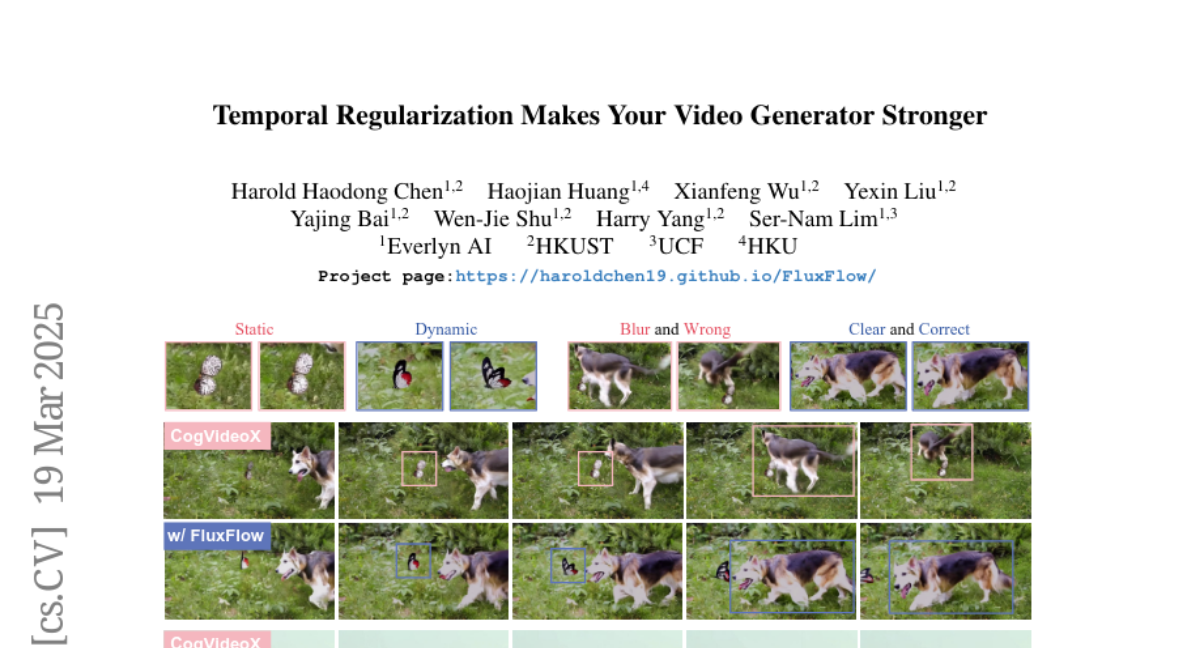

The researchers introduced FluxFlow, a training strategy that applies controlled temporal perturbations to the video data. Because it operates purely at the data level, it requires no changes to the model architecture.
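The summary says only that FluxFlow applies "controlled temporal perturbations" to training clips. The sketch below illustrates one plausible form of such a perturbation and is an assumption, not the authors' exact procedure. It permutes the positions of a small, random fraction of frames and leaves every other frame where it was. The function name `perturb_frames` and its `ratio` and `seed` parameters are hypothetical. Frames can be any objects, such as decoded image arrays.

```python
import random
from typing import List, Optional, Sequence, TypeVar

T = TypeVar("T")


def perturb_frames(frames: Sequence[T], ratio: float, seed: Optional[int] = None) -> List[T]:
    """Hypothetical frame-level temporal perturbation (illustrative sketch only).

    Picks round(ratio * len(frames)) positions at random and shuffles the frames
    among those positions. All other frames keep their original index, so the
    amount of disruption is bounded ("controlled") by `ratio`.
    """
    if not 0.0 <= ratio <= 1.0:
        raise ValueError("ratio must be in [0, 1]")
    rng = random.Random(seed)
    out = list(frames)  # copy, so the caller's clip is never mutated
    k = int(round(ratio * len(out)))
    if k < 2:
        return out  # permuting fewer than two frames cannot change the order
    positions = sorted(rng.sample(range(len(out)), k))
    moved = [out[i] for i in positions]
    rng.shuffle(moved)  # may, by chance, leave the selected frames in place
    for pos, frame in zip(positions, moved):
        out[pos] = frame
    return out


if __name__ == "__main__":
    clip = list(range(16))  # stand-in for 16 decoded video frames
    print(perturb_frames(clip, ratio=0.25, seed=0))
```

With `ratio=0.25` on a 16-frame clip, at most 4 frames change position. The clip's content is untouched and only its temporal order is disturbed, which is why a perturbation like this can sit in the data pipeline without any change to the generator.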

Why does it matter?

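This work shows that a simple, data-level technique can improve the temporal coherence and diversity of generated videos across U-Net, DiT, and autoregressive models. It requires no architectural changes and preserves spatial fidelity.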

Abstract

Temporal quality is a critical aspect of video generation, as it ensures consistent motion and realistic dynamics across frames. However, achieving high temporal coherence and diversity remains challenging. In this work, we present the first exploration of temporal augmentation in video generation and, as an initial investigation, introduce FluxFlow, a strategy designed to enhance temporal quality. Operating at the data level, FluxFlow applies controlled temporal perturbations without requiring architectural modifications. Extensive experiments on UCF-101 and VBench benchmarks demonstrate that FluxFlow significantly improves temporal coherence and diversity across various video generation models, including U-Net, DiT, and AR-based architectures, while preserving spatial fidelity. These findings highlight the potential of temporal augmentation as a simple yet effective approach to advancing video generation quality.
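The abstract stresses that FluxFlow works "at the data level" and needs no architectural modifications. The sketch below makes that point concrete. It wraps an existing clip dataset so that, with probability `p`, each sample has its frames reordered in contiguous blocks, while the model and training loop stay exactly as they were. The block-shuffling scheme and all names (`perturb_blocks`, `TemporallyAugmentedDataset`, `p`, `block_size`) are hypothetical illustrations, not the paper's implementation.

```python
import random
from typing import Generic, List, Optional, Sequence, TypeVar

T = TypeVar("T")


def perturb_blocks(frames: Sequence[T], block_size: int, rng: random.Random) -> List[T]:
    """Hypothetical block-level perturbation: shuffle the order of contiguous frame blocks.

    Frames inside each block keep their local order; a trailing partial block
    (if any) is shuffled along with the full blocks.
    """
    if block_size < 1:
        raise ValueError("block_size must be >= 1")
    blocks = [list(frames[i:i + block_size]) for i in range(0, len(frames), block_size)]
    rng.shuffle(blocks)
    return [frame for block in blocks for frame in block]


class TemporallyAugmentedDataset(Generic[T]):
    """Wraps any indexable dataset of clips; the model itself is never touched."""

    def __init__(self, base: Sequence[Sequence[T]], p: float, block_size: int,
                 seed: Optional[int] = None) -> None:
        self.base = base
        self.p = p                    # probability of perturbing a given sample
        self.block_size = block_size  # frames per contiguous block
        self.rng = random.Random(seed)

    def __len__(self) -> int:
        return len(self.base)

    def __getitem__(self, i: int) -> List[T]:
        clip = list(self.base[i])
        if self.rng.random() < self.p:  # random() is in [0, 1), so p=1 always perturbs
            clip = perturb_blocks(clip, self.block_size, self.rng)
        return clip


if __name__ == "__main__":
    clips = [list(range(12)), list(range(100, 108))]  # stand-ins for frame sequences
    ds = TemporallyAugmentedDataset(clips, p=0.5, block_size=4, seed=0)
    for idx in range(len(ds)):
        print(ds[idx])
```

Keeping the perturbation inside the dataset wrapper means the same wrapper could, under this assumption, be placed in front of a U-Net, DiT, or autoregressive generator alike, which mirrors the model-agnostic claim in the abstract.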