TesserAct: Learning 4D Embodied World Models

Haoyu Zhen, Qiao Sun, Hongxin Zhang, Junyan Li, Siyuan Zhou, Yilun Du, Chuang Gan

2025-04-30

Summary

This paper introduces TesserAct, an AI model that learns from videos to understand and predict how 3D scenes move and change over time. The "4D" in the title refers to 3D space plus time.

What's the problem?

Most AI models struggle to capture both the 3D shape of objects and how they move in the real world, which makes it hard to work out which action caused a change or to predict what will happen next.

What's the solution?

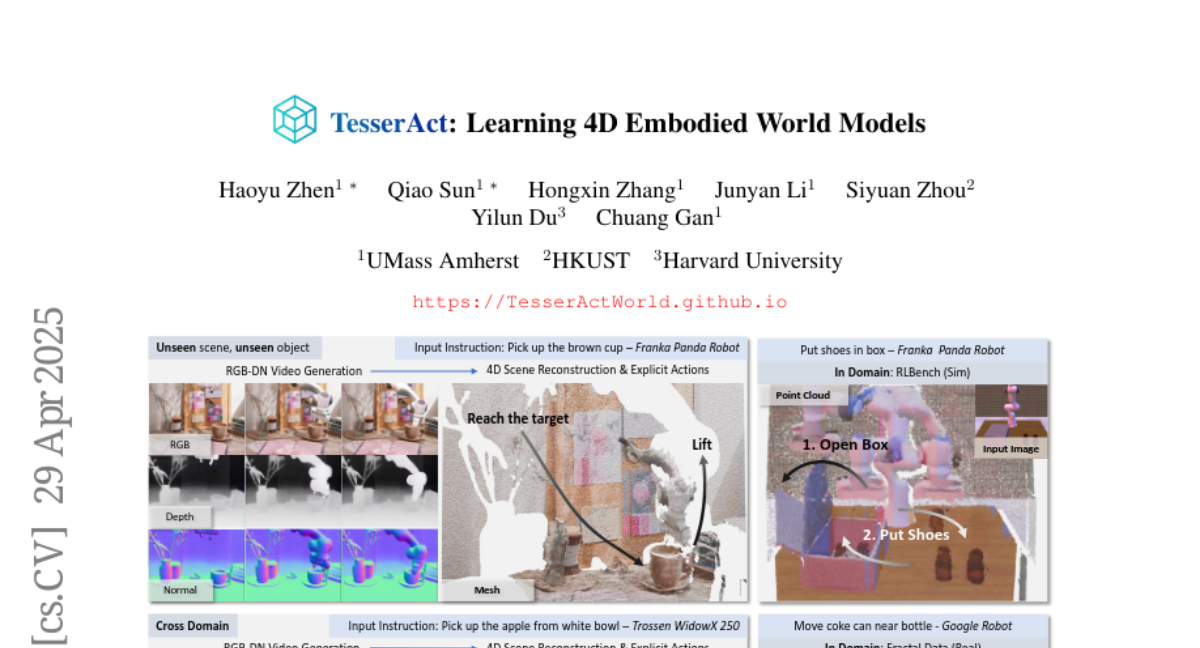

The researchers created a 4D world model by training it on RGB-DN videos, which include color, depth, and surface-normal (surface orientation) information for every frame. This helps the AI get much better at tasks like working out which actions produce a given motion, creating new views of a scene, and making smarter decisions in simulations.

Why does it matter?

This matters because it could make robots and AI much better at understanding and interacting with the real world, which is important for things like self-driving cars, virtual reality, and advanced robotics.

Abstract

The paper introduces a 4D world model trained on RGB-DN videos to predict spatial and temporal dynamics in embodied environments, improving inverse dynamics learning, view synthesis, and policy performance.
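To make the term "RGB-DN video" concrete, here is a minimal sketch of how such a clip could be laid out as an array: color (3 channels), depth (1 channel), and surface normals (3 channels) stacked per pixel and per frame. The function name `make_rgbdn_clip`, the 7-channel layout, and the random placeholder data are assumptions made for illustration only; they are not taken from the paper and do not describe how TesserAct actually stores or encodes its training data.

```python
import numpy as np


def make_rgbdn_clip(rgb, depth, normal):
    """Stack per-frame RGB, depth, and normal maps into one array.

    Hypothetical layout (an assumption, not the paper's format):
      rgb:    (T, H, W, 3) color values
      depth:  (T, H, W)    per-pixel depth
      normal: (T, H, W, 3) surface normals, any nonzero length
    Returns (T, H, W, 7): channels 0-2 RGB, 3 depth, 4-6 unit normals.
    """
    rgb = np.asarray(rgb, dtype=np.float32)
    depth = np.asarray(depth, dtype=np.float32)
    normal = np.asarray(normal, dtype=np.float32)
    if rgb.shape[:3] != depth.shape or rgb.shape != normal.shape:
        raise ValueError("rgb, depth, and normal must share T, H, W")

    # Rescale normals to unit length, guarding against division by zero.
    length = np.linalg.norm(normal, axis=-1, keepdims=True)
    unit_normal = normal / np.maximum(length, 1e-8)

    # Add a trailing channel axis to depth so all three can be concatenated.
    return np.concatenate([rgb, depth[..., None], unit_normal], axis=-1)


# Random placeholder data standing in for a 4-frame, 8x8 clip.
T, H, W = 4, 8, 8
rng = np.random.default_rng(0)
clip = make_rgbdn_clip(
    rgb=rng.random((T, H, W, 3)),
    depth=rng.random((T, H, W)) + 0.5,
    normal=rng.normal(size=(T, H, W, 3)),
)
print(clip.shape)  # (4, 8, 8, 7)
```

Each pixel in each frame then carries appearance (RGB) and geometry (depth and surface orientation) together, which is the kind of signal the abstract says the model is trained on.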