TextCrafter: Accurately Rendering Multiple Texts in Complex Visual Scenes

Nikai Du, Zhennan Chen, Zhizhou Chen, Shan Gao, Xi Chen, Zhengkai Jiang, Jian Yang, Ying Tai

2025-04-01

Summary

This paper discusses a new method for AI to generate images with multiple texts in different places that are clear and readable.

What's the problem?

It's hard for AI to create images with lots of text that isn't blurry, distorted, or missing.

What's the solution?

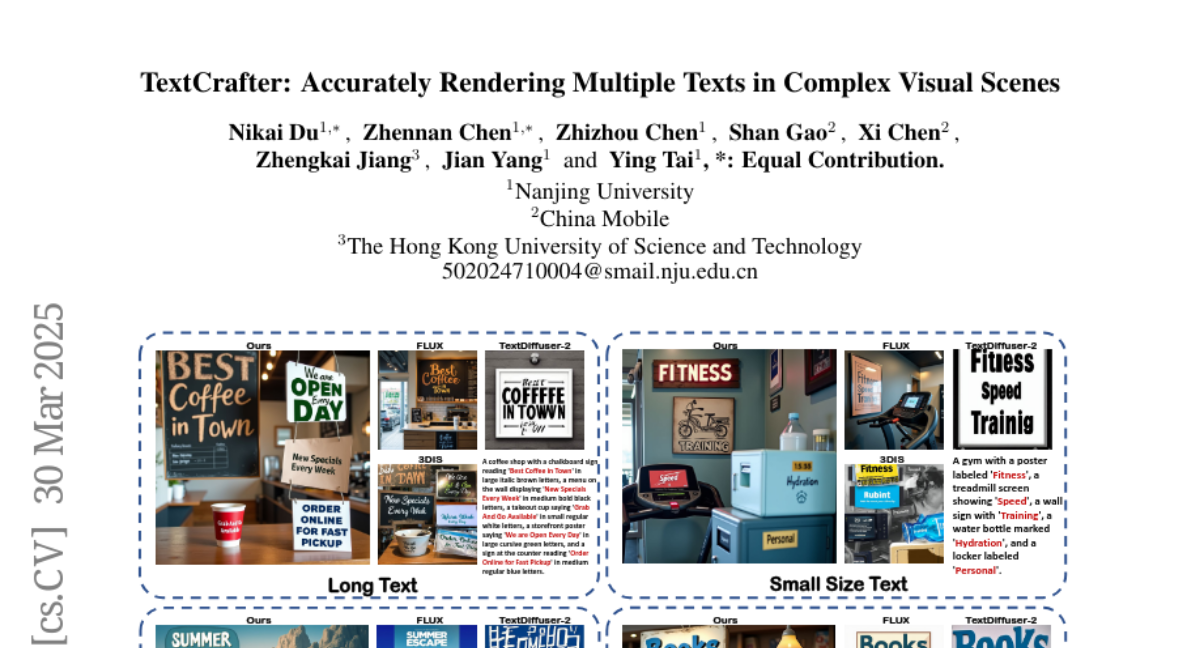

The researchers developed TextCrafter, which breaks down the process into smaller steps and focuses on making the text stand out.

Why it matters?

This work matters because it can improve AI's ability to create images with complex layouts and detailed text, which is useful for things like graphic design and advertising.

Abstract

This paper explores the task of Complex Visual Text Generation (CVTG), which centers on generating intricate textual content distributed across diverse regions within visual images. In CVTG, image generation models often rendering distorted and blurred visual text or missing some visual text. To tackle these challenges, we propose TextCrafter, a novel multi-visual text rendering method. TextCrafter employs a progressive strategy to decompose complex visual text into distinct components while ensuring robust alignment between textual content and its visual carrier. Additionally, it incorporates a token focus enhancement mechanism to amplify the prominence of visual text during the generation process. TextCrafter effectively addresses key challenges in CVTG tasks, such as text confusion, omissions, and blurriness. Moreover, we present a new benchmark dataset, CVTG-2K, tailored to rigorously evaluate the performance of generative models on CVTG tasks. Extensive experiments demonstrate that our method surpasses state-of-the-art approaches.