TextToon: Real-Time Text Toonify Head Avatar from Single Video

Luchuan Song, Lele Chen, Celong Liu, Pinxin Liu, Chenliang Xu

2024-10-10

Summary

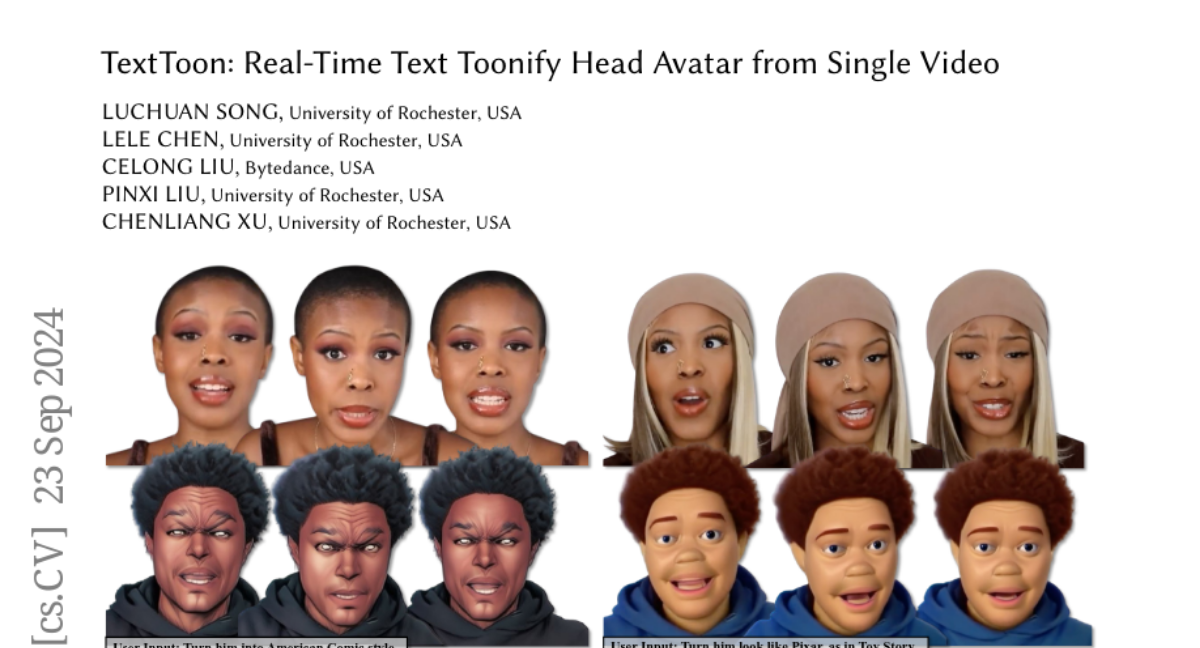

This paper introduces TextToon, a new method that creates a cartoon-style avatar from a single video of a person's face, allowing for real-time animation based on different video inputs.

What's the problem?

Existing methods for creating animated avatars often require multiple camera angles or complex setups, making them difficult to use in real-world applications. They also struggle with maintaining control over the avatar's appearance and expressions during animation, which limits their effectiveness for dynamic interactions.

What's the solution?

TextToon addresses these challenges by using a single video input and written style instructions to generate a high-quality toonified avatar. The system employs advanced techniques like a conditional embedding Tri-plane to learn realistic facial features and an adaptive pixel-translation neural network to enhance the avatar's stylization. This allows the avatar to be animated in real-time, achieving 48 frames per second on powerful machines and 15-18 frames per second on mobile devices.

Why it matters?

This research is important because it simplifies the process of creating animated avatars, making it accessible for various applications such as virtual communication, gaming, and entertainment. By allowing users to animate their avatars in real-time based on simple video inputs, TextToon opens up new possibilities for engaging digital experiences.

Abstract

We propose TextToon, a method to generate a drivable toonified avatar. Given a short monocular video sequence and a written instruction about the avatar style, our model can generate a high-fidelity toonified avatar that can be driven in real-time by another video with arbitrary identities. Existing related works heavily rely on multi-view modeling to recover geometry via texture embeddings, presented in a static manner, leading to control limitations. The multi-view video input also makes it difficult to deploy these models in real-world applications. To address these issues, we adopt a conditional embedding Tri-plane to learn realistic and stylized facial representations in a Gaussian deformation field. Additionally, we expand the stylization capabilities of 3D Gaussian Splatting by introducing an adaptive pixel-translation neural network and leveraging patch-aware contrastive learning to achieve high-quality images. To push our work into consumer applications, we develop a real-time system that can operate at 48 FPS on a GPU machine and 15-18 FPS on a mobile machine. Extensive experiments demonstrate the efficacy of our approach in generating textual avatars over existing methods in terms of quality and real-time animation. Please refer to our project page for more details: https://songluchuan.github.io/TextToon/.