The Diffusion Duality

Subham Sekhar Sahoo, Justin Deschenaux, Aaron Gokaslan, Guanghan Wang, Justin Chiu, Volodymyr Kuleshov

2025-06-16

Summary

This paper introduces Duo, a method that improves a class of AI models called uniform-state discrete diffusion models by borrowing techniques from Gaussian diffusion models. Duo helps these models train faster and generate text much more quickly, sometimes producing high-quality results in only a few steps.

What's the problem?

Uniform-state discrete diffusion models can fix their own mistakes while generating text, but they usually produce lower-quality text, and produce it more slowly, than other popular models such as autoregressive models. They also lack some of the advanced training and sampling techniques that Gaussian diffusion models use.

What's the solution?

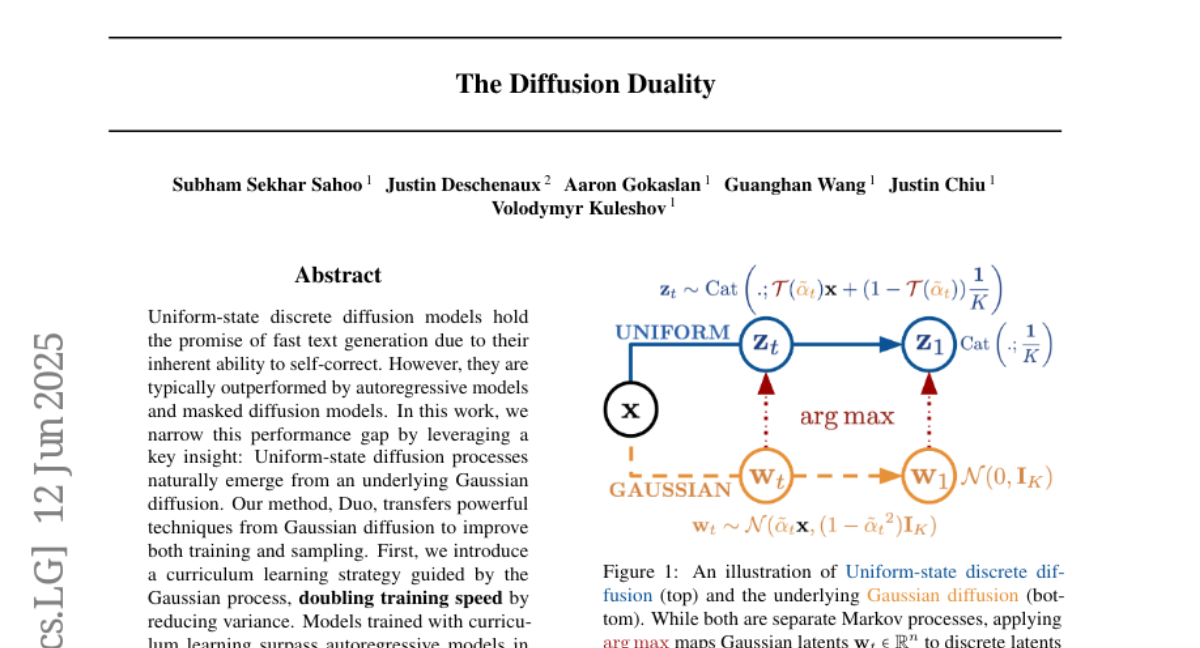

The solution is Duo, which builds on a deep connection between discrete and Gaussian diffusion models: applying a math operation called argmax to a Gaussian diffusion process yields a uniform-state discrete diffusion process. Using this connection, Duo transfers techniques from Gaussian diffusion to the discrete setting. One is a training strategy called curriculum learning, which doubles training speed. Another is a new method called Discrete Consistency Distillation, which lets the model generate text in very few steps and makes text generation about 100 times faster.
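The argmax connection can be checked numerically with a small simulation. The sketch below is an illustration, not the paper's implementation: it adds Gaussian noise to a one-hot token vector, takes the argmax, and estimates the resulting token distribution. The function names, the toy vocabulary size, and the variance-preserving noise scale `sigma = sqrt(1 - alpha^2)` are assumptions made for this example. If the connection holds, the original token should be kept with a probability that grows with `alpha`, and all other tokens should be roughly equally likely, which is the shape of a uniform-state discrete diffusion marginal.

```python
import random


def argmax_of_gaussian_latent(token, vocab_size, alpha, rng):
    """Noise a one-hot vector with Gaussian diffusion, then take the argmax.

    Assumes a variance-preserving schedule: latent = alpha * x + sigma * eps,
    with sigma = sqrt(1 - alpha^2). This choice is an assumption of the sketch.
    """
    sigma = (1.0 - alpha ** 2) ** 0.5
    latent = [
        alpha * (1.0 if i == token else 0.0) + sigma * rng.gauss(0.0, 1.0)
        for i in range(vocab_size)
    ]
    # Index of the largest coordinate is the resulting discrete token.
    return max(range(vocab_size), key=latent.__getitem__)


def empirical_marginal(token, vocab_size, alpha, num_samples=20000, seed=0):
    """Monte Carlo estimate of the token distribution after noising + argmax."""
    rng = random.Random(seed)
    counts = [0] * vocab_size
    for _ in range(num_samples):
        counts[argmax_of_gaussian_latent(token, vocab_size, alpha, rng)] += 1
    return [c / num_samples for c in counts]


if __name__ == "__main__":
    # alpha = 0 is pure noise; larger alpha keeps more of the clean signal.
    for alpha in (0.0, 0.5, 0.9):
        probs = empirical_marginal(token=2, vocab_size=5, alpha=alpha)
        print(alpha, [round(p, 3) for p in probs])
```

With `alpha = 0` the estimated distribution should be close to uniform over the five tokens. As `alpha` increases, the mass on the original token (index 2) rises while the remaining mass stays spread evenly over the other tokens.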

Why does it matter?

This matters because it makes diffusion-based AI models much faster and better at generating text, narrowing the gap with autoregressive models and even surpassing them on some tests. This could lead to AI systems that write or reply much more quickly in real time, making chatbots and other language tools more useful and efficient.

Abstract

Duo improves uniform-state discrete diffusion models by transferring techniques from Gaussian diffusion, enhancing training speed and enabling fast few-step text generation.