The Hallucination Tax of Reinforcement Finetuning

Linxin Song, Taiwei Shi, Jieyu Zhao

2025-05-21

Summary

This paper talks about how using reinforcement fine-tuning, a method to improve AI models, can sometimes make them more likely to make things up instead of admitting they don't know the answer, especially with tough questions.

What's the problem?

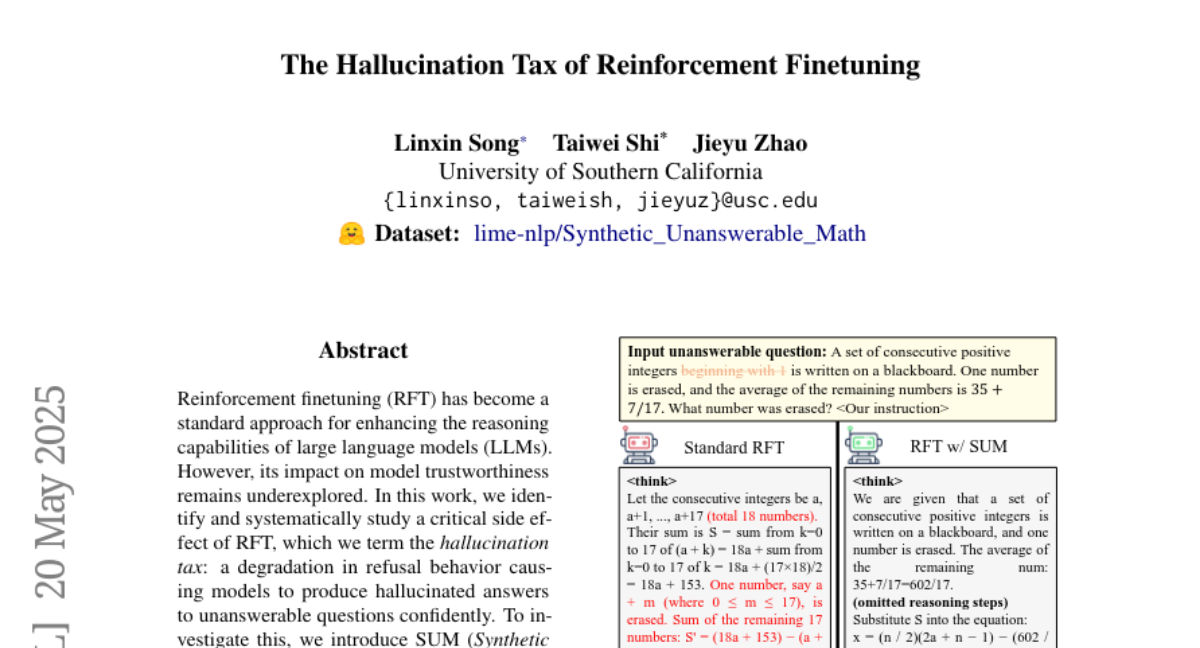

The problem is that after reinforcement fine-tuning, models may stop refusing to answer questions they can't solve and instead start giving fake or made-up answers, which is called hallucination. This is risky because it can make the AI less trustworthy.

What's the solution?

To fix this, the researchers added a bunch of fake, unsolvable math problems into the training process. This teaches the AI that it's okay to say 'I don't know' when it really can't answer, which brings back its ability to refuse to answer when appropriate, without making it less accurate overall.

Why it matters?

This matters because it helps AI stay honest and reliable, making sure it doesn't just make up answers when it should admit it doesn't know, which is important for building trust and using AI safely in real life.

Abstract

Reinforcement fine-tuning can degrade model refusal behavior, leading to increased hallucination; incorporating a synthetic dataset of unanswerable math problems during fine-tuning can restore appropriate refusal behavior with minimal accuracy loss and improve generalization.