TokenFlow: Unified Image Tokenizer for Multimodal Understanding and Generation

Liao Qu, Huichao Zhang, Yiheng Liu, Xu Wang, Yi Jiang, Yiming Gao, Hu Ye, Daniel K. Du, Zehuan Yuan, Xinglong Wu

2024-12-05

Summary

This paper introduces TokenFlow, a new image tokenizer that improves how AI understands and generates images by effectively combining different types of visual information.

What's the problem?

Traditional image tokenizers often struggle to balance the needs of understanding and generating images. They typically use a single method that doesn't work well for both tasks, leading to poor performance when trying to analyze images or create new ones. This creates a gap in how well AI can interpret and produce visual content.

What's the solution?

TokenFlow addresses this problem with a dual-codebook architecture that separates the learning of high-level semantic features (for understanding) from pixel-level details (for generation). This allows the model to access both types of information easily. By using a shared mapping mechanism, TokenFlow ensures that these two aspects remain aligned, resulting in better performance in both understanding and generating images. The researchers conducted extensive experiments showing that TokenFlow outperforms existing models, achieving significant improvements in various tasks.

Why it matters?

This research is important because it enhances the capabilities of AI in handling images, making it more effective for applications like image recognition, editing, and generation. By bridging the gap between understanding and generating images, TokenFlow can lead to advancements in fields such as digital art, virtual reality, and automated content creation, where high-quality visuals are essential.

Abstract

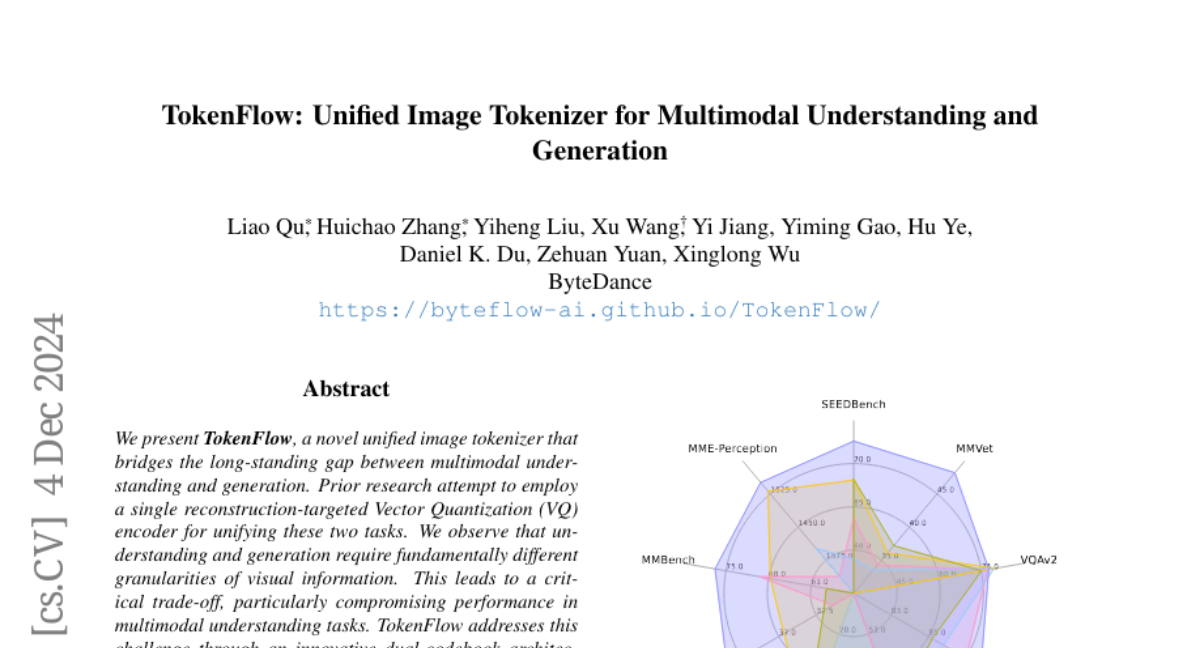

We present TokenFlow, a novel unified image tokenizer that bridges the long-standing gap between multimodal understanding and generation. Prior research attempt to employ a single reconstruction-targeted Vector Quantization (VQ) encoder for unifying these two tasks. We observe that understanding and generation require fundamentally different granularities of visual information. This leads to a critical trade-off, particularly compromising performance in multimodal understanding tasks. TokenFlow addresses this challenge through an innovative dual-codebook architecture that decouples semantic and pixel-level feature learning while maintaining their alignment via a shared mapping mechanism. This design enables direct access to both high-level semantic representations crucial for understanding tasks and fine-grained visual features essential for generation through shared indices. Our extensive experiments demonstrate TokenFlow's superiority across multiple dimensions. Leveraging TokenFlow, we demonstrate for the first time that discrete visual input can surpass LLaVA-1.5 13B in understanding performance, achieving a 7.2\% average improvement. For image reconstruction, we achieve a strong FID score of 0.63 at 384*384 resolution. Moreover, TokenFlow establishes state-of-the-art performance in autoregressive image generation with a GenEval score of 0.55 at 256*256 resolution, achieving comparable results to SDXL.