Tokenize Image as a Set

Zigang Geng, Mengde Xu, Han Hu, Shuyang Gu

2025-03-21

Summary

This paper presents a new method for AI image generation that represents an image as an unordered set of tokens, rather than as a sequence of codes tied to fixed positions.

What's the problem?

Traditional AI image generation methods serialize an image into latent codes at fixed positions with a uniform compression ratio, so simple and complex regions receive the same coding capacity regardless of how much content they hold.

What's the solution?

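The researchers represent each image as an unordered set of tokens (TokenSet), which lets coding capacity follow the semantic complexity of each region and improves how global context is aggregated. To model these sets, they convert each one into a fixed-length integer sequence whose entries sum to a constant, and they train a Fixed-Sum Discrete Diffusion model on those sequences.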

Why does it matter?

This work matters because it can lead to AI that generates more realistic and detailed images by better understanding the different parts of an image and how they relate to each other.

Abstract

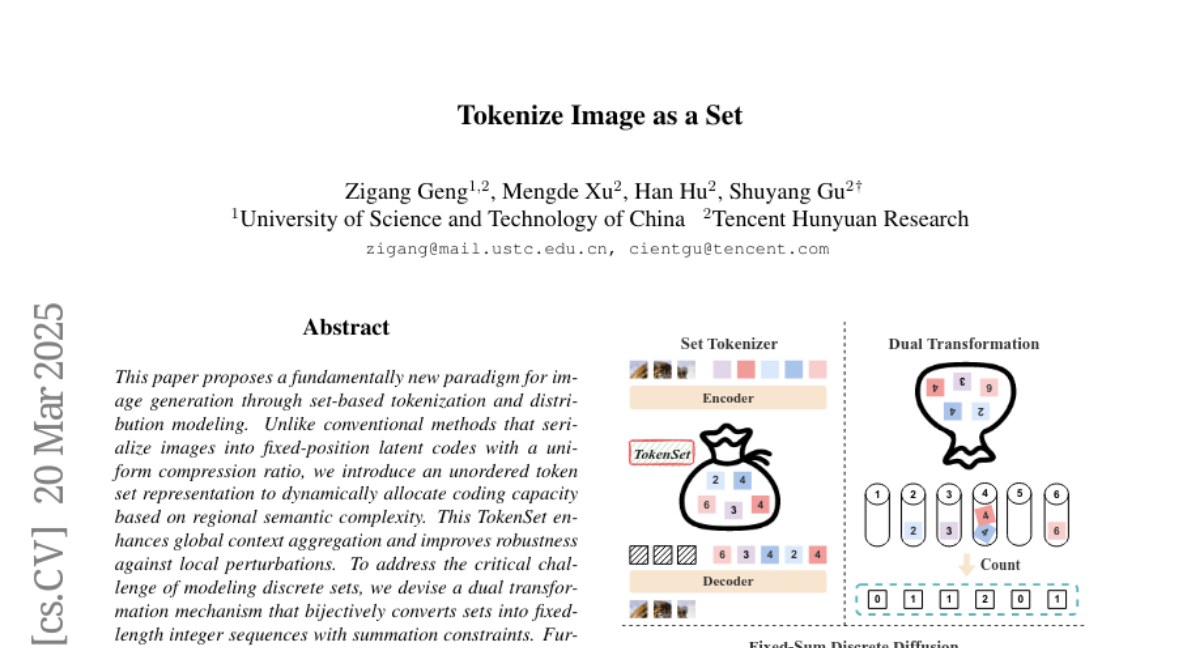

This paper proposes a fundamentally new paradigm for image generation through set-based tokenization and distribution modeling. Unlike conventional methods that serialize images into fixed-position latent codes with a uniform compression ratio, we introduce an unordered token set representation to dynamically allocate coding capacity based on regional semantic complexity. This TokenSet enhances global context aggregation and improves robustness against local perturbations. To address the critical challenge of modeling discrete sets, we devise a dual transformation mechanism that bijectively converts sets into fixed-length integer sequences with summation constraints. Further, we propose Fixed-Sum Discrete Diffusion--the first framework to simultaneously handle discrete values, fixed sequence length, and summation invariance--enabling effective set distribution modeling. Experiments demonstrate our method's superiority in semantic-aware representation and generation quality. Our innovations, spanning novel representation and modeling strategies, advance visual generation beyond traditional sequential token paradigms. Our code and models are publicly available at https://github.com/Gengzigang/TokenSet.
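The set-to-sequence conversion mentioned in the abstract can be illustrated with a count vector. The sketch below is not taken from the released code; it assumes that tokens are integer indices into a codebook, that a token may appear more than once, and that the fixed-length sequence records how often each codebook entry occurs. The function names and the codebook size of 8 are hypothetical and chosen only for illustration.

```python
def set_to_counts(tokens, codebook_size):
    """Map an unordered collection of token indices to a count vector.

    The result always has length `codebook_size`, and its entries sum to
    the number of tokens, whatever order the tokens arrive in.
    """
    counts = [0] * codebook_size
    for t in tokens:
        if not 0 <= t < codebook_size:
            raise ValueError(f"token {t} is outside the codebook")
        counts[t] += 1
    return counts


def counts_to_set(counts):
    """Invert set_to_counts, returning the tokens in sorted (canonical) order."""
    tokens = []
    for index, count in enumerate(counts):
        tokens.extend([index] * count)
    return tokens


# Hypothetical example: 5 tokens drawn from a codebook with 8 entries.
tokens = [3, 0, 3, 5, 1]
counts = set_to_counts(tokens, codebook_size=8)

print(counts)                 # [1, 1, 0, 2, 0, 1, 0, 0]
print(sum(counts))            # 5, the number of tokens
print(counts_to_set(counts))  # [0, 1, 3, 3, 5]
```

Under these assumptions, any reordering of the tokens yields the same count vector, and the vector's length and sum stay fixed across images. Those are the three properties the abstract attributes to the sequences that Fixed-Sum Discrete Diffusion has to model: discrete values, fixed length, and a constant sum.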