ToolRL: Reward is All Tool Learning Needs

Cheng Qian, Emre Can Acikgoz, Qi He, Hongru Wang, Xiusi Chen, Dilek Hakkani-Tür, Gokhan Tur, Heng Ji

2025-04-22

Summary

This paper talks about ToolRL, a new way to train language models to use tools more effectively by focusing on how rewards are given during reinforcement learning.

What's the problem?

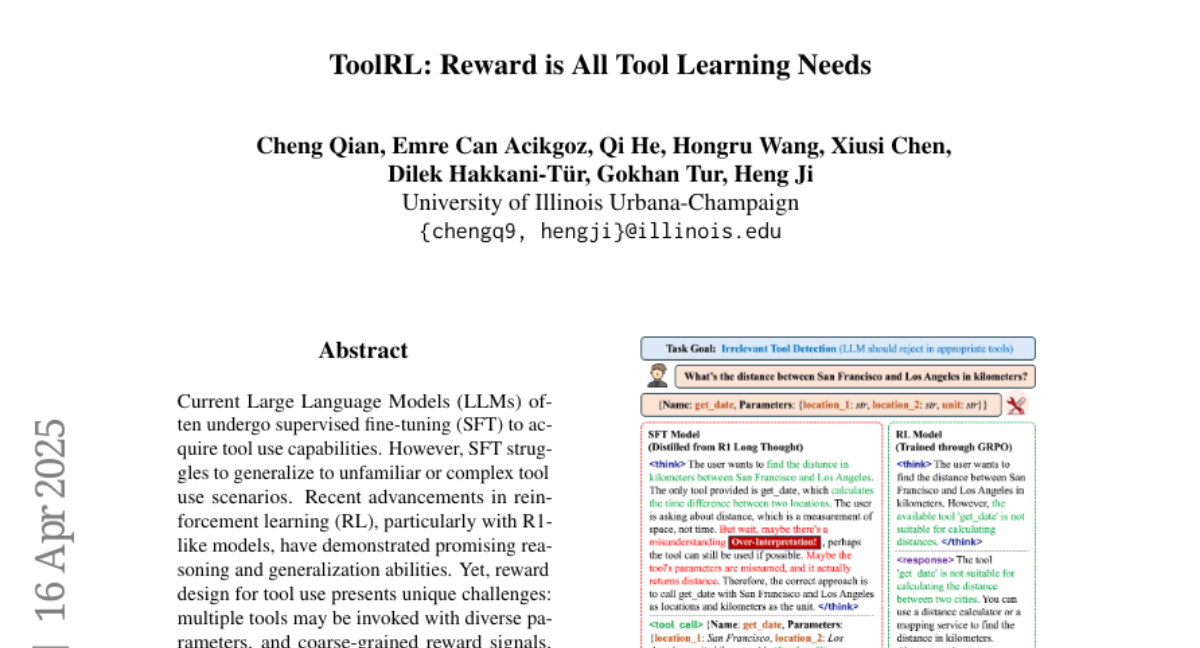

The problem is that language models usually learn to use tools through supervised fine-tuning, which doesn't help them handle new or complicated tool tasks very well. This is because the feedback they get is often too simple and doesn't guide them through the complex steps needed for real tool use.

What's the solution?

The researchers did a deep study on how to design rewards for tool use, testing different ways to give feedback about what the model does right or wrong. They created a special reward system that checks both if the model follows the right structure and if it uses tools correctly. They then used this system to train models with reinforcement learning, which helped the models learn to choose and use tools better, even in new situations.

Why it matters?

This matters because it makes language models much smarter and more flexible when using tools, which can help them solve a wider range of problems and work better in real-life applications where they need to interact with different systems or software.

Abstract

A comprehensive study on reward design for tool use within reinforcement learning improves LLMs' tool use capabilities and generalization performance over supervised fine-tuning.