Towards Dynamic Theory of Mind: Evaluating LLM Adaptation to Temporal Evolution of Human States

Yang Xiao, Jiashuo Wang, Qiancheng Xu, Changhe Song, Chunpu Xu, Yi Cheng, Wenjie Li, Pengfei Liu

2025-05-29

Summary

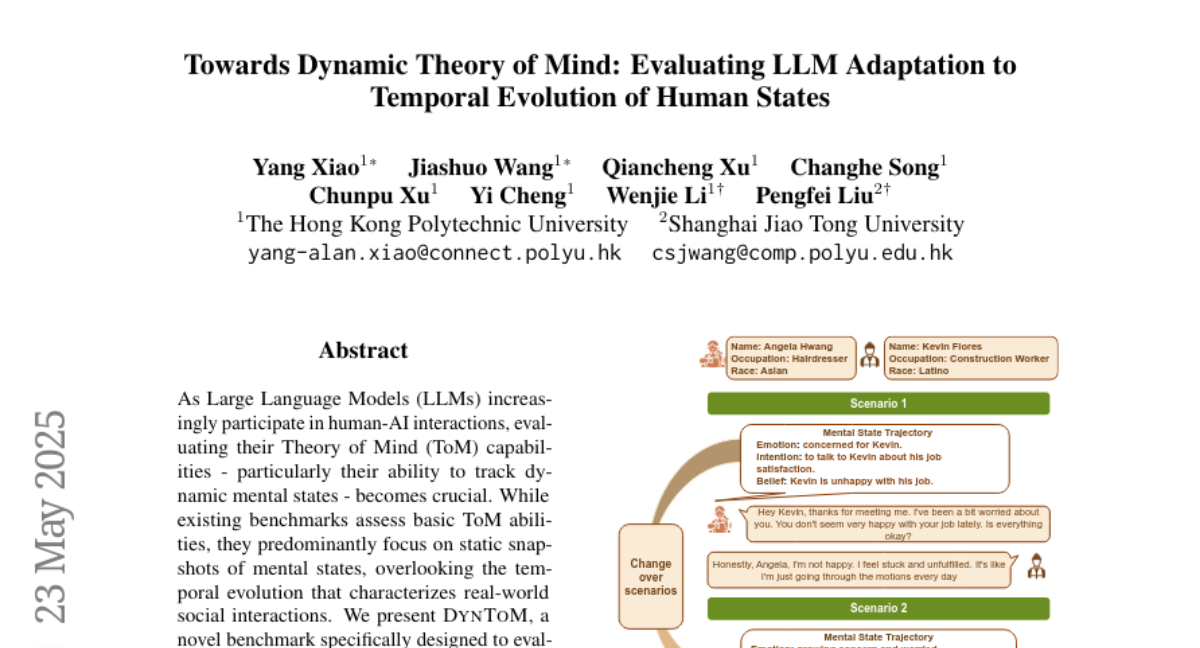

This paper introduces DynToM, a benchmark designed to test how well large language models (LLMs) can track and understand how people's thoughts and feelings change over time in different social situations.

What's the problem?

Most current tests check only whether an AI can understand someone's mental state at a single moment, but in real life people's thoughts and emotions evolve as situations change. Existing language models struggle to keep track of these changes, and they perform much worse than humans at understanding how mental states shift across scenarios.

What's the solution?

To address this, the researchers created DynToM, a large and realistic benchmark with thousands of social situations and questions. It specifically tests whether an AI can track and reason about how mental states change over time, not just in isolated moments. The researchers used the benchmark to evaluate several top language models and found that the models still fall well short of human performance.

Why does it matter?

As AI becomes more involved in social and interactive settings, it needs to understand not just what people think or feel right now, but how those thoughts and feelings might change. DynToM helps researchers see where AI falls short and can guide future improvements aimed at making AI better at understanding and interacting with people.

Abstract

The DynToM benchmark evaluates LLMs' ability to track and understand the temporal progression of mental states, revealing significant gaps compared to human performance.