Towards Embodied Cognition in Robots via Spatially Grounded Synthetic Worlds

Joel Currie, Gioele Migno, Enrico Piacenti, Maria Elena Giannaccini, Patric Bach, Davide De Tommaso, Agnieszka Wykowska

2025-05-21

Summary

This paper explores how robots can learn to reason about space and perspective, much as humans do, by training in detailed synthetic virtual worlds.

What's the problem?

Robots and AI systems often struggle to see things from another viewpoint or to work out where objects are in space. This makes it hard for them to interact naturally with the real world.

What's the solution?

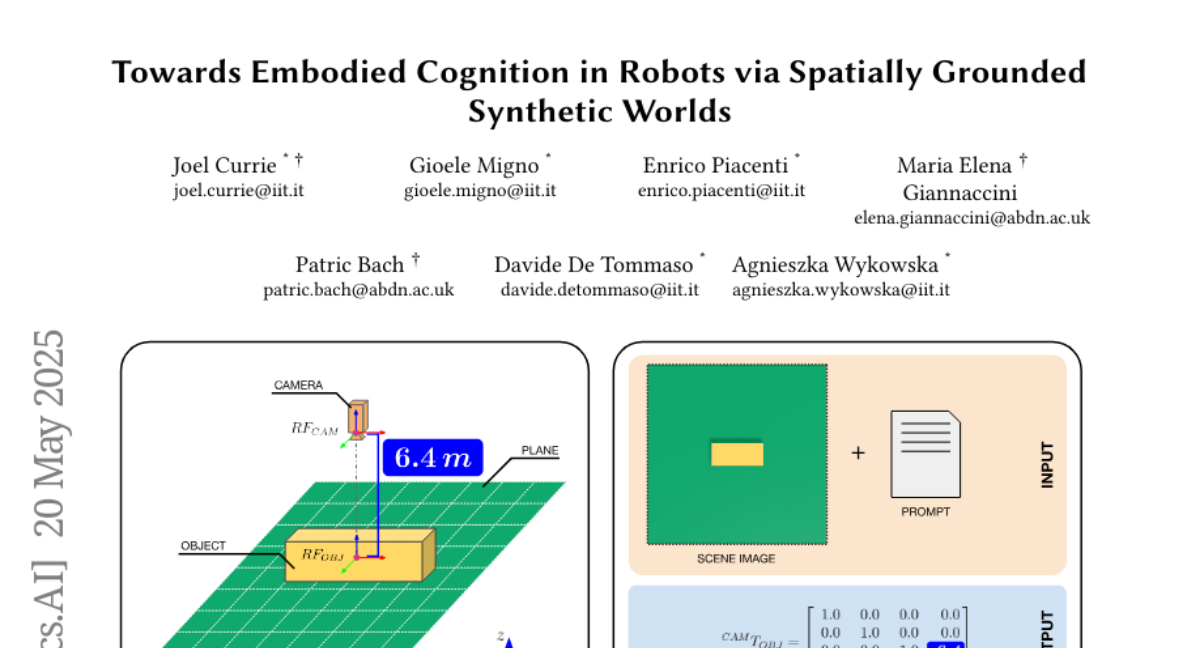

The researchers built a synthetic dataset in NVIDIA Omniverse for training Vision-Language Models on visual perspective taking and spatial reasoning tasks. The goal is to help robots better understand and respond to their surroundings.
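Visual perspective taking means working out what a scene looks like from someone else's position. For example, an object on your left can be on the right for a person facing you. The Python sketch below illustrates that idea with rigid-body transforms. It is not the paper's pipeline. The helper names (`rot_z`, `make_pose`, `relative_pose`, `side_from_viewpoint`) and the frame convention (x forward, y left, z up) are hypothetical choices made for this example. One plausible reason synthetic worlds suit this task is that a renderer knows every pose exactly, so labels like these can be computed rather than hand-annotated.

```python
import math

# Hypothetical helpers: a sketch of perspective taking with rigid transforms,
# not the paper's pipeline. Assumed frame convention: x forward, y left, z up.

def rot_z(theta):
    """3x3 rotation about the vertical z axis by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def make_pose(R, t):
    """4x4 homogeneous transform from a 3x3 rotation R and a translation t."""
    return [R[0] + [t[0]], R[1] + [t[1]], R[2] + [t[2]], [0.0, 0.0, 0.0, 1.0]]

def invert_pose(T):
    """Inverse of a rigid transform [R | t] is [R^T | -R^T t]."""
    Rt = [[T[j][i] for j in range(3)] for i in range(3)]
    t = [T[i][3] for i in range(3)]
    t_inv = [-sum(Rt[i][k] * t[k] for k in range(3)) for i in range(3)]
    return make_pose(Rt, t_inv)

def matmul4(A, B):
    """Product of two 4x4 matrices stored as nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)] for i in range(4)]

def relative_pose(T_world_viewer, T_world_object):
    """Object pose expressed in the viewer's frame: inv(T_world_viewer) @ T_world_object."""
    return matmul4(invert_pose(T_world_viewer), T_world_object)

def side_from_viewpoint(T_world_viewer, object_xyz, eps=1e-9):
    """Return 'left', 'right' or 'center' for an object point as seen by the viewer."""
    T_world_object = make_pose(rot_z(0.0), list(object_xyz))
    y = relative_pose(T_world_viewer, T_world_object)[1][3]  # lateral offset in viewer frame
    if abs(y) < eps:
        return "center"
    return "left" if y > 0 else "right"

# Two viewers facing each other across an object at (2, 1, 0).
robot = make_pose(rot_z(0.0), [0.0, 0.0, 0.0])        # at the origin, facing +x
person = make_pose(rot_z(math.pi), [4.0, 0.0, 0.0])   # at x = 4, facing -x
print(side_from_viewpoint(robot, (2.0, 1.0, 0.0)))    # left
print(side_from_viewpoint(person, (2.0, 1.0, 0.0)))   # right
```

The same object point gets opposite left/right labels depending on whose frame it is expressed in. That difference between viewpoints is what a perspective-taking model has to learn.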

Why does it matter?

This matters because it brings us closer to having robots that can work alongside people in the real world, doing things like helping at home, navigating new places, or assisting in emergencies.

Abstract

A synthetic dataset built in NVIDIA Omniverse supports training Vision-Language Models for Visual Perspective Taking by providing supervision for spatial reasoning tasks.
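Supervised training needs each rendered scene to be paired with a target the model can learn to produce. The Python sketch below shows one hypothetical way to package such an example. It is not the dataset's published schema. It assumes the target is a 4x4 object pose serialized as text so that a Vision-Language Model can emit it. The names `VPTSample`, `target_text`, and `parse_pose`, the file path, and the prompt wording are all illustrative.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical record format: field names and the text encoding of the pose are
# illustrative assumptions, not the dataset's published schema.
Matrix4 = List[List[float]]

@dataclass
class VPTSample:
    """One supervised example: a rendered image, a question, and a ground-truth pose."""
    image_path: str
    prompt: str
    target_pose: Matrix4

    def target_text(self, decimals: int = 3) -> str:
        """Serialize the 4x4 pose row by row so a VLM can be trained to emit it as text."""
        return "\n".join(
            " ".join(f"{v:.{decimals}f}" for v in row) for row in self.target_pose
        )

def parse_pose(text: str) -> Matrix4:
    """Read a generated answer back into a 4x4 matrix, rejecting malformed output."""
    rows = [[float(v) for v in line.split()] for line in text.strip().splitlines()]
    if len(rows) != 4 or any(len(r) != 4 for r in rows):
        raise ValueError("expected a 4x4 matrix")
    return rows

sample = VPTSample(
    image_path="renders/scene_0001.png",  # hypothetical path
    prompt="What is the pose of the object relative to the camera?",
    target_pose=[[1, 0, 0, 0.5], [0, 1, 0, -0.2], [0, 0, 1, 1.0], [0, 0, 0, 1]],
)
print(sample.target_text())
```

A fixed number of decimals keeps the target strings uniform across examples. `parse_pose` lets generated answers be scored numerically against the ground-truth pose instead of by exact string match.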