Towards Holistic Evaluation of Large Audio-Language Models: A Comprehensive Survey

Chih-Kai Yang, Neo S. Ho, Hung-yi Lee

2025-05-27

Summary

This paper talks about a survey that aims to create a complete and organized way to judge how well large audio-language models perform in different areas, like understanding sounds, reasoning, having conversations, and being fair.

What's the problem?

The problem is that current methods for testing these audio-language models are scattered and incomplete, making it hard to compare models or know which ones are actually good at handling real-world audio and language tasks.

What's the solution?

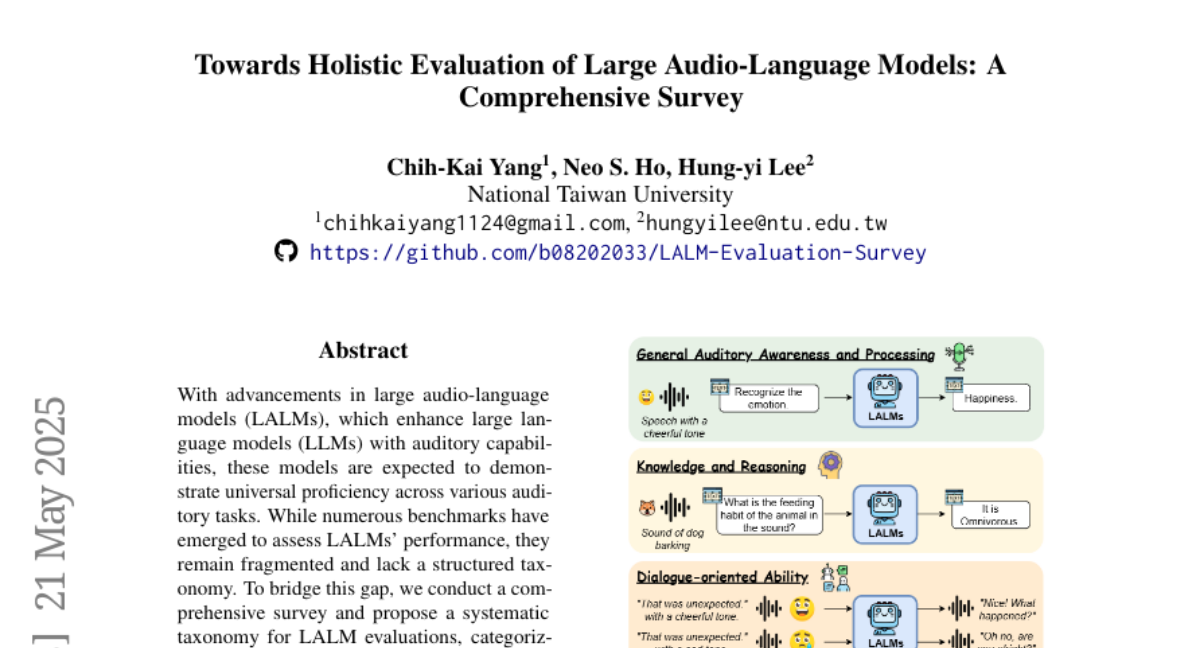

The authors suggest a new, systematic way to evaluate these models by breaking down their abilities into clear categories, such as how well they understand sounds, how they reason with information, how they handle conversations, and if they treat all users fairly. This approach helps bring together all the different ways of testing into one organized system.

Why it matters?

This is important because it helps researchers and developers know exactly how strong or weak a model is in each area, leading to better, more reliable, and fairer audio-language AI systems for everyone.

Abstract

A survey proposes a systematic taxonomy for evaluating large audio-language models across dimensions including auditory awareness, knowledge reasoning, dialogue ability, and fairness, to address fragmented benchmarks in the field.