Towards Learning to Complete Anything in Lidar

Ayca Takmaz, Cristiano Saltori, Neehar Peri, Tim Meinhardt, Riccardo de Lutio, Laura Leal-Taixé, Aljoša Ošep

2025-04-17

Summary

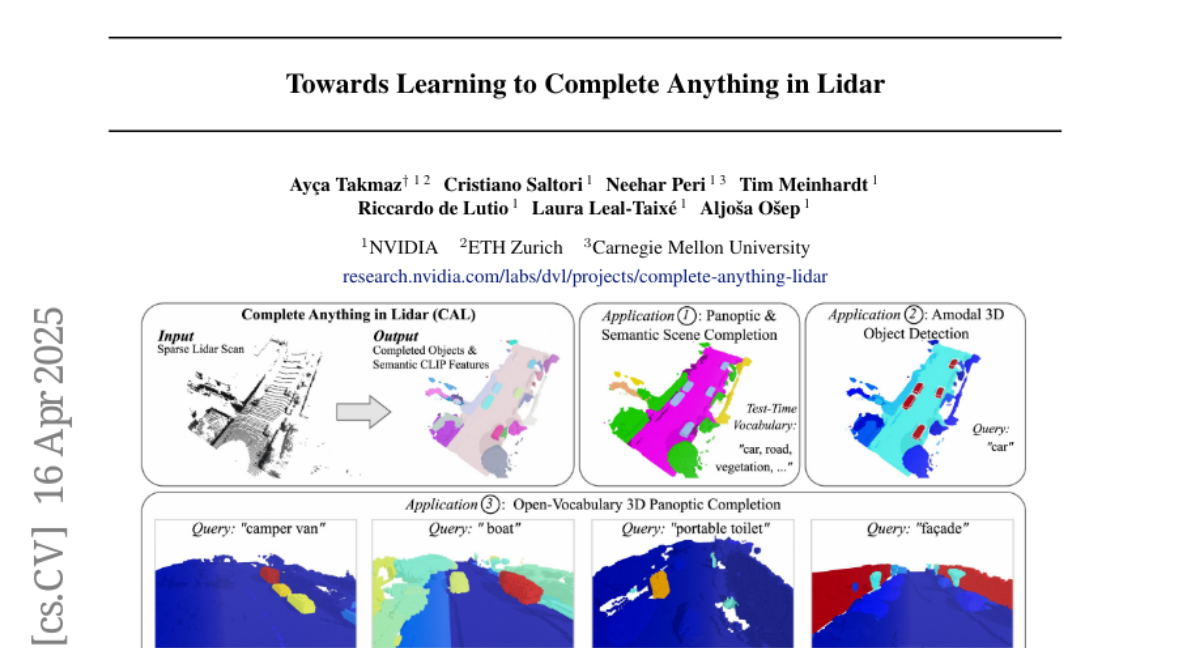

This paper introduces CAL, an approach that uses sequences of data from multiple sensor types to fill in the missing parts of shapes in Lidar data and recognize what they are, even when the objects do not belong to a fixed list of known categories.

What's the problem?

Most current AI models for Lidar data can only complete and recognize shapes from the object categories they were trained on, such as cars or trees. This limits their usefulness in real-world situations, where they may encounter new or unusual objects.

What's the solution?

The researchers developed CAL, which uses sequences from multiple sensors, not just Lidar, to help the AI complete missing shapes and identify objects it has never seen before (a zero-shot setting). As a result, the system is not restricted to a fixed class vocabulary and does not need to be trained on every possible object type.

Why does it matter?

This matters because it could make technologies like self-driving cars, robots, and drones more flexible and reliable. They could better understand and react to their surroundings, even when they come across something unexpected.

Abstract

CAL uses multi-modal sensor sequences for zero-shot Lidar-based shape completion and recognition, extending beyond fixed class vocabularies.