Towards Understanding Camera Motions in Any Video

Zhiqiu Lin, Siyuan Cen, Daniel Jiang, Jay Karhade, Hewei Wang, Chancharik Mitra, Tiffany Ling, Yuhan Huang, Sifan Liu, Mingyu Chen, Rushikesh Zawar, Xue Bai, Yilun Du, Chuang Gan, Deva Ramanan

2025-04-28

Summary

This paper introduces CameraBench, a large-scale dataset and benchmark that helps researchers measure and improve how well AI systems understand camera motion in any kind of video.

What's the problem?

Most AI models struggle to determine exactly how a camera moves while filming a scene. Doing this well requires understanding both what is in the scene (the semantics) and the actual motion (the geometry), which matters for applications such as robotics, filmmaking, and virtual reality. There hasn't been a good way to test or compare how well different models do this.

What's the solution?

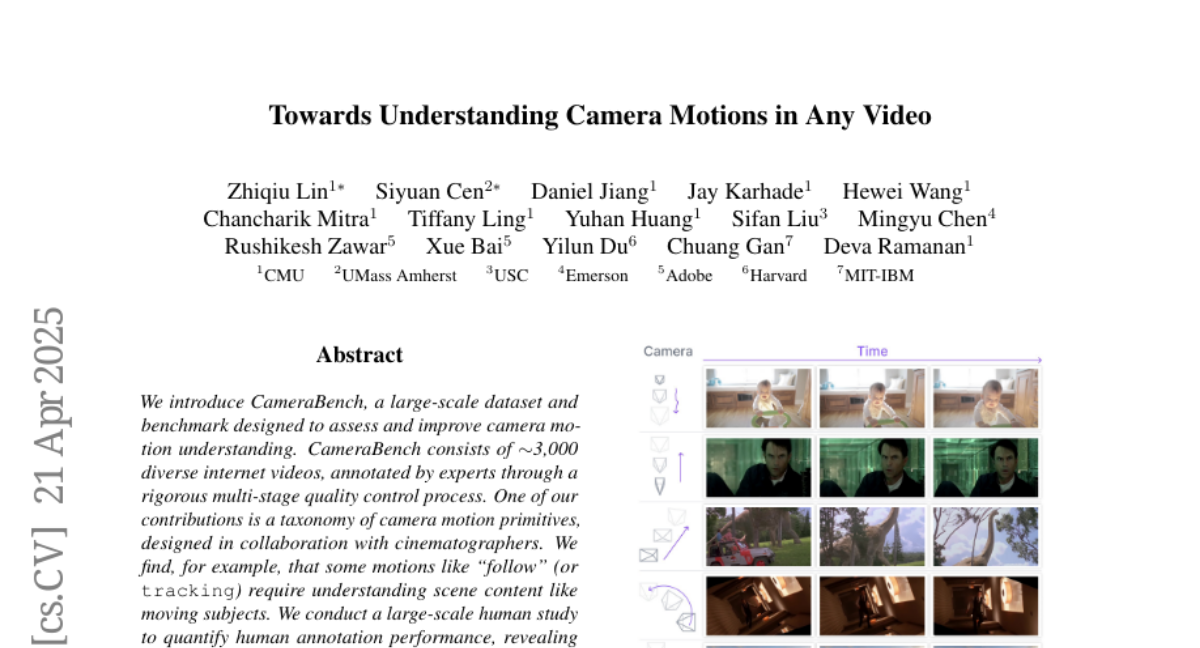

The researchers created a large-scale collection of videos, along with a detailed taxonomy for describing and categorizing different types of camera motion. They also developed a benchmark that lets people test and compare how well AI models understand these motions, making it easier to see where models are strong and where they need improvement.

Why does it matter?

A better understanding of camera motion supports more capable robots, more realistic virtual worlds, and better video editing tools, and it advances research in computer vision and artificial intelligence.

Abstract

CameraBench assesses and enhances camera motion understanding by providing a large-scale dataset, taxonomy, and benchmark for evaluating Structure-from-Motion (SfM) methods and Video-Language Models (VLMs), emphasizing the need for both semantic and geometric information.