TPDiff: Temporal Pyramid Video Diffusion Model

Lingmin Ran, Mike Zheng Shou

2025-03-13

Summary

This paper introduces TPDiff, a framework that makes AI video generation faster and cheaper by building videos in stages: it starts from noisy, low-frame-rate versions and gradually raises the frame rate until the final smooth video is ready.

What's the problem?

Creating AI videos takes a lot of computing power because the model processes every frame at the full frame rate from the very first step, even though the early, noisy versions carry little detail and neighboring frames are largely redundant.

What's the solution?

TPDiff breaks the video-making process into stages, using fewer frames at first to save computation and running at the full frame rate only in the last stage, like sketching a cartoon with rough shapes before filling in the final lines and colors.

Why does it matter?

This makes AI video tools more accessible for creators: the authors report a 50% reduction in training cost and a 1.5x improvement in inference efficiency, which lowers the hardware needed to make movies, ads, or social media content.

Abstract

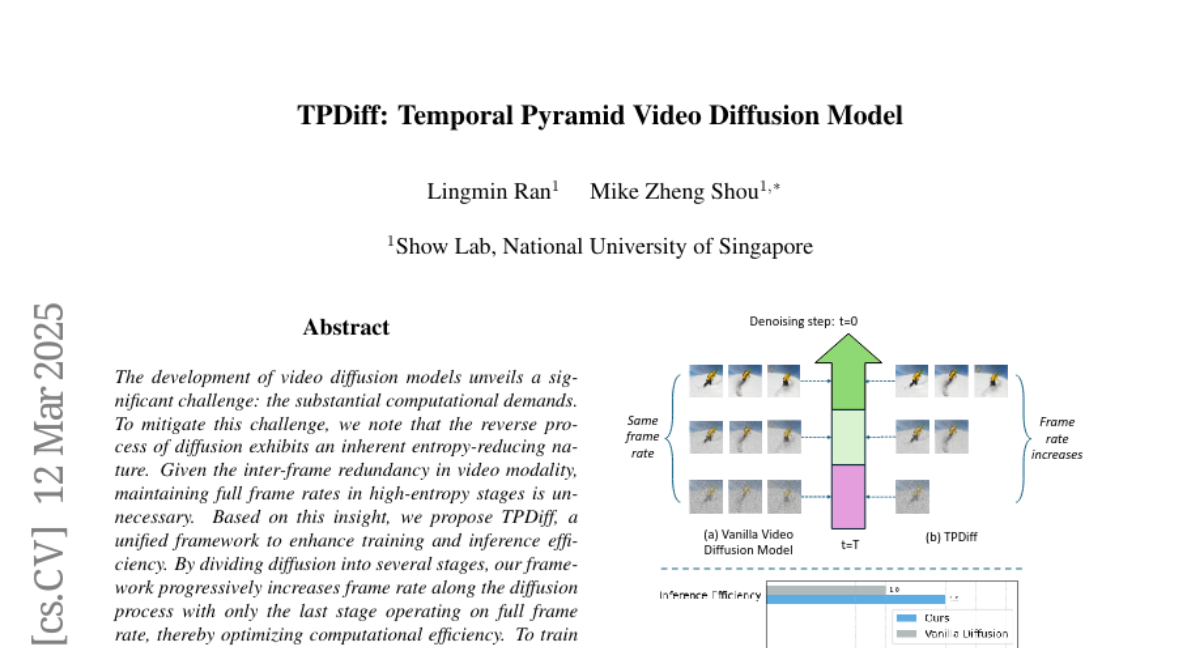

The development of video diffusion models unveils a significant challenge: the substantial computational demands. To mitigate this challenge, we note that the reverse process of diffusion exhibits an inherent entropy-reducing nature. Given the inter-frame redundancy in the video modality, maintaining full frame rates in high-entropy stages is unnecessary. Based on this insight, we propose TPDiff, a unified framework to enhance training and inference efficiency. By dividing diffusion into several stages, our framework progressively increases frame rate along the diffusion process with only the last stage operating on full frame rate, thereby optimizing computational efficiency. To train the multi-stage diffusion model, we introduce a dedicated training framework: stage-wise diffusion. By solving the partitioned probability flow ordinary differential equations (ODE) of diffusion under aligned data and noise, our training strategy is applicable to various diffusion forms and further enhances training efficiency. Comprehensive experimental evaluations validate the generality of our method, demonstrating a 50% reduction in training cost and a 1.5x improvement in inference efficiency.
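To make the staged frame-rate idea concrete, here is a minimal sketch of a temporal pyramid schedule and a rough cost estimate. Everything specific in it is an assumption for illustration, not something the abstract states: the function names `frames_per_stage` and `relative_cost` are hypothetical, the frame rate is assumed to double between stages, every stage is assumed to run the same number of denoising steps, and per-step cost is assumed to grow linearly with the number of frames.

```python
def frames_per_stage(full_frames: int, num_stages: int) -> list[int]:
    """Frame count per stage, from the first (noisiest) to the last stage.

    Assumption: the frame rate doubles between consecutive stages, so only
    the last stage operates on all `full_frames` frames.
    """
    if num_stages < 1:
        raise ValueError("num_stages must be at least 1")
    divisor = 2 ** (num_stages - 1)
    if full_frames % divisor != 0:
        raise ValueError("full_frames must be divisible by 2 ** (num_stages - 1)")
    return [full_frames // 2 ** (num_stages - 1 - k) for k in range(num_stages)]


def relative_cost(full_frames: int, num_stages: int) -> float:
    """Cost of the staged schedule relative to running every stage at full frame rate.

    Assumptions: equal number of denoising steps per stage, and per-step
    cost proportional to the number of frames processed.
    """
    schedule = frames_per_stage(full_frames, num_stages)
    return sum(schedule) / (num_stages * full_frames)


if __name__ == "__main__":
    # Hypothetical setting: a 16-frame clip split into 3 stages.
    print(frames_per_stage(16, 3))
    print(round(relative_cost(16, 3), 3))
```

Under these toy assumptions, a 16-frame clip with three stages processes 4, 8, and 16 frames, for about 58% of the full-frame-rate cost. Because attention cost typically grows faster than linearly with sequence length, actual savings can differ from this estimate; the reported 50% and 1.5x figures come from the paper's experiments, not from this calculation.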