Tracktention: Leveraging Point Tracking to Attend Videos Faster and Better

Zihang Lai, Andrea Vedaldi

2025-03-28

Summary

This paper introduces the Tracktention Layer, a network component that uses point tracks (sequences of corresponding points across frames) to make AI video predictions more consistent over time.

What's the problem?

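Video models need outputs that stay coherent from frame to frame. Common tools such as temporal attention and 3D convolution can struggle when objects move a lot, and they may miss long-range dependencies across frames.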

What's the solution?

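The researchers created a new layer for AI models, the Tracktention Layer, that follows specific points through the video and uses those point tracks to keep features aligned over time. It can be added to existing models, such as Vision Transformers, with minimal modification.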

Why does it matter?

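This work matters because it can turn image-only models into video models with better temporal consistency, as the authors show on video depth prediction and video colorization. More accurate video understanding is important for applications like self-driving cars and video editing.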

Abstract

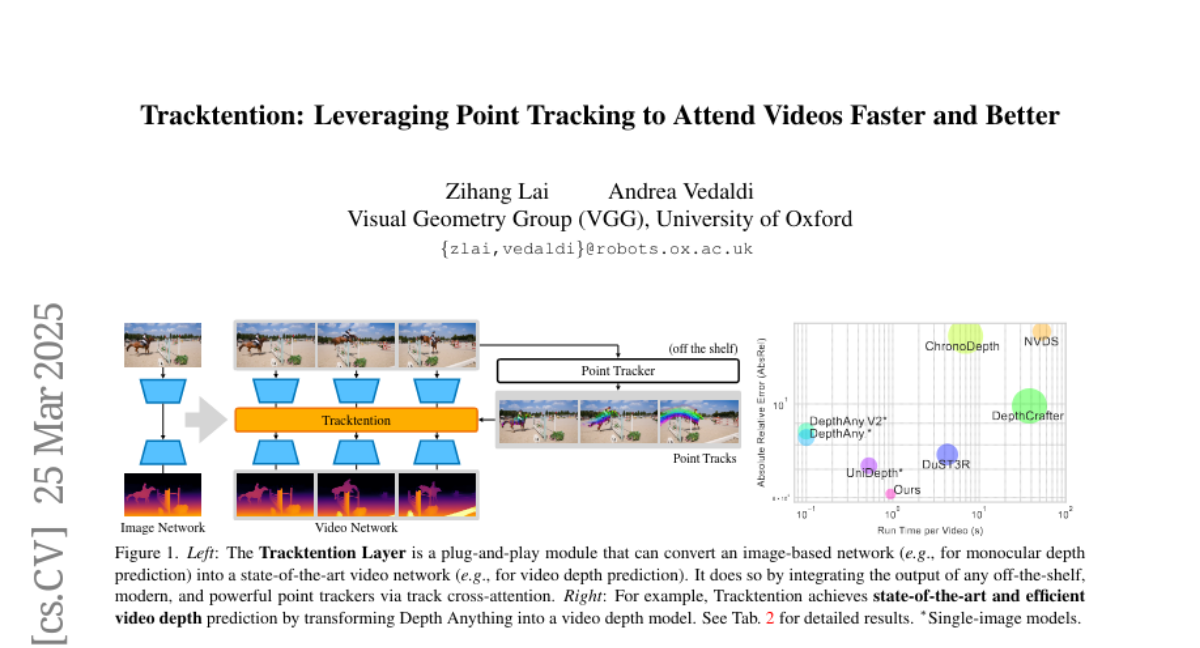

Temporal consistency is critical in video prediction to ensure that outputs are coherent and free of artifacts. Traditional methods, such as temporal attention and 3D convolution, may struggle with significant object motion and may not capture long-range temporal dependencies in dynamic scenes. To address this gap, we propose the Tracktention Layer, a novel architectural component that explicitly integrates motion information using point tracks, i.e., sequences of corresponding points across frames. By incorporating these motion cues, the Tracktention Layer enhances temporal alignment and effectively handles complex object motions, maintaining consistent feature representations over time. Our approach is computationally efficient and can be seamlessly integrated into existing models, such as Vision Transformers, with minimal modification. It can be used to upgrade image-only models to state-of-the-art video ones, sometimes outperforming models natively designed for video prediction. We demonstrate this on video depth prediction and video colorization, where models augmented with the Tracktention Layer exhibit significantly improved temporal consistency compared to baselines.
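To make the idea of attending along point tracks concrete, here is a minimal NumPy sketch. It is not the authors' implementation: the abstract does not specify how features are read from or written back to the frames, so this sketch assumes nearest-pixel gather and overwrite, a single attention head, and no learned projections. The function name `track_temporal_attention` and the tensor layouts are hypothetical choices made for illustration.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def track_temporal_attention(feats, tracks):
    """Mix features along point tracks (illustrative sketch only).

    feats:  (T, H, W, C) per-frame feature maps.
    tracks: (T, N, 2) integer (x, y) pixel position of N points in each frame.
    Returns a copy of feats whose on-track features have been replaced by
    an attention-weighted average over the same track in all frames.
    """
    T, H, W, C = feats.shape
    xs = np.clip(tracks[..., 0], 0, W - 1)
    ys = np.clip(tracks[..., 1], 0, H - 1)
    t_idx = np.arange(T)[:, None]              # (T, 1), broadcasts against (T, N)

    # 1. Gather one token per (frame, track) at the tracked location.
    tokens = feats[t_idx, ys, xs]              # (T, N, C)

    # 2. Self-attention over time, independently for each track.
    q = tokens.transpose(1, 0, 2)              # (N, T, C)
    scores = q @ q.transpose(0, 2, 1) / np.sqrt(C)   # (N, T, T)
    mixed = (softmax(scores, axis=-1) @ q).transpose(1, 0, 2)  # (T, N, C)

    # 3. Write the mixed tokens back at the tracked locations
    #    (assumption: plain overwrite; if tracks collide, one write wins).
    out = feats.copy()
    out[t_idx, ys, xs] = mixed
    return out

# Usage: two points tracked through four 8x8 frames with 16 channels.
rng = np.random.default_rng(0)
feats = rng.normal(size=(4, 8, 8, 16))
tracks = np.stack([
    np.array([[1 + t, 5], [2, 2 + t]])         # (x, y) of each point at frame t
    for t in range(4)
])                                             # (4, 2, 2)
out = track_temporal_attention(feats, tracks)
```

Because attention is computed between tokens that follow the same physical point, a moving object is compared with itself across frames rather than with whatever happens to occupy the same pixel, which is the intuition behind using tracks for temporal alignment.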