Training-free Diffusion Acceleration with Bottleneck Sampling

Ye Tian, Xin Xia, Yuxi Ren, Shanchuan Lin, Xing Wang, Xuefeng Xiao, Yunhai Tong, Ling Yang, Bin Cui

2025-03-25

Summary

This paper is about making AI image and video generators faster without sacrificing quality or needing to retrain the AI.

What's the problem?

AI image and video generators can take a long time to create content because the amount of computation grows steeply with resolution, so high-resolution images and videos are especially slow to produce.

What's the solution?

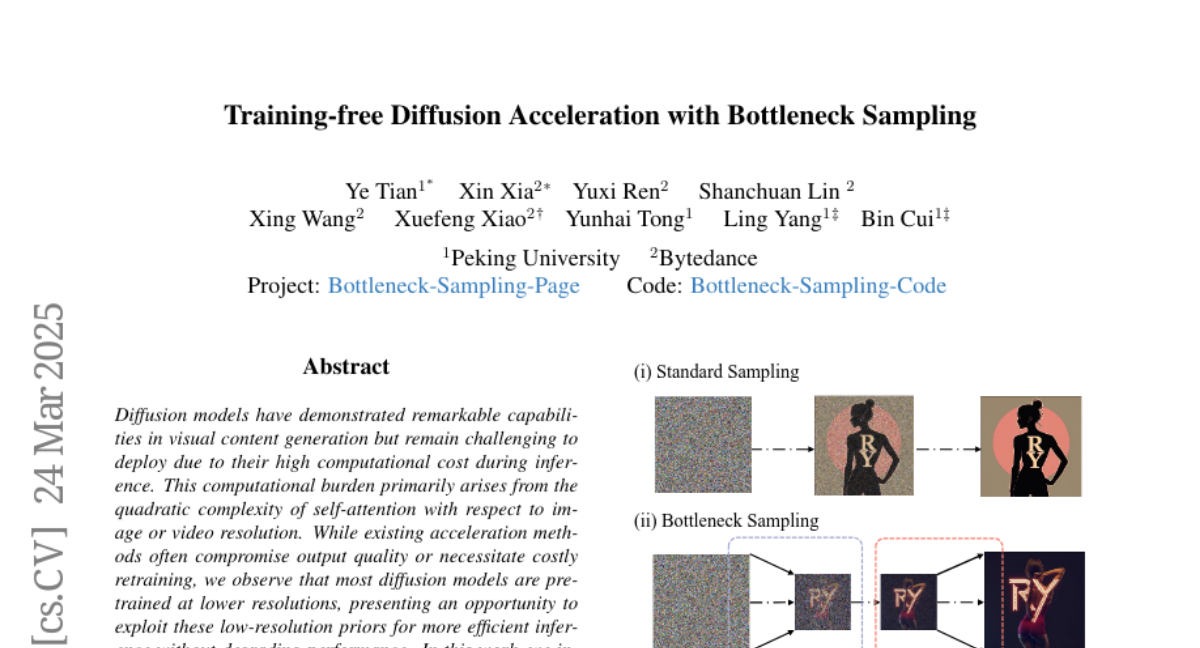

The researchers developed a new technique called Bottleneck Sampling that speeds up the process by doing most of the calculations at a lower resolution and only using the full resolution at the beginning and end.

Why does it matter?

This work matters because it can make AI image and video generators more practical and accessible by reducing the time it takes to create content.

Abstract

Diffusion models have demonstrated remarkable capabilities in visual content generation but remain challenging to deploy due to their high computational cost during inference. This computational burden primarily arises from the quadratic complexity of self-attention with respect to image or video resolution. While existing acceleration methods often compromise output quality or necessitate costly retraining, we observe that most diffusion models are pre-trained at lower resolutions, presenting an opportunity to exploit these low-resolution priors for more efficient inference without degrading performance. In this work, we introduce Bottleneck Sampling, a training-free framework that leverages low-resolution priors to reduce computational overhead while preserving output fidelity. Bottleneck Sampling follows a high-low-high denoising workflow: it performs high-resolution denoising in the initial and final stages while operating at lower resolutions in intermediate steps. To mitigate aliasing and blurring artifacts, we further refine the resolution transition points and adaptively shift the denoising timesteps at each stage. We evaluate Bottleneck Sampling on both image and video generation tasks, where extensive experiments demonstrate that it accelerates inference by up to 3× for image generation and 2.5× for video generation, all while maintaining output quality comparable to the standard full-resolution sampling process across multiple evaluation metrics. Code is available at: https://github.com/tyfeld/Bottleneck-Sampling
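
To make the high-low-high idea concrete, the following is a minimal back-of-the-envelope sketch in Python, not the authors' implementation. It assumes that self-attention cost grows with the square of the token count, that tokens come from 16-pixel patches, and that the step counts and the 0.5 downscale factor are hypothetical values chosen only for illustration. It counts attention cost alone, so the ratio it produces is not one of the speedups reported in the abstract.

```python
def attention_cost(height, width, patch=16):
    """Relative self-attention cost, assumed quadratic in the token count.

    `patch` is a hypothetical patch size, not a value taken from the paper.
    """
    tokens = (height // patch) * (width // patch)
    return tokens ** 2


def schedule_cost(stages, height, width):
    """Sum the relative attention cost over a list of (scale, steps) stages."""
    total = 0
    for scale, steps in stages:
        total += steps * attention_cost(int(height * scale), int(width * scale))
    return total


# Hypothetical schedules for a 1024x1024 image with 20 denoising steps.
baseline = [(1.0, 20)]                        # full resolution throughout
bottleneck = [(1.0, 4), (0.5, 12), (1.0, 4)]  # high -> low -> high

base = schedule_cost(baseline, 1024, 1024)
fast = schedule_cost(bottleneck, 1024, 1024)
speedup = base / fast

print(f"relative attention-cost ratio: {speedup:.2f}")
```

Under these assumptions, halving the side length cuts the token count by 4 and the attention cost by 16, so the low-resolution steps become almost free and the remaining cost is dominated by the full-resolution steps at the start and end.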