TULIP: Towards Unified Language-Image Pretraining

Zineng Tang, Long Lian, Seun Eisape, XuDong Wang, Roei Herzig, Adam Yala, Alane Suhr, Trevor Darrell, David M. Chan

2025-03-20

Summary

This paper introduces TULIP, an open-source image-text pretraining model designed as a drop-in replacement for CLIP-like models. It aims to keep images and language aligned while also handling tasks that require fine-grained image understanding.

What's the problem?

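Image-text contrastive models such as CLIP and SigLIP tend to prioritize high-level semantics when aligning images with language, so they struggle with vision-centric tasks like counting objects, estimating depth, or recognizing fine details. Vision-focused models handle those details well but struggle to understand language, which limits their use in language-driven tasks.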

What's the solution?

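The researchers developed TULIP, which combines three techniques: generative data augmentation, contrastive learning between image-image and text-text pairs in addition to image-text pairs, and image/text reconstruction regularization. Together these let the model learn fine-grained visual features while preserving global language alignment.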

Why it matters?

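The abstract reports a new state-of-the-art zero-shot result on ImageNet-1K, up to a 2× improvement over SigLIP on RxRx1 in few-shot linear probing, and over 3× higher scores than SigLIP on MMVP when used to improve vision-language models. Better fine-grained visual understanding of this kind can lead to AI systems that are useful for applications like image recognition, robotics, and self-driving cars.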

Abstract

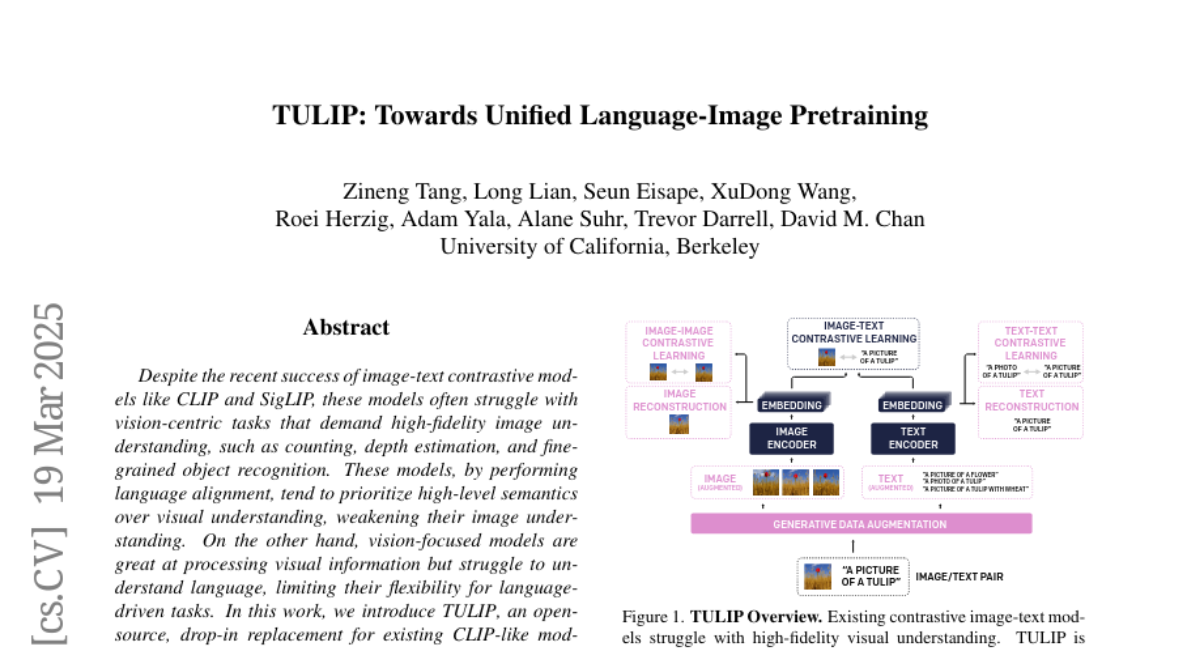

Despite the recent success of image-text contrastive models like CLIP and SigLIP, these models often struggle with vision-centric tasks that demand high-fidelity image understanding, such as counting, depth estimation, and fine-grained object recognition. These models, by performing language alignment, tend to prioritize high-level semantics over visual understanding, weakening their image understanding. On the other hand, vision-focused models are great at processing visual information but struggle to understand language, limiting their flexibility for language-driven tasks. In this work, we introduce TULIP, an open-source, drop-in replacement for existing CLIP-like models. Our method leverages generative data augmentation, enhanced image-image and text-text contrastive learning, and image/text reconstruction regularization to learn fine-grained visual features while preserving global semantic alignment. Our approach, scaling to over 1B parameters, outperforms existing state-of-the-art (SOTA) models across multiple benchmarks, establishing a new SOTA zero-shot performance on ImageNet-1K, delivering up to a 2× enhancement over SigLIP on RxRx1 in linear probing for few-shot classification, and improving vision-language models, achieving over 3× higher scores than SigLIP on MMVP. Our code/checkpoints are available at https://tulip-berkeley.github.io
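
To make the combination of objectives named in the abstract more concrete, here is a minimal sketch of how image-text, image-image, and text-text contrastive terms might be summed with a reconstruction regularizer. It is not the authors' implementation: the abstract does not give the loss formulas, so a generic softmax InfoNCE loss is swapped in as a stand-in, and every name here (`info_nce`, `combined_loss`, `w_recon`, the temperature value) is a hypothetical chosen for illustration. The augmented views `img_aug` and `txt_aug` stand for the outputs of the generative data augmentation, which is not modeled.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def normalize(v):
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]


def info_nce(queries, keys, temperature=0.1):
    """Generic InfoNCE: queries[i] should match keys[i] and no other key.

    Stand-in contrastive loss (assumption), not TULIP's actual objective.
    """
    q = [normalize(x) for x in queries]
    k = [normalize(x) for x in keys]
    total = 0.0
    for i, qi in enumerate(q):
        logits = [dot(qi, kj) / temperature for kj in k]
        m = max(logits)  # subtract the max for a stable log-sum-exp
        log_z = m + math.log(sum(math.exp(l - m) for l in logits))
        total += log_z - logits[i]
    return total / len(q)


def mse(pred, target):
    """Mean squared error over a batch of equal-length vectors."""
    diffs = [(p - t) ** 2
             for pr, tr in zip(pred, target)
             for p, t in zip(pr, tr)]
    return sum(diffs) / len(diffs)


def combined_loss(img, txt, img_aug, txt_aug, recon, recon_target,
                  w_recon=0.1):
    """Sum of the loss families listed in the abstract.

    The equal weighting of contrastive terms and w_recon are hypothetical.
    """
    l_it = 0.5 * (info_nce(img, txt) + info_nce(txt, img))  # image-text
    l_ii = info_nce(img, img_aug)                           # image-image
    l_tt = info_nce(txt, txt_aug)                           # text-text
    l_rec = mse(recon, recon_target)                        # reconstruction
    return l_it + l_ii + l_tt + w_recon * l_rec


# Toy batch of two 2-D embeddings: aligned pairs give a near-zero loss.
img = [[1.0, 0.0], [0.0, 1.0]]
txt = [[1.0, 0.0], [0.0, 1.0]]
loss = combined_loss(img, txt, img, txt, img, img)
```

In this toy setup the image-image and text-text terms pull an embedding toward its augmented view, while the reconstruction term penalizes embeddings that lose the detail needed to rebuild the input. How TULIP actually balances these terms is not stated in the abstract.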