Two Experts Are All You Need for Steering Thinking: Reinforcing Cognitive Effort in MoE Reasoning Models Without Additional Training

Mengru Wang, Xingyu Chen, Yue Wang, Zhiwei He, Jiahao Xu, Tian Liang, Qiuzhi Liu, Yunzhi Yao, Wenxuan Wang, Ruotian Ma, Haitao Mi, Ningyu Zhang, Zhaopeng Tu, Xiaolong Li, Dong Yu

2025-05-21

Summary

This paper introduces Reinforcing Cognitive Experts (RICE), a method that helps Mixture-of-Experts AI models, which route each input through a small set of specialized sub-networks called experts, reason more accurately and efficiently without any extra training.

What's the problem?

Mixture-of-Experts models are designed to send each part of a problem to the most suitable experts, but they often don't pick the best ones for reasoning tasks, which can make their answers less accurate or their reasoning less efficient.

What's the solution?

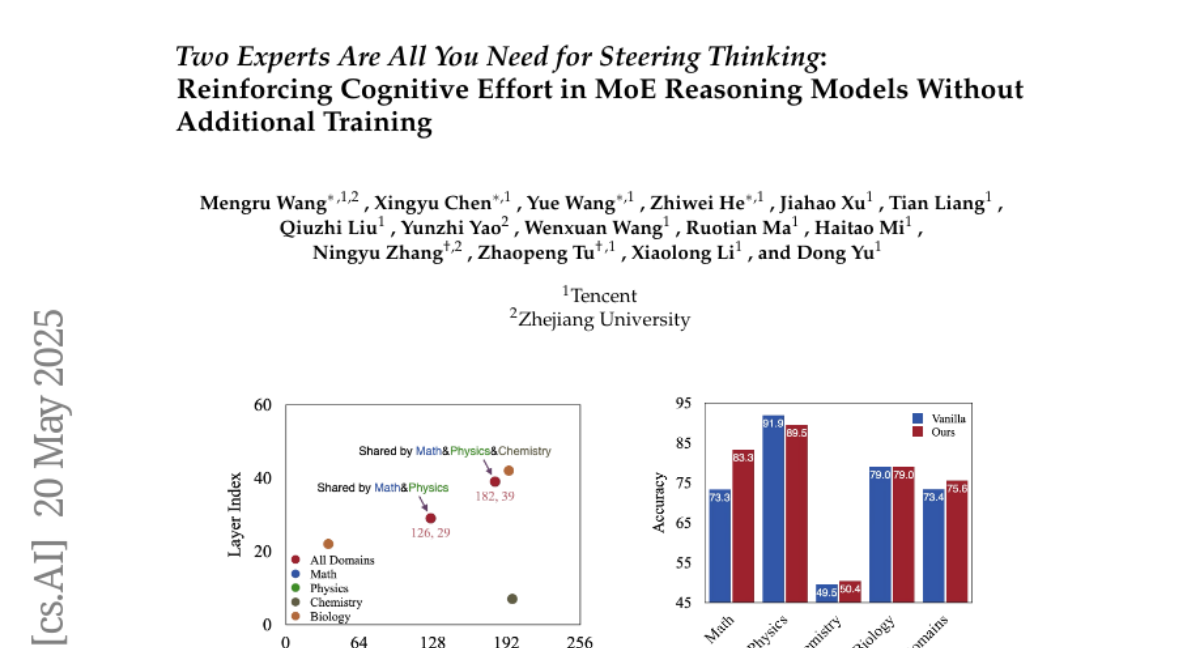

To solve this, the researchers developed RICE, which identifies the model's specialized "cognitive experts" (as the title indicates, two are enough) and reinforces them while the model reasons, improving both the quality and the efficiency of its thinking without retraining the model or relying on complicated rules.

Why does it matter?

This matters because it makes advanced AI models more accurate and efficient at solving complex problems without the cost of retraining, which is useful for applications ranging from homework help to scientific research.

Abstract

Reinforcing Cognitive Experts (RICE) improves reasoning performance and efficiency in Mixture-of-Experts architectures by identifying and utilizing specialized cognitive experts without requiring additional training or complex heuristics.
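
To make the idea of "utilizing" selected experts without training more concrete, here is a minimal sketch of how a Mixture-of-Experts router could be steered at inference time. It is an illustration under stated assumptions, not the authors' implementation: the function `route`, the `boosted` expert indices, and the `boost` multiplier are all hypothetical, and this summary does not say how RICE identifies its cognitive experts or how strongly it reinforces them.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route(router_logits, top_k, boosted=(), boost=1.0):
    """Return {expert_index: mixing_weight} for a single token.

    boosted and boost are hypothetical knobs used for illustration:
    experts listed in boosted get their routing probability multiplied
    by boost before top-k selection. No model weights are changed.
    """
    probs = softmax(router_logits)
    # Scale the routing probability of the experts we want to reinforce.
    scaled = [p * boost if i in boosted else p for i, p in enumerate(probs)]
    # Keep the top_k experts with the highest (possibly scaled) scores.
    chosen = sorted(range(len(scaled)), key=lambda i: scaled[i], reverse=True)[:top_k]
    # Renormalize so the mixing weights of the chosen experts sum to 1.
    norm = sum(scaled[i] for i in chosen)
    return {i: scaled[i] / norm for i in chosen}


# Toy router scores for four experts (made-up numbers).
logits = [2.0, 1.0, 0.5, 0.0]

baseline = route(logits, top_k=2)
# Assumption: experts 2 and 3 stand in for the two "cognitive experts".
steered = route(logits, top_k=2, boosted={2, 3}, boost=8.0)

print("baseline experts:", sorted(baseline))
print("steered experts:", sorted(steered))
```

In this toy setup the unsteered router picks the two highest-scoring experts, while the steered router is pushed toward the two reinforced ones. Because only the routing scores change at inference time, the approach needs no gradient updates, which matches the "without additional training" claim above.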