Ultra-Resolution Adaptation with Ease

Ruonan Yu, Songhua Liu, Zhenxiong Tan, Xinchao Wang

2025-03-21

Summary

This paper explores how to adapt text-to-image AI models to generate ultra-high-resolution images (2K and 4K), even when training data and computing power are limited.

What's the problem?

Training AI models to make high-resolution images is difficult because it requires a lot of data and computational resources.

What's the solution?

The researchers propose a set of guidelines, called URAE, that make the training process more efficient: use synthetic data generated by teacher models to speed up convergence, and tune only specific small parts of the model's weight matrices.

Why does it matter?

This work matters because it makes it easier to create AI models that can generate detailed, high-resolution images, even with limited resources.

Abstract

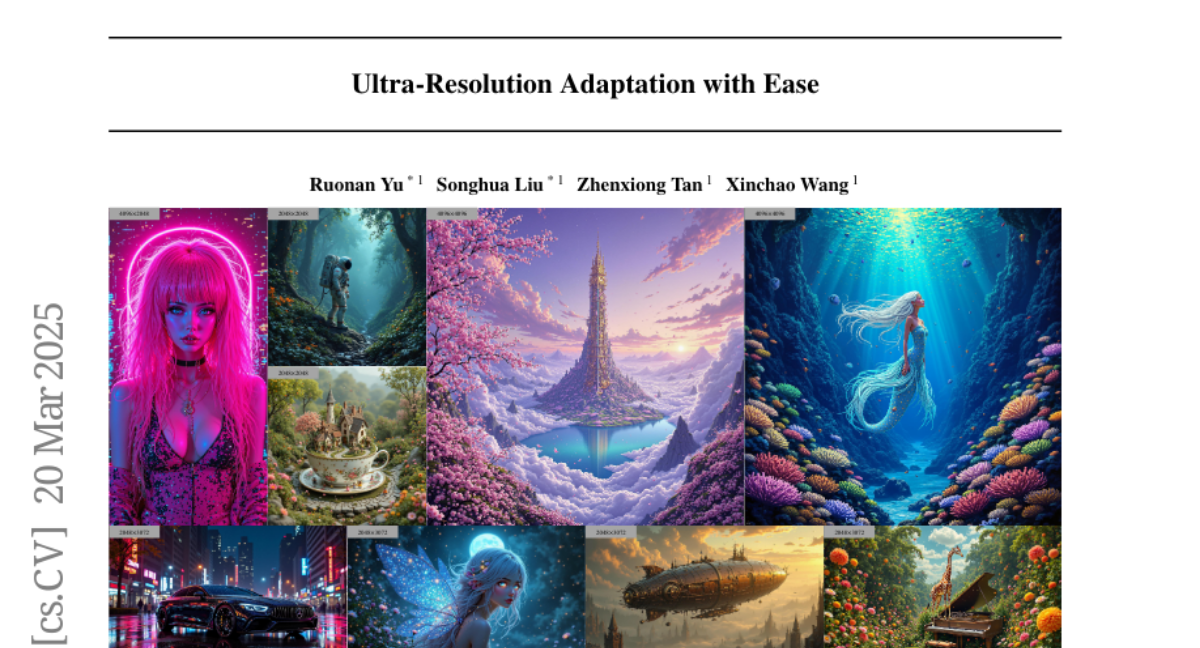

Text-to-image diffusion models have achieved remarkable progress in recent years. However, training models for high-resolution image generation remains challenging, particularly when training data and computational resources are limited. In this paper, we explore this practical problem from two key perspectives: data and parameter efficiency, and propose a set of key guidelines for ultra-resolution adaptation termed URAE. For data efficiency, we theoretically and empirically demonstrate that synthetic data generated by some teacher models can significantly promote training convergence. For parameter efficiency, we find that tuning minor components of the weight matrices outperforms widely-used low-rank adapters when synthetic data are unavailable, offering substantial performance gains while maintaining efficiency. Additionally, for models leveraging guidance distillation, such as FLUX, we show that disabling classifier-free guidance, i.e., setting the guidance scale to 1 during adaptation, is crucial for satisfactory performance. Extensive experiments validate that URAE achieves comparable 2K-generation performance to state-of-the-art closed-source models like FLUX1.1 [Pro] Ultra with only 3K samples and 2K iterations, while setting new benchmarks for 4K-resolution generation. Code is available at https://github.com/Huage001/URAE.
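To illustrate why a guidance scale of 1 amounts to disabling classifier-free guidance, here is a minimal sketch of the standard guidance mix between an unconditional and a conditional noise prediction. It is not the authors' code: the function name `cfg_combine` and the toy prediction values are hypothetical, and guidance-distilled models such as FLUX take the scale as a model input rather than running two forward passes, so this only shows the formula that the distillation is meant to imitate.

```python
def cfg_combine(eps_uncond, eps_cond, guidance_scale):
    """Standard classifier-free guidance mix (illustrative, not URAE code).

    Returns eps_uncond + guidance_scale * (eps_cond - eps_uncond),
    applied element-wise to two equally sized lists of floats.
    """
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


# Toy noise predictions (made-up values for illustration only).
eps_uncond = [0.5, -1.0, 2.0]
eps_cond = [1.0, 0.0, -2.0]

# Scale 1: the unconditional term cancels, leaving the conditional prediction.
no_guidance = cfg_combine(eps_uncond, eps_cond, 1.0)

# Scale above 1: the prediction is pushed past the conditional one.
strong_guidance = cfg_combine(eps_uncond, eps_cond, 3.5)

print(no_guidance)
print(strong_guidance)
```

With the scale set to 1 the result equals the conditional prediction, which is the sense in which guidance is "disabled" during adaptation.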