Unconditional Priors Matter! Improving Conditional Generation of Fine-Tuned Diffusion Models

Prin Phunyaphibarn, Phillip Y. Lee, Jaihoon Kim, Minhyuk Sung

2025-03-27

Summary

This paper is about improving the quality of images and videos generated by AI diffusion models by focusing on the 'unconditional' part of the process.

What's the problem?

AI models often struggle to generate high-quality images and videos because the 'unconditional' part, which is supposed to guide the generation process, isn't very good.

What's the solution?

The researchers found that simply replacing the 'unconditional' part with a better one from another AI model can significantly improve the quality of the generated images and videos.

Why it matters?

This work matters because it offers a simple way to improve the quality of AI-generated images and videos, which has implications for various applications, including art, entertainment, and design.

Abstract

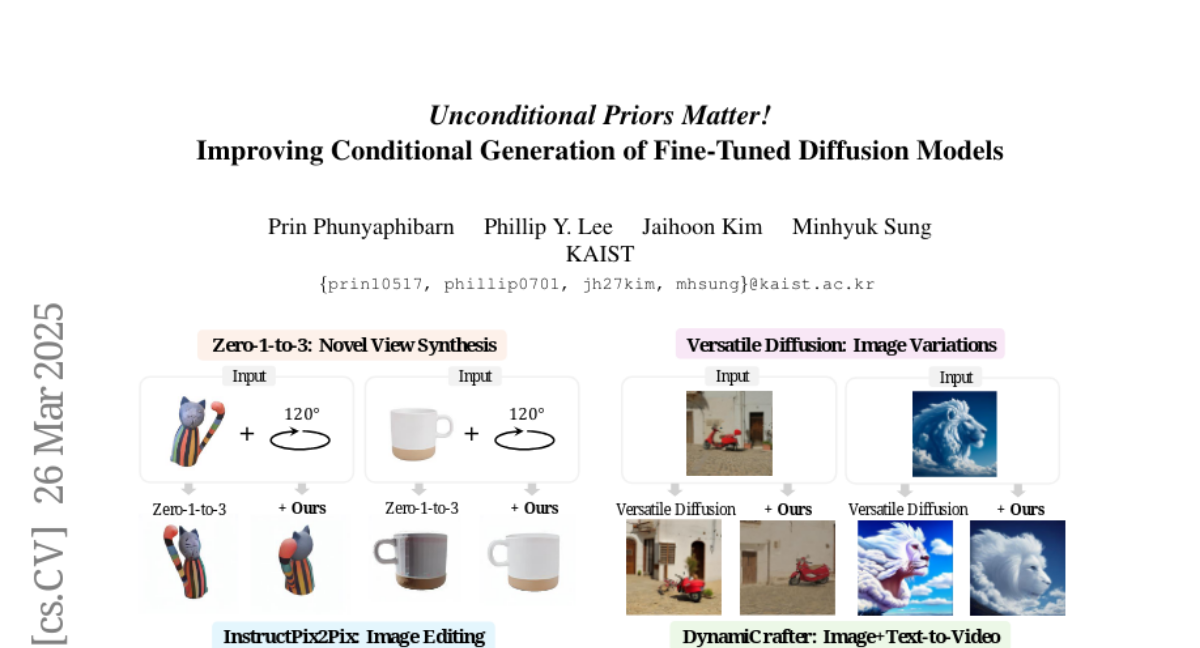

Classifier-Free Guidance (CFG) is a fundamental technique in training conditional diffusion models. The common practice for CFG-based training is to use a single network to learn both conditional and unconditional noise prediction, with a small dropout rate for conditioning. However, we observe that the joint learning of unconditional noise with limited bandwidth in training results in poor priors for the unconditional case. More importantly, these poor unconditional noise predictions become a serious reason for degrading the quality of conditional generation. Inspired by the fact that most CFG-based conditional models are trained by fine-tuning a base model with better unconditional generation, we first show that simply replacing the unconditional noise in CFG with that predicted by the base model can significantly improve conditional generation. Furthermore, we show that a diffusion model other than the one the fine-tuned model was trained on can be used for unconditional noise replacement. We experimentally verify our claim with a range of CFG-based conditional models for both image and video generation, including Zero-1-to-3, Versatile Diffusion, DiT, DynamiCrafter, and InstructPix2Pix.