Unified Multimodal Chain-of-Thought Reward Model through Reinforcement Fine-Tuning

Yibin Wang, Zhimin Li, Yuhang Zang, Chunyu Wang, Qinglin Lu, Cheng Jin, Jiaqi Wang

2025-05-07

Summary

This paper talks about a new AI model that can judge and learn from both images and text by following long chains of reasoning, making it better at understanding and rewarding complex tasks.

What's the problem?

AI often struggles to accurately evaluate tasks that involve both pictures and words, especially when the reasoning needed is complicated and involves many steps, which can lead to unreliable or less accurate results.

What's the solution?

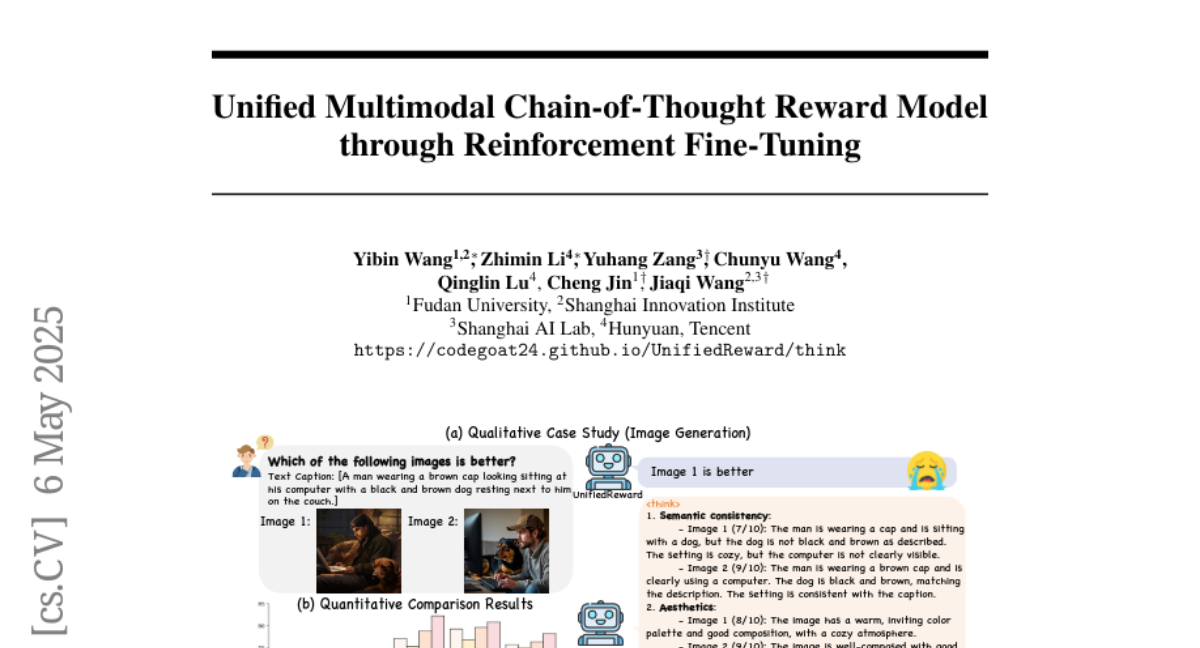

The researchers created a reward model that uses reinforcement learning to explore and learn from long, step-by-step thought processes, helping the AI become more reliable and precise when handling tasks that mix vision and language.

Why it matters?

This matters because it helps AI become smarter and more trustworthy in real-world applications, like helping with creative projects, analyzing content, or assisting in situations where both images and text need to be understood together.

Abstract

A unified multimodal reward model that incorporates long chains of thought reasoning improves reliability and accuracy in vision reward tasks through exploration-driven reinforcement learning.