UniGeo: Taming Video Diffusion for Unified Consistent Geometry Estimation

Yang-Tian Sun, Xin Yu, Zehuan Huang, Yi-Hua Huang, Yuan-Chen Guo, Ziyi Yang, Yan-Pei Cao, Xiaojuan Qi

2025-06-02

Summary

This paper introduces UniGeo, an approach that adapts video diffusion models to estimate the geometry of a scene from video, so that the estimated shapes and structures stay consistent from one frame to the next.

What's the problem?

The problem is that when AI estimates geometry from a video, meaning the overall shape and position of the objects in it, the results often fail to stay consistent from one frame to the next.

What's the solution?

The researchers build on video diffusion models, whose learned priors capture patterns across all frames of a video, so the model can estimate geometric attributes that agree throughout the clip. The model is trained jointly on attributes that are shared between frames, which keeps the estimated geometry stable and accurate over the whole video.

Why does it matter?

This is important because consistent geometry estimates lead to better reconstructions, which is useful for movies, video games, virtual reality, and any technology that relies on an accurate understanding of a scene's shape.

Abstract

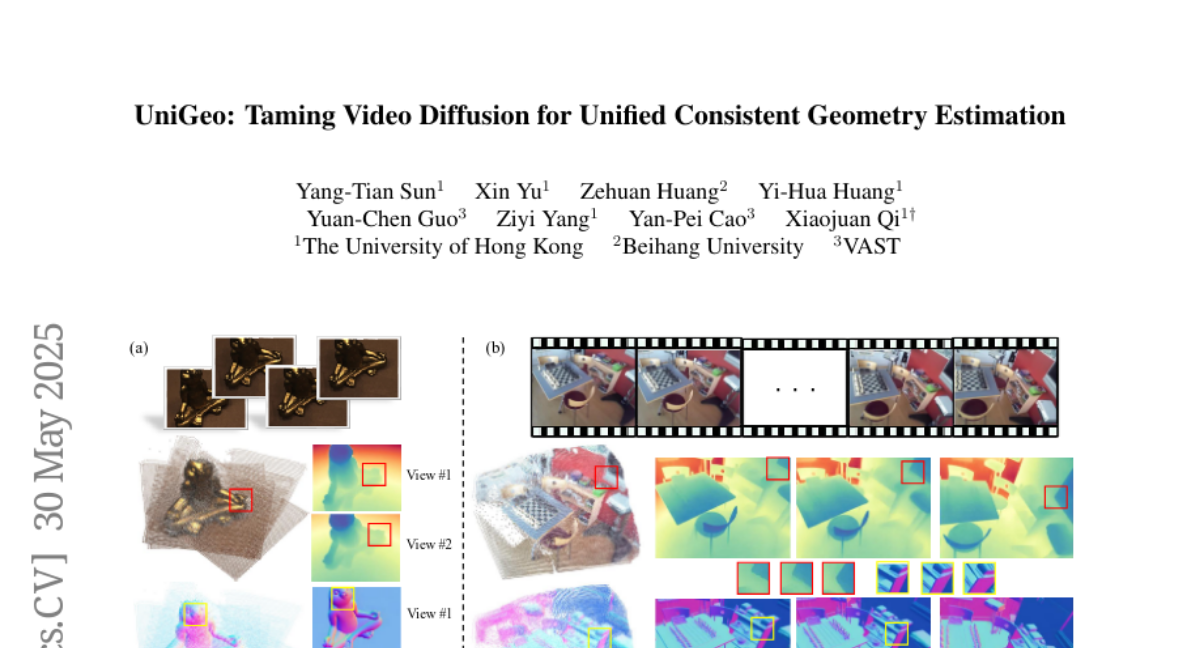

Video generation models leveraging diffusion priors achieve superior global geometric attribute estimation and reconstructions, benefiting from inter-frame consistency and joint training on shared attributes.