UniGoal: Towards Universal Zero-shot Goal-oriented Navigation

Hang Yin, Xiuwei Xu, Lingqing Zhao, Ziwei Wang, Jie Zhou, Jiwen Lu

2025-03-14

Summary

This paper presents UniGoal, a navigation framework that lets a single model reach several kinds of goals (an object category, a specific object shown in an image, or a text description) without task-specific training.

What's the problem?

Existing AI navigation systems are usually designed for specific tasks and don't work well when the goal changes. They struggle to understand different types of goals, like finding an object, matching an image, or following a text description, all within the same system.

What's the solution?

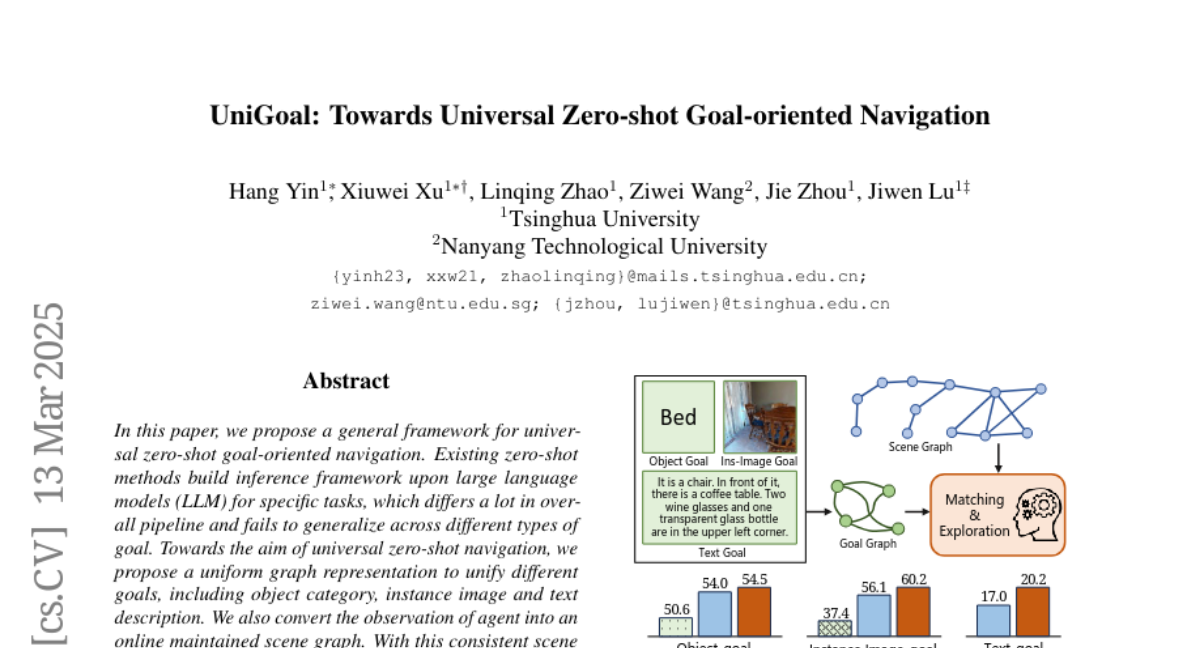

UniGoal represents every type of goal in the same way: as a graph whose nodes are objects and whose edges are the relationships between them. It also builds a scene graph of the environment and keeps it updated as the agent explores. By matching the goal graph against the scene graph, UniGoal can work out where to go next, even for goals it was never trained on.
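To make the idea of a shared graph representation concrete, here is a minimal sketch in Python. It is an illustration only, not the paper's implementation: the `Graph` class, the helper names, and the overlap-based `match_score` are all assumptions made for this example, and the paper itself relies on an LLM for graph-based reasoning rather than exact string matching.

```python
from dataclasses import dataclass, field


@dataclass
class Graph:
    """Toy graph: object names as nodes, (subject, relation, object) as edges."""
    nodes: set = field(default_factory=set)
    edges: set = field(default_factory=set)


def goal_from_category(category):
    # An object-category goal reduces to a single-node graph (assumed encoding).
    return Graph(nodes={category})


def graph_from_triples(triples):
    # Text or image goals, and the scene itself, are encoded as relation triples.
    g = Graph()
    for subj, rel, obj in triples:
        g.nodes.update((subj, obj))
        g.edges.add((subj, rel, obj))
    return g


def match_score(scene, goal):
    # Hypothetical score: fraction of goal nodes and edges found in the scene.
    total = len(goal.nodes) + len(goal.edges)
    if total == 0:
        return 0.0
    matched = len(goal.nodes & scene.nodes) + len(goal.edges & scene.edges)
    return matched / total


scene = graph_from_triples([
    ("chair", "next to", "table"),
    ("lamp", "on", "table"),
])
text_goal = graph_from_triples([("chair", "next to", "table")])
object_goal = goal_from_category("sofa")

print(match_score(scene, text_goal))    # goal fully present in the scene
print(match_score(scene, object_goal))  # goal not observed yet
```

Because all three goal types end up as the same `Graph` structure in this sketch, one scoring function can serve all of them, which is the point of a uniform representation.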

Why does it matter?

This work matters because it yields a more flexible, general-purpose navigation system that can adapt to different goals without retraining. That could be useful for robots that need to perform various tasks in new environments.

Abstract

In this paper, we propose a general framework for universal zero-shot goal-oriented navigation. Existing zero-shot methods build inference frameworks upon large language models (LLMs) for specific tasks; these frameworks differ substantially in overall pipeline and fail to generalize across different types of goals. Towards the aim of universal zero-shot navigation, we propose a uniform graph representation to unify different goals, including object category, instance image, and text description. We also convert the agent's observations into an online-maintained scene graph. With this consistent scene and goal representation, we preserve most of the structural information that pure text would lose and are able to leverage LLMs for explicit graph-based reasoning. Specifically, we conduct graph matching between the scene graph and the goal graph at each time instant and propose different strategies to generate the long-term goal of exploration according to different matching states. The agent first iteratively searches for a subgraph of the goal when zero-matched. With partial matching, the agent then utilizes coordinate projection and anchor pair alignment to infer the goal location. Finally, scene graph correction and goal verification are applied for perfect matching. We also present a blacklist mechanism to enable robust switching between stages. Extensive experiments on several benchmarks show that UniGoal achieves state-of-the-art zero-shot performance on the three studied navigation tasks with a single model, even outperforming task-specific zero-shot methods and supervised universal methods.
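
The three matching states described in the abstract can be pictured as a simple stage selector. The sketch below is one possible reading, not the authors' code: the numeric thresholds, the `select_stage` and `usable_matches` names, and the idea of storing the blacklist as a set of rejected (goal node, scene node) pairs are all assumptions introduced for illustration.

```python
from enum import Enum


class Stage(Enum):
    ZERO_MATCHING = "search for a subgraph of the goal"
    PARTIAL_MATCHING = "infer goal location via projection and anchor alignment"
    PERFECT_MATCHING = "correct the scene graph and verify the goal"


def select_stage(score, partial_at=0.3, perfect_at=0.9):
    # Thresholds are hypothetical; the abstract does not state how the
    # matching states are delimited.
    if score >= perfect_at:
        return Stage.PERFECT_MATCHING
    if score >= partial_at:
        return Stage.PARTIAL_MATCHING
    return Stage.ZERO_MATCHING


def usable_matches(matches, blacklist):
    # Assumed blacklist behaviour: matches that previously failed are skipped,
    # so the agent does not return to a stage on the same bad evidence.
    return [m for m in matches if m not in blacklist]


blacklist = {("chair", "chair_3")}  # e.g. a match rejected during verification
matches = [("chair", "chair_3"), ("table", "table_1")]

print(select_stage(0.0).name)
print(select_stage(0.5).name)
print(select_stage(0.95).name)
print(usable_matches(matches, blacklist))
```

In this reading, the matching score is recomputed at every time step, so the agent can move forward to a later stage as evidence accumulates or fall back to an earlier one when a blacklisted match is discarded.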