Unstructured Evidence Attribution for Long Context Query Focused Summarization

Dustin Wright, Zain Muhammad Mujahid, Lu Wang, Isabelle Augenstein, David Jurgens

2025-02-21

Summary

This paper is about improving how AI generates query-focused summaries of long texts by training it to cite supporting evidence from all parts of the text, not just the beginning or end.

What's the problem?

AI models often struggle to summarize long texts accurately because they tend to focus more on information at the start or end of the text, ignoring important details in the middle. This makes their summaries less reliable and harder to trust, especially when they fail to show where their information comes from.

What's the solution?

The researchers created SUnsET, a synthetic dataset built with a domain-agnostic pipeline, to train AI models to find and cite evidence from all parts of a long text. Across 5 LLMs of different sizes and 4 datasets, models trained on it produced more relevant and factually consistent evidence than their base models, and could also generate more relevant and consistent summaries. These models drew evidence from more varied locations in the text, which reduces the bias toward its beginning and end.

Why does it matter?

This matters because it makes AI-generated summaries more trustworthy and useful, especially for tasks like research or decision-making where knowing the source of information is crucial. By improving how AI handles long texts, this work helps create tools that are better at processing complex documents and providing reliable summaries.

Abstract

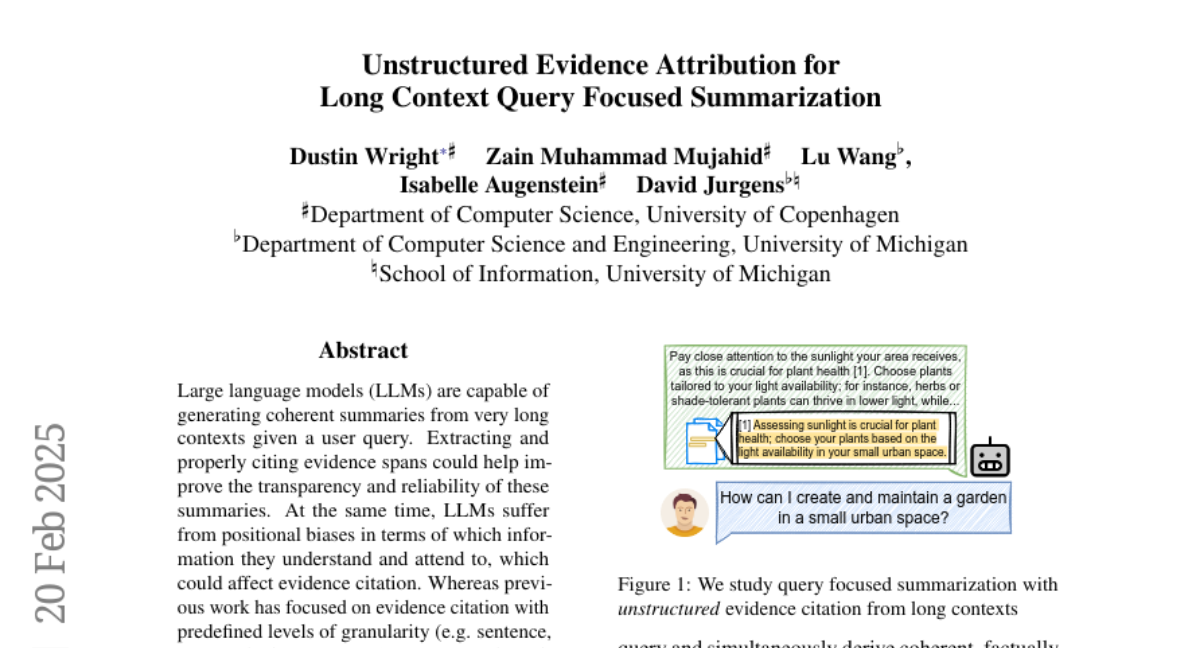

Large language models (LLMs) are capable of generating coherent summaries from very long contexts given a user query. Extracting and properly citing evidence spans could help improve the transparency and reliability of these summaries. At the same time, LLMs suffer from positional biases in terms of which information they understand and attend to, which could affect evidence citation. Whereas previous work has focused on evidence citation with predefined levels of granularity (e.g., sentence, paragraph, or document), we propose the task of long-context query-focused summarization with unstructured evidence citation. We show that existing systems struggle to generate and properly cite unstructured evidence from their context, and that evidence tends to be "lost-in-the-middle". To help mitigate this, we create the Summaries with Unstructured Evidence Text dataset (SUnsET), a synthetic dataset generated using a novel domain-agnostic pipeline which can be used as supervision to adapt LLMs to this task. We demonstrate across 5 LLMs of different sizes and 4 datasets with varying document types and lengths that LLMs adapted with SUnsET data generate more relevant and factually consistent evidence than their base models, extract evidence from more diverse locations in their context, and can generate more relevant and consistent summaries.
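The "lost-in-the-middle" finding can be made concrete by checking where cited evidence falls within the context. The following is a minimal sketch, not the authors' evaluation code. It assumes each unstructured evidence span is cited as a verbatim substring of the context, uses exact string matching, and buckets each matched span by the relative position of its midpoint. The function names `relative_position` and `position_histogram` and the default of five bins are hypothetical, illustrative choices.

```python
from typing import List, Optional, Tuple


def relative_position(context: str, evidence: str) -> Optional[float]:
    """Return the span midpoint's position in [0, 1], or None if not found.

    Assumption (hypothetical): evidence is cited verbatim, so an exact
    substring search is enough to locate it in the context.
    """
    if not evidence:
        return None
    start = context.find(evidence)
    if start == -1:
        return None
    # Use the span's midpoint, normalized by the context length
    midpoint = start + len(evidence) / 2
    return midpoint / len(context)


def position_histogram(
    context: str, evidence_spans: List[str], n_bins: int = 5
) -> Tuple[List[int], int]:
    """Count matched spans per equal-width position bin, plus unmatched spans."""
    counts = [0] * n_bins
    unmatched = 0
    for span in evidence_spans:
        pos = relative_position(context, span)
        if pos is None:
            # The span does not appear verbatim in the context
            unmatched += 1
            continue
        # Clamp so a position of exactly 1.0 falls in the last bin
        bin_index = min(int(pos * n_bins), n_bins - 1)
        counts[bin_index] += 1
    return counts, unmatched


if __name__ == "__main__":
    context = "alpha beta gamma delta epsilon"
    spans = ["alpha", "epsilon", "zeta"]
    print(position_histogram(context, spans))  # ([1, 0, 0, 0, 1], 1)
```

Aggregated over many documents, a model with a positional bias would concentrate its counts in the first and last bins. Spans that cannot be found in the context are counted as unmatched, which is one simple signal that a citation may not be grounded in the source.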