Unsupervised Word-level Quality Estimation for Machine Translation Through the Lens of Annotators (Dis)agreement

Gabriele Sarti, Vilém Zouhar, Malvina Nissim, Arianna Bisazza

2025-05-30

Summary

This paper talks about new ways to check how good each word in a machine-translated sentence is, especially by looking at how much human experts agree or disagree on what counts as a mistake.

What's the problem?

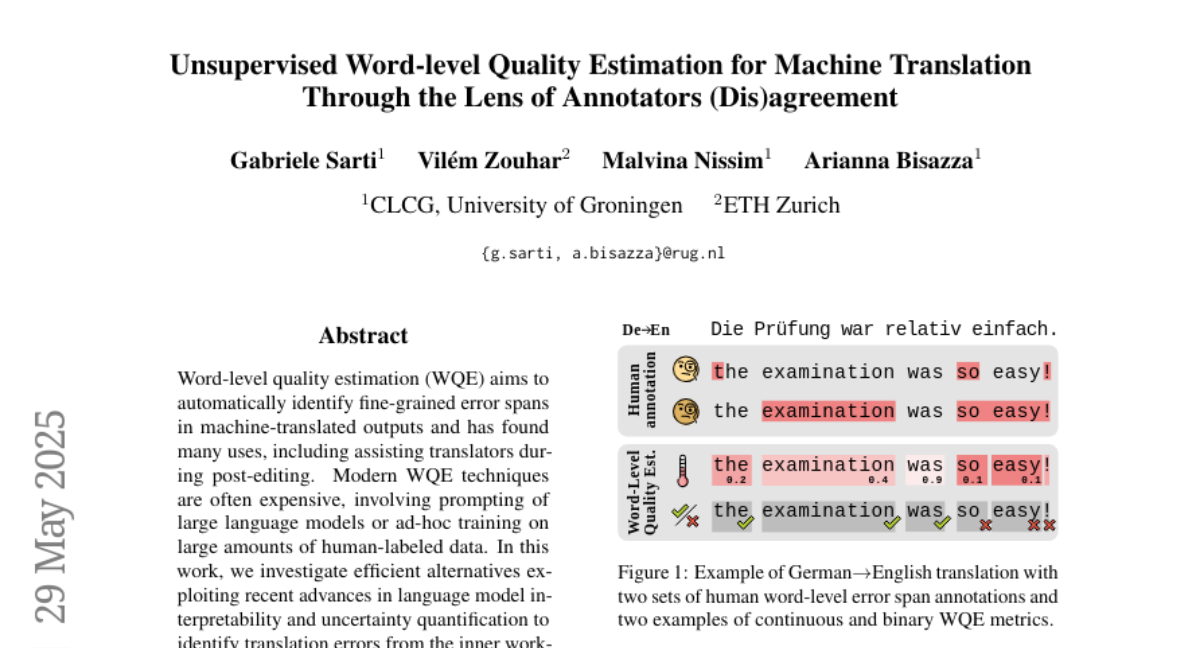

The problem is that machine translation often makes small errors at the word level, and it's hard to judge these mistakes automatically, especially since even human reviewers sometimes disagree on what is right or wrong.

What's the solution?

The researchers studied different techniques for estimating word-level translation quality, including both supervised (trained with labeled data) and unsupervised (not trained with labeled data) methods. They focused on how well these methods can spot errors, how understandable their decisions are, and how much uncertainty or disagreement there is among human judges.

Why it matters?

This is important because better ways to spot translation mistakes can make automatic translations more accurate and trustworthy, which helps people communicate better across languages and cultures.

Abstract

Evaluation of word-level quality estimation techniques leverages model interpretability and uncertainty quantification to identify translation errors with a focus on the impact of label variation and the performance of supervised versus unsupervised metrics.