Unveiling Instruction-Specific Neurons & Experts: An Analytical Framework for LLM's Instruction-Following Capabilities

Junyan Zhang, Yubo Gao, Yibo Yan, Jungang Li, Zhaorui Hou, Sicheng Tao, Shuliang Liu, Song Dai, Yonghua Hei, Junzhuo Li, Xuming Hu

2025-05-29

Summary

This paper talks about how researchers are trying to figure out which parts of a large language model are responsible for following instructions, by looking closely at the tiny pieces inside the model that get activated for certain tasks.

What's the problem?

The problem is that while large language models can follow instructions pretty well, it's not clear exactly how they do it or which parts of the model are actually making it happen. Without this understanding, it's hard to improve the models or fix them if they make mistakes.

What's the solution?

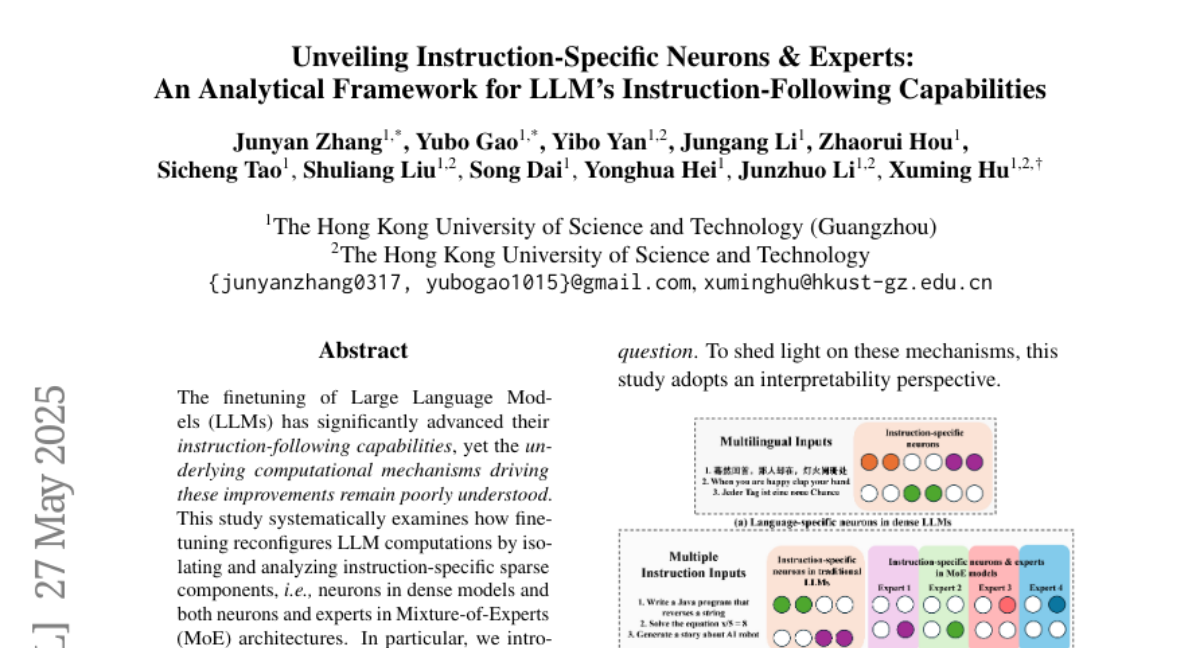

The researchers developed new tools and methods, called HexaInst and SPARCOM, that let them analyze the model in detail. They focused on finding the specific neurons and groups inside the model that are triggered by different types of instructions, helping to reveal how instruction-following really works.

Why it matters?

This is important because by understanding which parts of the model handle instructions, scientists can make these AI systems smarter, more reliable, and easier to control. It also helps with making the models safer and more transparent, so people can trust how they work.

Abstract

The study investigates the role of sparse computational components in the instruction-following capabilities of Large Language Models through systematic analysis and introduces HexaInst and SPARCOM for better understanding.