UPME: An Unsupervised Peer Review Framework for Multimodal Large Language Model Evaluation

Qihui Zhang, Munan Ning, Zheyuan Liu, Yanbo Wang, Jiayi Ye, Yue Huang, Shuo Yang, Xiao Chen, Yibing Song, Li Yuan

2025-04-01

Summary

This paper presents UPME, a framework for automatically evaluating AI models that understand both images and text, without requiring humans to write the questions and answers.

What's the problem?

Evaluating these models is hard because writing question-and-answer pairs for images takes a lot of human effort, which limits how large and varied a benchmark can be. Existing automated methods that use a model as the judge often introduce bias.

What's the solution?

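The researchers developed a system that needs only images as input: the models generate the questions themselves and then review each other's answers. A scoring system that checks answer correctness, visual understanding and reasoning, and image-text correlation is used to reduce bias in those reviews.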

Why does it matter?

This work matters because it lets evaluation scale beyond hand-built benchmarks while staying close to human judgment: the paper reports Pearson correlations of 0.944 and 0.814 with human evaluations on the MMStar and ScienceQA datasets.

Abstract

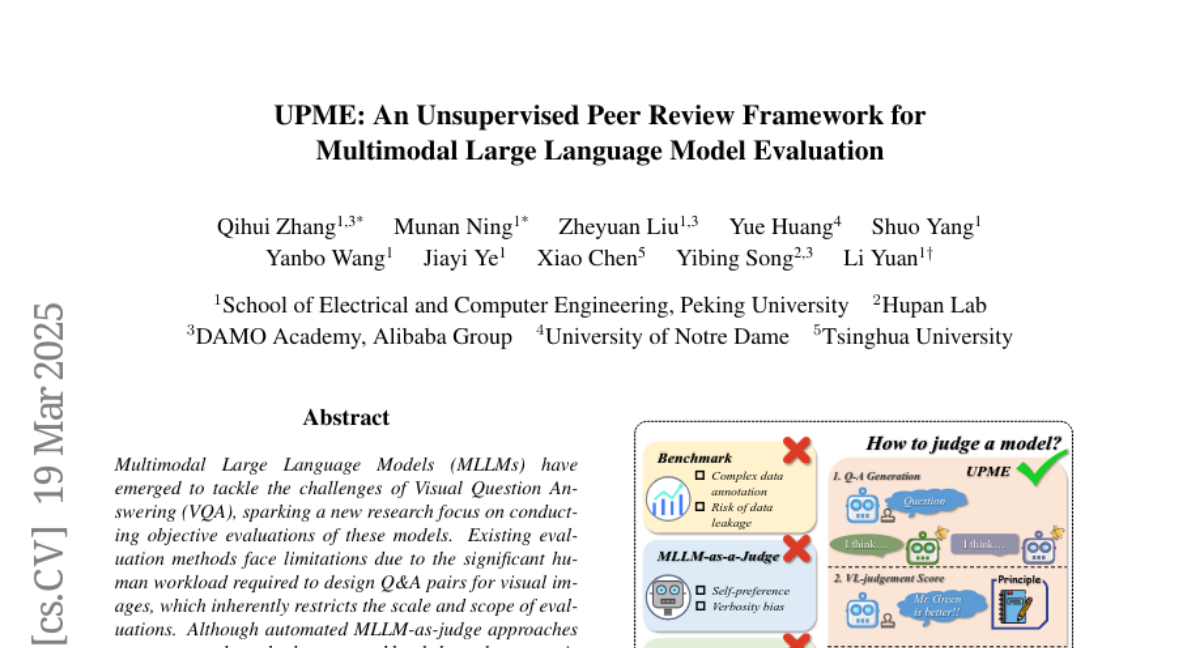

Multimodal Large Language Models (MLLMs) have emerged to tackle the challenges of Visual Question Answering (VQA), sparking a new research focus on conducting objective evaluations of these models. Existing evaluation methods face limitations due to the significant human workload required to design Q&A pairs for visual images, which inherently restricts the scale and scope of evaluations. Although automated MLLM-as-judge approaches attempt to reduce the human workload through automatic evaluations, they often introduce biases. To address these problems, we propose an Unsupervised Peer review MLLM Evaluation (UPME) framework. It utilizes only image data, allowing models to automatically generate questions and conduct peer review assessments of answers from other models, effectively alleviating the reliance on human workload. Additionally, we introduce a vision-language scoring system to mitigate the bias issues, which focuses on three aspects: (i) response correctness; (ii) visual understanding and reasoning; and (iii) image-text correlation. Experimental results demonstrate that UPME achieves a Pearson correlation of 0.944 with human evaluations on the MMStar dataset and 0.814 on the ScienceQA dataset, indicating that our framework closely aligns with human-designed benchmarks and inherent human preferences.
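
The agreement figures above are Pearson correlations between framework scores and human evaluations. As a rough illustration of what that statistic measures, the sketch below computes it for two score lists. The function name `pearson` and the per-model scores are hypothetical placeholders invented for this example; they are not data or code from the paper.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length score lists."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two lists of equal length with at least 2 items")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of products of deviations (covariance up to a constant factor).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # Sums of squared deviations (variances up to the same factor).
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical per-model scores, made up for illustration only.
framework_scores = [0.62, 0.71, 0.55, 0.80, 0.47]
human_scores = [0.60, 0.75, 0.50, 0.82, 0.45]

r = pearson(framework_scores, human_scores)
print(f"Pearson r = {r:.3f}")
```

A value near 1 means the framework orders and spaces the models much as the human evaluation does; a value near 0 means the two sets of scores are linearly unrelated.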