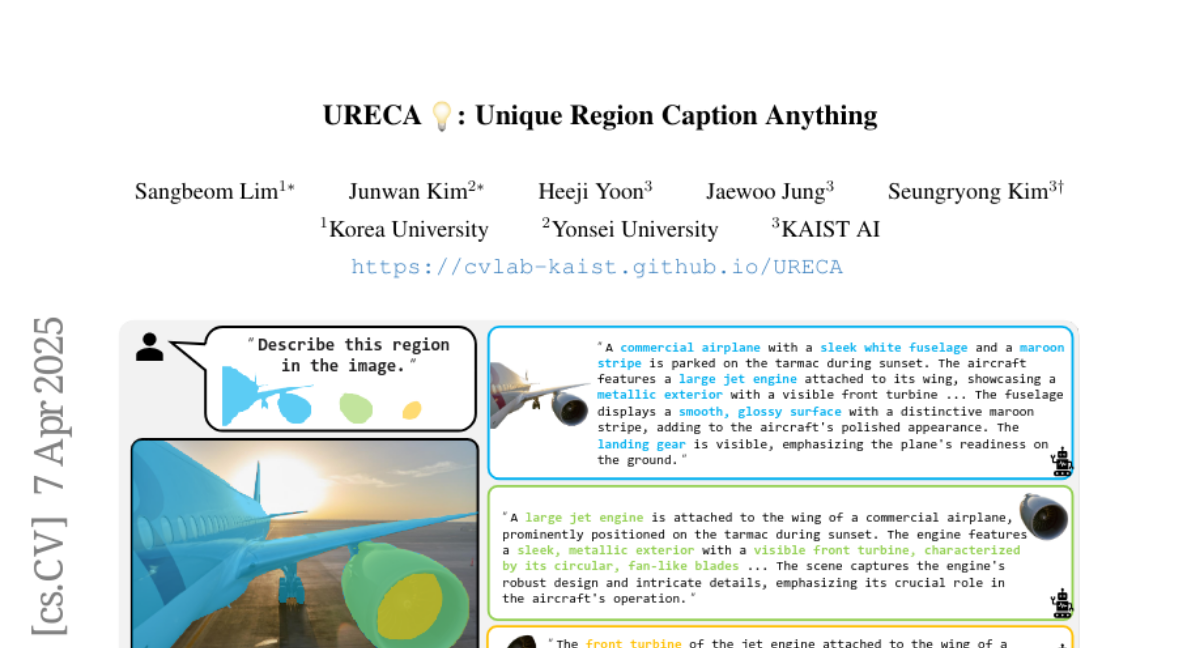

URECA: Unique Region Caption Anything

Sangbeom Lim, Junwan Kim, Heeji Yoon, Jaewoo Jung, Seungryong Kim

2025-04-08

Summary

This paper introduces URECA, a model that writes detailed captions for specific regions of an image, such as a dog's ear or a single tree branch, and focuses on what sets each region apart.

What's the problem?

Existing region captioning models often give near-identical descriptions to different regions of an image or miss small details, which makes them less useful for real-world tasks such as medical imaging or product descriptions.

What's the solution?

URECA pairs a large training dataset containing millions of image regions with a model that preserves each region's position and shape, so it can write unique captions for everything from tiny details to large objects.

Why does it matter?

This helps create better AI tools for doctors analyzing scans, online shopping product tags, and photo editing software that needs precise descriptions of image areas.

Abstract

Region-level captioning aims to generate natural language descriptions for specific image regions while highlighting their distinguishing features. However, existing methods struggle to produce unique captions across multiple granularities, limiting their real-world applicability. To address the need for detailed region-level understanding, we introduce the URECA dataset, a large-scale dataset tailored for multi-granularity region captioning. Unlike prior datasets that focus primarily on salient objects, the URECA dataset ensures a unique and consistent mapping between regions and captions by incorporating a diverse set of objects, parts, and background elements. Central to this is a stage-wise data curation pipeline, where each stage incrementally refines region selection and caption generation. By leveraging Multimodal Large Language Models (MLLMs) at each stage, our pipeline produces distinctive and contextually grounded captions with improved accuracy and semantic diversity. Building upon this dataset, we present URECA, a novel captioning model designed to effectively encode multi-granularity regions. URECA maintains essential spatial properties such as position and shape through simple yet impactful modifications to existing MLLMs, enabling fine-grained and semantically rich region descriptions. Our approach introduces dynamic mask modeling and a high-resolution mask encoder to enhance caption uniqueness. Experiments show that URECA achieves state-of-the-art performance on the URECA dataset and generalizes well to existing region-level captioning benchmarks.
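
To make the idea of a "unique mapping between regions and captions" concrete, here is a minimal Python sketch that flags regions of one image sharing the same caption. It is an illustration only, not the authors' curation pipeline or evaluation metric: the function name `find_duplicate_captions`, the region identifiers, and the example captions are all hypothetical, and uniqueness is approximated by exact match after normalizing case and whitespace.

```python
from collections import defaultdict


def find_duplicate_captions(region_captions):
    """Return captions that are shared by more than one region.

    `region_captions` maps a (hypothetical) region id to its caption.
    Captions are compared after lowercasing and collapsing whitespace,
    which is a deliberately crude stand-in for a real uniqueness check.
    """
    regions_by_caption = defaultdict(list)
    for region_id, caption in region_captions.items():
        normalized = " ".join(caption.lower().split())
        regions_by_caption[normalized].append(region_id)
    # Keep only captions that fail to single out one region.
    return {
        caption: region_ids
        for caption, region_ids in regions_by_caption.items()
        if len(region_ids) > 1
    }


# Hypothetical captions for four regions of a single image.
example = {
    "dog_left_ear": "the left ear of the brown dog, folded forward",
    "dog_right_ear": "the right ear of the brown dog, standing upright",
    "branch_1": "a tree branch",
    "branch_2": "A tree  branch",
}
duplicates = find_duplicate_captions(example)
```

In this toy input, the two ear captions stay distinct because they mention position and pose, while the two branch regions collapse to the same generic caption, which is the failure mode the abstract describes.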