V-MAGE: A Game Evaluation Framework for Assessing Visual-Centric Capabilities in Multimodal Large Language Models

Xiangxi Zheng, Linjie Li, Zhengyuan Yang, Ping Yu, Alex Jinpeng Wang, Rui Yan, Yuan Yao, Lijuan Wang

2025-04-09

Summary

This paper talks about V-MAGE, a video game test suite that checks how well AI models understand and react to moving visuals, like tracking objects or making quick decisions in games.

What's the problem?

Current tests for visual AI are too simple and don’t challenge models with real-world tasks like following fast-moving objects or planning ahead in dynamic scenes.

What's the solution?

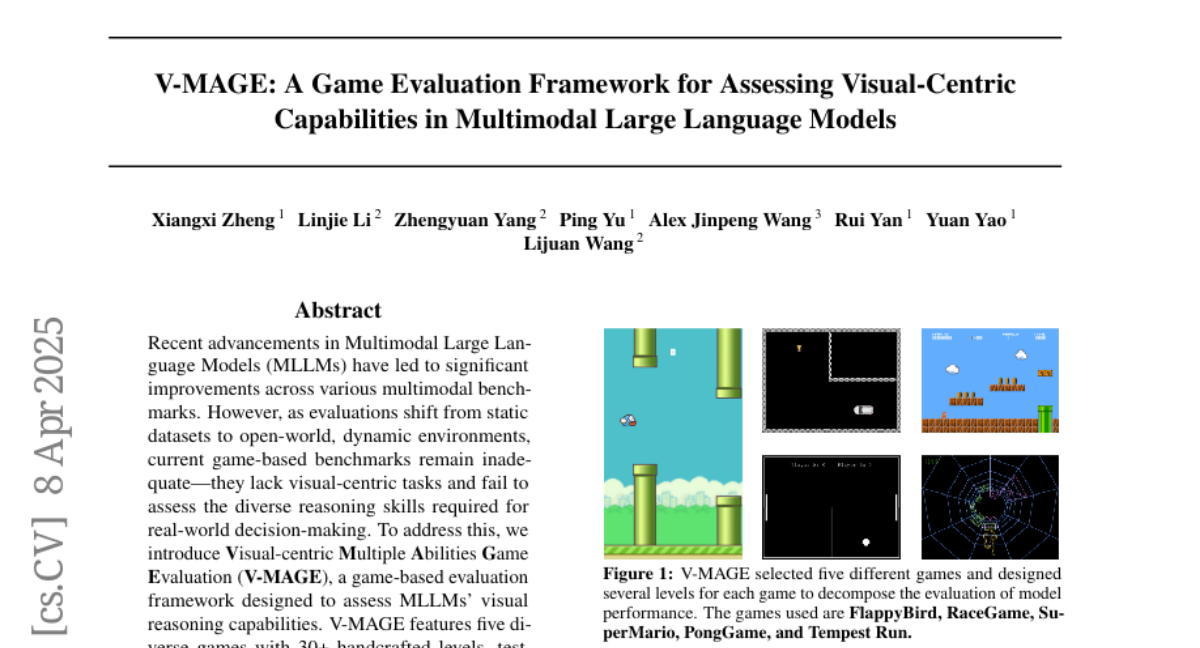

V-MAGE uses five games (like Flappy Bird and racing games) with 30+ levels to test AI on skills like tracking moving targets, timing jumps, and memorizing layouts, revealing where models struggle compared to humans.

Why it matters?

This helps improve AI for real-world tasks like self-driving cars that need sharp visual reflexes or robots that navigate busy environments, by identifying weaknesses in current visual reasoning systems.

Abstract

Recent advancements in Multimodal Large Language Models (MLLMs) have led to significant improvements across various multimodal benchmarks. However, as evaluations shift from static datasets to open-world, dynamic environments, current game-based benchmarks remain inadequate because they lack visual-centric tasks and fail to assess the diverse reasoning skills required for real-world decision-making. To address this, we introduce Visual-centric Multiple Abilities Game Evaluation (V-MAGE), a game-based evaluation framework designed to assess visual reasoning capabilities of MLLMs. V-MAGE features five diverse games with 30+ handcrafted levels, testing models on core visual skills such as positioning, trajectory tracking, timing, and visual memory, alongside higher-level reasoning like long-term planning and deliberation. We use V-MAGE to evaluate leading MLLMs, revealing significant challenges in their visual perception and reasoning. In all game environments, the top-performing MLLMs, as determined by Elo rating comparisons, exhibit a substantial performance gap compared to humans. Our findings highlight critical limitations, including various types of perceptual errors made by the models, and suggest potential avenues for improvement from an agent-centric perspective, such as refining agent strategies and addressing perceptual inaccuracies. Code is available at https://github.com/CSU-JPG/V-MAGE.