V-STaR: Benchmarking Video-LLMs on Video Spatio-Temporal Reasoning

Zixu Cheng, Jian Hu, Ziquan Liu, Chenyang Si, Wei Li, Shaogang Gong

2025-03-18

Summary

This paper introduces V-STaR, a new benchmark to evaluate how well Video Large Language Models (Video-LLMs) can reason about videos, specifically focusing on their understanding of space and time.

What's the problem?

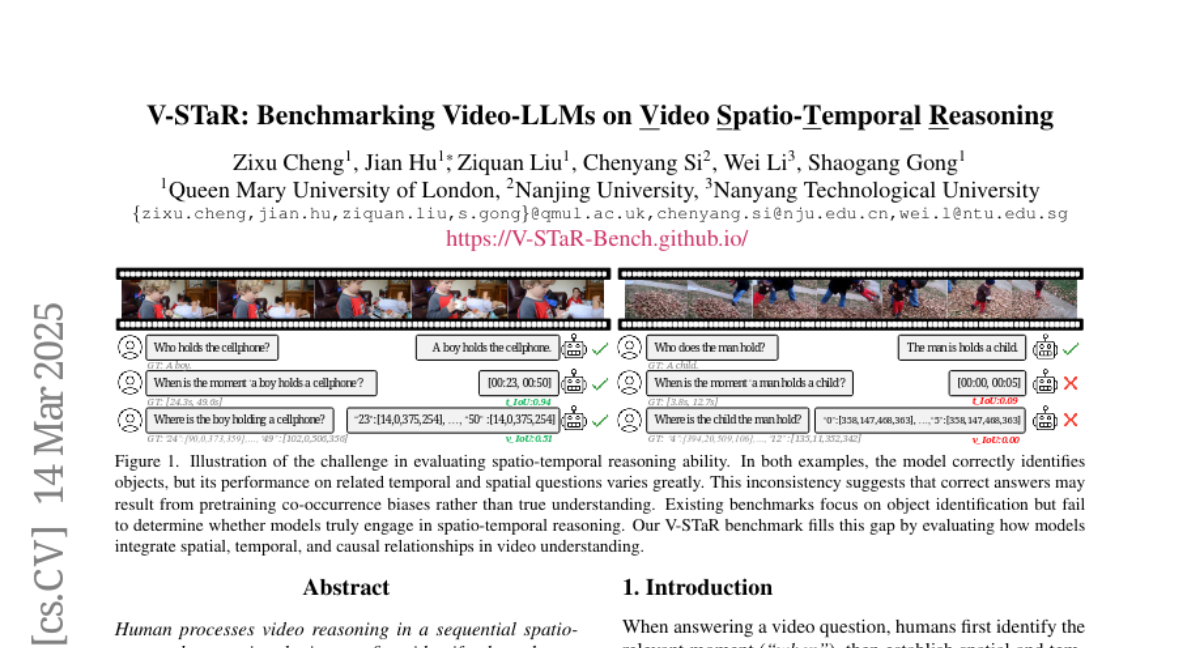

Existing benchmarks for Video-LLMs mainly test if the models can recognize objects in videos but don't assess if they truly understand the relationships between objects and events over time. This makes it hard to know if a model is actually reasoning or just relying on its memory of what usually happens.

What's the solution?

V-STaR decomposes video understanding into a Reverse Spatio-Temporal Reasoning (RSTR) task. This task evaluates the model's ability to identify what objects are present, when events occur, and where they are located, all while capturing the logical steps the model takes to reach its conclusions. To support this evaluation, the authors built a dataset of coarse-to-fine chain-of-thought questions, generated by a semi-automated GPT-4-powered pipeline and designed to mimic human reasoning.

Why it matters?

This work matters because it provides a more thorough way to test Video-LLMs and pushes the field towards developing AI that can truly understand and reason about videos, which is essential for applications like video search, analysis, and creating more intelligent video-based assistants.

Abstract

Humans process video reasoning with a sequential spatio-temporal logic: we first identify the relevant frames ("when"), then analyse the spatial relationships ("where") between key objects, and finally leverage these relationships to draw inferences ("what"). However, can Video Large Language Models (Video-LLMs) also "reason through a sequential spatio-temporal logic" in videos? Existing Video-LLM benchmarks primarily focus on assessing object presence, neglecting relational reasoning. Consequently, it is difficult to measure whether a model truly comprehends object interactions (actions/events) in videos or merely relies on pre-trained "memory" of co-occurrences as biases in generating answers. In this work, we introduce a Video Spatio-Temporal Reasoning (V-STaR) benchmark to address these shortcomings. The key idea is to decompose video understanding into a Reverse Spatio-Temporal Reasoning (RSTR) task that simultaneously evaluates what objects are present, when events occur, and where they are located while capturing the underlying Chain-of-Thought (CoT) logic. To support this evaluation, we construct a dataset to elicit the spatio-temporal reasoning process of Video-LLMs. It contains coarse-to-fine CoT questions generated by a semi-automated GPT-4-powered pipeline, embedding explicit reasoning chains to mimic human cognition. Experiments on 14 Video-LLMs on V-STaR reveal significant gaps between current Video-LLMs and the need for robust and consistent spatio-temporal reasoning.
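To make the "what / when / where" decomposition concrete, the following is a minimal sketch of how one answer chain could be scored against ground truth. It is not the benchmark's actual evaluation code: the abstract does not specify the metrics, so the choices here (exact-match for "what", temporal IoU for "when", box IoU for "where") are assumptions, as are the names `ChainAnswer`, `temporal_iou`, `box_iou`, and `score_chain`. It also simplifies "where" to a single bounding box rather than a box per frame.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

Span = Tuple[float, float]               # (start_seconds, end_seconds)
Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def temporal_iou(pred: Span, gt: Span) -> float:
    """Intersection-over-union of two time intervals."""
    inter = max(0.0, min(pred[1], gt[1]) - max(pred[0], gt[0]))
    union = (pred[1] - pred[0]) + (gt[1] - gt[0]) - inter
    return inter / union if union > 0 else 0.0


def box_iou(pred: Box, gt: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    inter_w = max(0.0, min(pred[2], gt[2]) - max(pred[0], gt[0]))
    inter_h = max(0.0, min(pred[3], gt[3]) - max(pred[1], gt[1]))
    inter = inter_w * inter_h
    area_pred = (pred[2] - pred[0]) * (pred[3] - pred[1])
    area_gt = (gt[2] - gt[0]) * (gt[3] - gt[1])
    union = area_pred + area_gt - inter
    return inter / union if union > 0 else 0.0


@dataclass
class ChainAnswer:
    """Hypothetical container for one what/when/where answer chain."""
    what: str    # answer to the "what" question
    when: Span   # predicted or annotated time segment
    where: Box   # predicted or annotated box (single-box simplification)


def score_chain(pred: ChainAnswer, gt: ChainAnswer) -> Dict[str, float]:
    """Score each link of the chain separately (assumed metrics)."""
    return {
        "what": float(pred.what.strip().lower() == gt.what.strip().lower()),
        "when": temporal_iou(pred.when, gt.when),
        "where": box_iou(pred.where, gt.where),
    }


if __name__ == "__main__":
    gt = ChainAnswer("a dog", (5.0, 15.0), (1.0, 1.0, 3.0, 3.0))
    pred = ChainAnswer("A dog", (0.0, 10.0), (0.0, 0.0, 2.0, 2.0))
    print(score_chain(pred, gt))
```

Scoring each link separately, rather than collapsing the chain into one number, is what lets an evaluation of this kind distinguish a model that answers "what" correctly but cannot ground the answer in time or space from one that reasons consistently across all three.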