VerIF: Verification Engineering for Reinforcement Learning in Instruction Following

Hao Peng, Yunjia Qi, Xiaozhi Wang, Bin Xu, Lei Hou, Juanzi Li

2025-06-15

Summary

This paper talks about VerIF, a new way to improve reinforcement learning (RL) for AI models that follow instructions. It combines two verification methods: one uses clear, rule-based code to check strict rules, and the other uses large language models to check softer, more complex constraints. This hybrid approach helps the AI learn better and follow instructions more accurately.

What's the problem?

The problem is that teaching AI models to follow many different and sometimes tricky instructions is hard because you need a reliable way to check if the model’s responses actually meet all the rules and requirements. Some rules are easy to check with code, but others need deeper understanding, and existing methods don’t combine both well, limiting performance and generalization.

What's the solution?

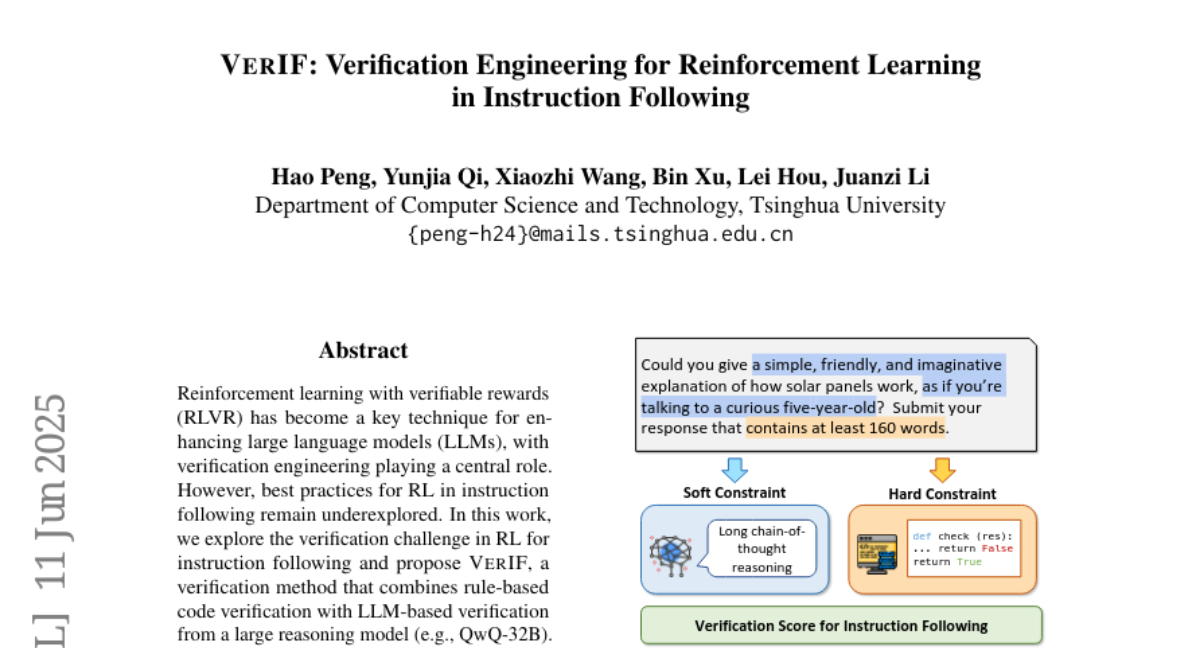

The solution was to create VerIF, which uses rule-based code checking for simple, strict constraints like length or format, and large language models for complex, soft constraints like style or meaning. They built a big dataset with many instructions and verification signals, then used reinforcement learning where the reward comes from this two-part verification process. This improves how well the AI learns to follow instructions while also generalizing to new rules it hasn’t seen before.

Why it matters?

This matters because better verification in instruction-following AI means more accurate and reliable models that can handle many kinds of tasks. It helps AI adapt to complex instructions safely and effectively, improving their usefulness in applications like virtual assistants, customer service, and automated programming.

Abstract

VerIF, a hybrid verification method combining rule-based and LLM-based approaches, enhances instruction-following RL with significant performance improvements and generalization.